How would you judge the quality of the testing process within any application development? In most cases, the application will eventually get into production whatever the test regime, but the quality of testing will more directly affect the time it takes to develop and test the application, the number of people required, the volume of change requests that were initiated during the project and the number of problems encountered after the application was deployed to production. Test quality matters.

Regardless of how complex the system is, or how many people are assigned to the testing activities, the quality of testing is determined by the preparation, by which I mean the actual planning and preparation of each test stage. To make this preparation effective, the governance of any IT project must involve the testing team from the beginning of the project.

Testing teams have a strong role in the initial governance process at the project initiation stage. Much of this work is done before the development teams start on the delivery of the application. Because testers have the mind-set and training to find inconsistencies between the business requirements and the specification of the application, they are well placed to do static document reviews: to identify obvious gaps in the specification of the requirements, to check for inconsistencies in the requirement documents and models, and to understand the requirements and solution early enough to prepare effective testing strategies and test approaches. Testers can also assist with estimating and quantifying project timings and resource requirements by bringing their experience and knowledge of the test process. The test plan needs to be assembled, documented, and agreed with both business and technical teams.

In most large development projects, the testers are brought into the team far too late. In one banking project that I was involved with, the testing team was brought in just before the design of the project was completed.

This gave very little time to prepare for the testing cycle, which involved system tests, integration tests, user acceptance tests, operational acceptance tests, and disaster recovery tests. The system was supposed to ‘go live’ within six months, but instead it took more than a year to complete testing. When it went live, it did so without us having had the time to conduct operational acceptance or disaster recovery tests. So, what went wrong? The testing team joined the project after the requirements had been signed off. When they arrived, the design and build process was under way. Without expert input, management had underestimated the scope of testing and the resources that would be required. Throughout development, there was constant demand from the business for change requests, and the test team was overwhelmed with the task of identifying and documenting gaps in the solution in addition to plain bugs.

When faced with this unexpected task of having to identify the many areas where the application failed to meet the needs of the business, I had to re-evaluate the entire test approach. Just to complete the functional test phases (System, System Integration and User Acceptance Test) and to gain business sign-off, we had to increase the testing resource by pulling in more business representatives. We also had to make the difficult choice of reducing the test coverage. This meant agreeing with the business which critical functions had to be verified before the system under test could be moved to production. It also meant gaining agreement with the key business stakeholders that we would test other areas, such as Disaster Recovery, within a specific time frame after going live.

This could have been avoided if the testing team had been assembled and represented at the project initiation stage. This would have allowed the testers to warn of impending problems before they became expensive mistakes. In this case, they would have been in a position to identify the mismatches between the application specifications and the business requirements before development started.

Another testing project that I managed was an SAP Global Template (GT) for a biochemical organization. I was consulted as a testing subject-matter expert, providing testing frameworks, governance, control, and strategic process to the entire team. The project had several layers of delivery teams: an implementation partner who was responsible for the core SAP modules; several third-party suppliers who were responsible for additional solutions such as vendor invoice management and printing barcode labels; and, on top of that, the inevitable legacy applications that needed to be integrated with the global template applications. The GT application, third-party applications and legacy systems were all passed through Quality Assurance (QA) in a test environment before the system under test was promoted to production.

As part of my approach, I established a core testing team that was staffed with a mixture of business and technical people. With this team, I conducted a static review of requirements and identified potential gaps between the planned functionality and business requirements before we started creating the test cases. Although this approach was helpful in identifying test conditions and coverage, and allowed developers to bridge the gaps, it was not by itself sufficient preparation. For preparing the individual test cases, and for mapping out the test conditions and coverage, we used a wide range of test-design techniques. These included a mix of black-box, white-box, experience-based and risk-based approaches to ensure there was adequate test coverage. These test design techniques were used concurrently in each test phase.

Successfully implementing Quality Testing

The testing cycle that we adopted was incremental, even though the project was based on a V-Model delivery approach. By doing this, we were able to concentrate our testing efforts in stages as development was completed, while keeping the flexibility to change aspects of the initial design in response to the wishes of the business during system integration. By the time we got to user acceptance testing (UAT), the business was well aware of how closely the solution matched the requirements.

Once test execution started, we produced a daily testing report that compared the test execution plan with the actual results in terms of the defects logged, assigned, fixed, retested, and closed. This was produced for each test phase. We then released a final Test-Closure report, which was used to sign off the current test phase before we moved on to the next.

This SAP Global Template (GT) project was a greenfield implementation within the organization, rather than a replacement. This affected the way we needed to approach testing. As part of my overall test approach and the deployment release plan, the initial release testing was a manual process, and the subsequent releases were a mixture of manual and automated tests.

It is always more efficient to automate tests that must be re-run, but I opted for this more manual approach mainly because the solution was new to the organization and we were able to contribute to the training process of the organization by also performing the manual tests at the beginning. It also increased confidence within the business that the solution met their requirements before moving it to production.

In my experience, a mixture of manual and automated tests is most effective, but it is important to judge, at any particular point in the test process lifecycle, whether a manual or an automated test is more appropriate. There are various aspects to this decision. Automated testing is useful if the team or business that will eventually use the application does not require end-to-end training and if the system under test is not an entirely new development. Automation is also the most effective and efficient way to conduct regression testing when a previous change release has already gone through other test phases, for example system integration and user acceptance. The new change being introduced is first tested in a QA environment before being promoted to coexist with other systems in an environment that is most similar to production, called ‘Pre-Production’ or ‘Pre-Live’. The release is then verified in its wider context before it is promoted to production as part of ‘business as usual’ activity.
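The manual-versus-automated judgment described above could be written down as a small rule of thumb. The following is only a simplified sketch of those criteria: the function name `suggest_approach`, its parameters and the order in which the rules are applied are assumptions made for illustration, and a real decision would weigh more factors than three yes/no answers.

```python
def suggest_approach(needs_end_to_end_training, is_new_system, is_regression):
    """Suggest 'manual' or 'automated' from three yes/no project facts.

    The rule order is an assumption made for this sketch.
    """
    if is_regression:
        # Re-running checks on a release that has already been through
        # other test phases favors automation.
        return "automated"
    if needs_end_to_end_training or is_new_system:
        # Manual runs double as training and build business confidence.
        return "manual"
    return "automated"


# Initial release of a system that is new to the organization.
first_release = suggest_approach(True, True, False)

# Later regression cycle on the same system.
later_regression = suggest_approach(False, True, True)
```

In practice the answer is often a mixture, so a helper like this is better used per test case or per test suite than per project.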

Six Major Steps to Consider when Implementing an End-to-End Testing Process

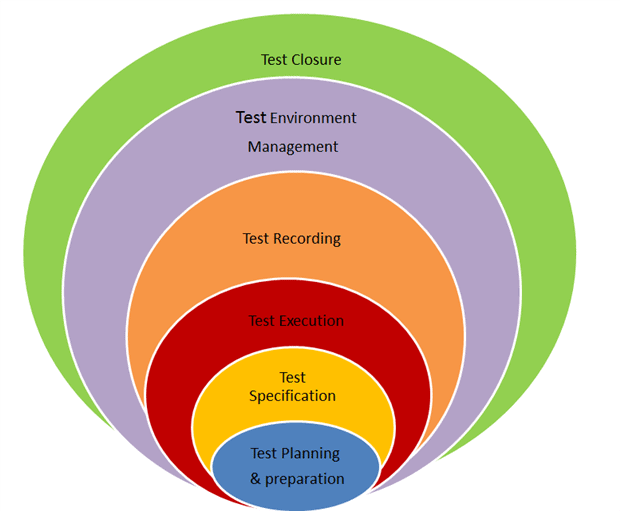

Similar to a project management process, quality testing has to be implemented through effective planning, coordination, execution, control, reporting and closure of each test phase.

During each test phase, the six defined, interchangeable testing process stages below are required in order to initiate, manage, control, monitor and report testing activities.

Test Planning/Preparation

All preparatory work is conducted in this stage. The preparatory work includes:

- reviewing project documentation

- gathering test requirements from business requirements, design and build solution documents

- creating test documentation such as:

  - test strategy

  - test plan

  - test conditions/scenarios

  - test cases

  - test scripts/steps

For a Test Manager or QA consultant, this is the first stage. It sets the precedent for who, what, how and when things will be done during testing. A test strategy provides the direction and approach for performing the various testing phases. In most cases, depending on the complexity of the solution under test, a separate test approach document is written for each test phase to provide more granularity. The test strategy outlines what is in scope, what is out of scope and how a test plan will be generated. It could also provide the order in which the test plan is executed: either in a sequential order or on an ad hoc basis. Test plans are typically created from two angles:

- Requirement documentation: this provides a clear description of what the business requirements and business objectives are, including what the business hopes to achieve. This is important in order to clearly define the overall success criteria that the business will use to measure the exit criteria during the user acceptance testing phase.

- Solution documentation: this is typically used by all the teams within the project to verify that the system architecture and system integration are fit for purpose and are not detrimental to the existing IT infrastructure.

A test approach expands further on what was documented in the test strategy. For instance, it will detail how testing activities will be carried out, and state whether manual tests, automated tests or both will be included. During this process, a testing team will also conduct static document reviews. These aim to identify any bugs, inconsistencies or defects before the application is built.

Test Specification

This process stage usually overlaps with the earlier planning/preparation stage because it uses the defined test strategy and approach as the basis for choosing the test design techniques to adopt for a test phase. Test analysis and design is a critical activity in the testing life cycle. It enables the testing team to identify the following:

- test requirements

- test coverage

- test conditions and test cases

- test procedures (scripts)

A testing team will typically review requirements and solution design documents to identify which test phases to conduct. When performing test analysis, a testing team checks the test basis (requirements) to see whether all the required documents are detailed and accurate enough to derive the test conditions from them. The development of the Test Execution Plan helps to document how and when the test cases and procedures (scripts or steps) are to be run. During the test specification process, a testing team can use one or more test design techniques to formulate its test cases. The techniques may include static reviews, equivalence partitioning, user stories, experience-based, risk-based and structure-based techniques, or defect clustering, to determine the coverage and prioritization of testing effort. The test plan will describe the work required to carry out the tests, ordered by priority and by the planned amount of effort. Once test cases and scripts are created, the testing team should ensure that traceability between tests and requirements is always maintained in the testing tool. There are various testing tools available on the market today that you can use for test preparation, management, execution and reporting.
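Traceability between tests and requirements is normally handled by the testing tool, but the underlying idea is simple enough to sketch. The example below assumes that requirements and test cases can be exported as plain identifiers; `REQUIREMENTS`, `TEST_CASES`, `build_traceability` and `untested_requirements` are hypothetical names that do not come from any particular tool.

```python
# Hypothetical requirement IDs and test cases; in practice these would be
# exported from whichever test management tool is in use.
REQUIREMENTS = ["REQ-001", "REQ-002", "REQ-003"]

# Each test case lists the requirement IDs it verifies.
TEST_CASES = {
    "TC-01": ["REQ-001"],
    "TC-02": ["REQ-001", "REQ-003"],
}


def build_traceability(requirements, test_cases):
    """Map every requirement to the sorted list of test cases covering it."""
    matrix = {req: [] for req in requirements}
    for test_id, covered in test_cases.items():
        for req in covered:
            # Ignore links to requirements that are not in the baseline.
            if req in matrix:
                matrix[req].append(test_id)
    return {req: sorted(tests) for req, tests in matrix.items()}


def untested_requirements(matrix):
    """Return the requirements that no test case traces back to."""
    return [req for req, tests in matrix.items() if not tests]


matrix = build_traceability(REQUIREMENTS, TEST_CASES)
gaps = untested_requirements(matrix)
print(gaps)
```

A requirement that appears in `gaps` is a coverage gap to raise before execution starts, which is much cheaper than discovering it during user acceptance testing.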

Test Environment Management

This is a crucial element of the test management process. It is one of the areas that often gets forgotten or left until the actual test execution is about to start. During test preparation, while writing test cases and execution plans, we must identify, incorporate or create all the materials and system requirements that would enable the system under test to be validated properly. These include the master data, reference data, system access, and application interfaces. Ideally, this process should be part of the planning and preparation test stage. As part of the preparation of the test environment, the test management team would work with technical teams to ensure that the required types of data are loaded in the appropriate environment. The data used is usually one, or a combination, of these three types:

- Manual data – while testing, testers manually input information or records into testable fields, thereby building up a data set of their own entries.

- Scrambled or masked data sets – testers request such a data set from the technical teams. It is a cut of production-like data that has been scrambled in order to protect the real owners of the data. This is usually done to comply with data protection legislation.

- Real production data – this is loaded from production as it exists, without any scrambling or masking.

The choice between the above types of data usually depends on the type of testing requirement or scope identified during the preparation and specification stages.
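To make the scrambled or masked option more concrete, here is a minimal sketch of masking a production-like record with Python's standard `hashlib` module. The field names, the `salt` value and the helper names `mask_value` and `mask_record` are assumptions for illustration. A salted hash of this kind is a one-way transformation, but whether it is sufficient for a given data protection requirement is a question for the organization's own policy, not something this sketch can settle.

```python
import hashlib

# Hypothetical field names; a real extract will have its own schema.
SENSITIVE_FIELDS = ("name", "email")


def mask_value(value, salt):
    """Replace a value with a short, deterministic, one-way token."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return "masked_" + digest[:12]


def mask_record(record, salt, fields=SENSITIVE_FIELDS):
    """Return a copy of the record with the sensitive fields masked."""
    masked = dict(record)
    for field in fields:
        if field in masked and masked[field] is not None:
            masked[field] = mask_value(str(masked[field]), salt)
    return masked


production_row = {"customer_id": 42, "name": "Jane Doe", "email": "jane@example.com"}
test_row = mask_record(production_row, salt="per-project-secret")
```

Because the masking is deterministic, the same customer receives the same token in every table, so joins between masked data sets still work during integration testing.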

Most of the testing projects that I have managed require a QA, Pre-Prod and Production environment in order to validate and enhance the overall quality of the system under test before promoting into a live environment. There is usually a need for a separate environment for business as usual (BAU) test validation and verification activities.

Most of the organizations for which I have worked as a test manager tend to base their environment setup on the complexity of the system landscape, on demand, and on capability and resource management between projects and continuous Business As Usual (BAU) activities.

Here are some of the typical types of environments and their usage.

- Development Environment (Dev) – This is a developer’s environment where design and build activities are carried out and unit tested before being promoted to an actual test environment.

- Sandbox Environment – Testers, developers or other project team members have such an environment for training purposes. As the name implies, it is a playground area in which to try out development or testing techniques and build confidence before moving on to a Dev or QA environment.

- QA Test Environment – This is the initial environment to which systems, or applications that are under test, would be promoted after development work is complete. In this environment we carry out the individual components of the test as well as integrated systems verification. Testers use a QA environment to verify and validate the deployed solution. Here testers have an essential role in building confidence in the system under test before it is introduced to the business or end users.

- Pre-Production Environment – A Pre-Production environment is usually required for performance, user acceptance, regression and operational acceptance tests. This is because an environment that has similar infrastructure, similar master, reference and transactional data sets, and fully integrated systems and applications is required to effectively verify the solution before deployment into production. It is expensive to set up more than one Pre-Production environment, so the QA environment is used merely to validate the developed system in isolation rather than within the context of the organization at large. We also use Pre-Production as the final checkpoint for ‘Business-as-usual’ releases before production deployment.

- Disaster Recovery Environment (DR) – An environment dedicated to running disaster recovery tests, in order to validate the disaster recovery plan, has to be able to realistically simulate the potential system failures that could have serious fallout. More and more organizations find themselves unable to establish a fully functional DR test environment because of the huge cost of maintaining such an environment in terms of staff and infrastructure resources. Where there is a gap, the Pre-Production environment can be used.

- Production Environment – This is the real, live environment with the real transactional flow of data and real customers carrying out day-to-day operational business activities. Critical hot fixes could be applied directly into production when necessary, but they should then be retrofitted to the other environments.

- Business as Usual (BAU) Environment – A dedicated Business as Usual test environment, separate from the testing of project code or releases, is required to test the production-like changes that the business users have requested. The use of the BAU test environment needs to be carefully planned in conjunction with other project team members, such as a release, change or environment manager, who will ideally organize the delivery of the various BAU application releases based on the development team’s planning schedule.
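The main promotion route through these environments can be sketched as a simple ordered list with a gate on each step. The order used here (Dev, QA, Pre-Production, Production) is an assumption drawn from the descriptions above; the names `PROMOTION_PATH`, `next_environment` and `can_promote` are hypothetical, and the Sandbox, DR and BAU environments sit outside this main path.

```python
# Promotion order assumed from the environment descriptions; real
# landscapes differ from one organization to another.
PROMOTION_PATH = ["Dev", "QA", "Pre-Production", "Production"]


def next_environment(current):
    """Return the environment a release moves to next, or None at the end."""
    index = PROMOTION_PATH.index(current)  # raises ValueError if unknown
    if index + 1 < len(PROMOTION_PATH):
        return PROMOTION_PATH[index + 1]
    return None


def can_promote(current, target, signed_off):
    """Allow promotion only one step forward and only after sign-off."""
    return signed_off and next_environment(current) == target


# A release that has passed QA but is not yet verified in Pre-Production
# must not jump straight to Production.
skip_attempt = can_promote("QA", "Production", signed_off=True)
normal_step = can_promote("QA", "Pre-Production", signed_off=True)
```

A critical hot fix applied directly to production would be a deliberate exception to this gate, which is why it needs the retrofit to the other environments afterwards.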

Test Execution

Test execution is the action of running the test cases and procedures (scripts or steps) in accordance with the test execution schedule(s). It involves analyzing the results, comparing the actual results with the expected results, logging the results, and raising defect reports. This helps to demonstrate that the product satisfies the stated requirements, which are specified in the objectives of the test cycle or level as defined in the test plan. As part of test execution, we need to monitor the defect management process, re-test fixes and run regression tests. As part of these activities, we also have to update the execution schedule(s), traceability matrix, and test plan(s).
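The core loop of test execution (compare actual with expected, log the outcome, raise a defect on a mismatch) can be sketched in a few lines. The `ExecutedStep` class, the `execute` function and the defect fields are hypothetical names chosen for this example; a real testing tool records far more detail per step.

```python
from dataclasses import dataclass


@dataclass
class ExecutedStep:
    case_id: str
    expected: str
    actual: str


def execute(steps):
    """Compare actual with expected results; log outcomes and raise defects."""
    log, defects = [], []
    for step in steps:
        passed = step.actual == step.expected
        log.append((step.case_id, "Passed" if passed else "Failed"))
        if not passed:
            # A failed comparison becomes a defect report in status "New".
            defects.append({
                "test_case": step.case_id,
                "expected": step.expected,
                "actual": step.actual,
                "status": "New",
            })
    return log, defects


steps = [
    ExecutedStep("TC-01", "Invoice posted", "Invoice posted"),
    ExecutedStep("TC-02", "Label printed", "Printer error"),
]
log, defects = execute(steps)
```

Keeping the test case identifier on each defect is what later allows the traceability matrix and the execution schedule to be updated from the defect log.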

Test Recording

There are different styles of producing test reports for different audiences. The Testing team is able to provide some useful metrics that could indicate the direction of development progress by regularly reporting:

- Number of planned vs. executed test cases

- Number of defects found

- Number of each severity defect found

- Number of defects by status for example New, Assigned, Fixed, Rejected or Closed

- Number of defects found in each testing phase

- Number of each severity defect found in each phase

- Number of defects detected/time period, per severity & per tester
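Most of the metrics listed above are simple counts over the defect log. The sketch below assumes the defects are available as a list of records; the field names and the `summarize` function are illustrative only and are not tied to any particular tool.

```python
from collections import Counter

# Hypothetical defect records; the field names are illustrative only.
DEFECTS = [
    {"id": 1, "severity": "High", "status": "New", "phase": "System"},
    {"id": 2, "severity": "Low", "status": "Fixed", "phase": "System"},
    {"id": 3, "severity": "High", "status": "Closed", "phase": "UAT"},
]


def summarize(defects, planned, executed):
    """Build the counts that a regular test report would typically show."""
    return {
        "planned": planned,
        "executed": executed,
        "execution_pct": round(100 * executed / planned, 1) if planned else 0.0,
        "total_defects": len(defects),
        "by_severity": dict(Counter(d["severity"] for d in defects)),
        "by_status": dict(Counter(d["status"] for d in defects)),
        "by_phase": dict(Counter(d["phase"] for d in defects)),
    }


report = summarize(DEFECTS, planned=40, executed=30)
```

The same function can be run once per test phase, or once per day, to produce the trend that different audiences want to see.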

As a test manager, I am determined to find potential defects in test before they get into the production application. Whether you are using Excel, a testing tool or an application lifecycle management (ALM) tool, the common statuses and sub-statuses listed below give a clear indication of how to categorize defects. I find that this helps developers and project teams to fully understand the types of issues associated with the system under test.

| Status | Sub-Status | Description |
| --- | --- | --- |
| Assigned | Deferred | Defect has been assigned but any work to correct the defect has been deferred |
| Assigned | Work in Progress (WIP) | Assigned resource has received the defect and is currently working on it |
| Rejected | Duplicate | Defect has been investigated and is discovered to be a duplicate of one previously raised |
| Rejected | Functions as Designed | Defect is deemed to function as it was intended to (or as designed) |
| Rejected | Test Case/Test Script/Test Data Error | There appears to be an error with the test script or the associated test data |
| Rejected | Not a Defect | Defect is deemed to not be a defect |
| Rejected | Unable to Reproduce | The person investigating the defect is unable to reproduce it |
| Rejected | Raised in Error | Defect was raised by mistake |
| Rejected | Other | All other defects |
| Fixed | Ready for Build | Action has been taken or changes have been made to resolve the defect and the resulting change has been placed back into source control and is awaiting the Build Management process |
| Fixed | Ready for Release | The Build Management process has been applied to the change and it is now awaiting release into the relevant Test environment |
| Fixed | Deferred | Defect is fixed but is deferred for further investigation or for a later release or requires another pending defect to be fixed and released first |
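When defects are tracked in a spreadsheet rather than a dedicated tool, a small validation helper can stop invalid status and sub-status combinations from creeping in. The sketch below transcribes the pairs from the table above; the names `SUB_STATUSES` and `is_valid` are hypothetical.

```python
# Status to sub-status mapping transcribed from the table above.
SUB_STATUSES = {
    "Assigned": {"Deferred", "Work in Progress (WIP)"},
    "Rejected": {
        "Duplicate",
        "Functions as Designed",
        "Test Case/Test Script/Test Data Error",
        "Not a Defect",
        "Unable to Reproduce",
        "Raised in Error",
        "Other",
    },
    "Fixed": {"Ready for Build", "Ready for Release", "Deferred"},
}


def is_valid(status, sub_status):
    """Check that a sub-status is allowed under the given status."""
    return sub_status in SUB_STATUSES.get(status, set())


ok = is_valid("Rejected", "Duplicate")
mismatch = is_valid("Fixed", "Duplicate")
```

Note that "Deferred" is valid under both Assigned and Fixed, so the status and sub-status always have to be read together.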

Test Closure

As confirmation that a test phase is completed, a testing team will issue an “End of Test Closure Report”. I have typically included the in-scope and out-of-scope items under test, the number of test cases, and the number of defects found. In my end-of-test report, I have also included the open defects per severity, any identified workarounds, a process to transition defects as part of service operation, and a lessons-learned report for potential process improvement.

Conclusions

As with project management, where initiation, design, controlling, monitoring and reporting are part of the process, whatever methodology you decide to use for application development, it is important that testing processes are introduced, implemented, managed and monitored as part of the overall governance process at the beginning of the lifecycle, rather than being introduced after development has started.

Not only does testing require meticulous preparation, but test teams are also well placed to monitor and check the delivery of business requirements, to ensure that project plans are aligned, and to quality check the strategies and technical documents that are essential for the timely delivery of an application to any organization. The objective is to identify requirements and design gaps in the specification that, unless caught early on, will cause excessive changes and problems in delivering the complete functionality. It is widely accepted that the earlier potential defects are found, the less costly they are overall, compared with finding them much later in the game, once you factor in the time, delays and effort it takes to fix them.

The test process is far easier if there has been adequate preparation and the necessary documentation is put in place sooner rather than later, so that the testing project can be delivered effectively.
