The current era of AI and Big Data is already considered by many to be the start of a fourth industrial revolution that will reshape the world in the years to come. Google searches, map navigation, voice assistants like Alexa, and personalized recommendations on portals like Facebook, Netflix, Amazon, and YouTube are just a few examples of artificial intelligence already playing an important role in our day-to-day lives, often without people even realizing it. One report suggests that the AI market will reach $169.41 billion by 2025.

But AI also has a negative side, one that poses great privacy and social risks. The risk lies in how some organizations collect vast amounts of user data and process it in their AI-based systems without the users’ knowledge or consent, which can lead to troubling social consequences.

Is Your Data Private?

Every time you search the internet, browse websites, or use mobile apps, you give away your data, either explicitly or without realizing it. Most of the time you have allowed these companies to collect and process your data legally, because you clicked the “I agree” button on the terms and conditions of their services.

Apart from the information that you explicitly submit to websites, such as your name, age, email addresses, contacts, videos, or photo uploads, you also allow them to collect your browsing behavior, clicks, likes, and dislikes. Reputable companies like Google and Facebook use this data to improve their services and do not sell it to anyone. Still, there have been instances where third-party companies have scraped sensitive user data through loopholes or data breaches. In fact, the sole intention of many companies is to collect user data by luring people into using their online services, and they then sell this data to third parties for vast amounts of money.

The situation has worsened with the surge in malicious mobile apps whose primary purpose is to collect data from the phone, including data they never sought permission to access. These are primarily data collection apps disguised as games or entertainment apps. Today’s smartphones contain very sensitive data like personal images, videos, GPS location, call history, and messages, and we do not even know that these mobile apps are stealing it. Every now and then, such malware apps are removed from the Google Play Store and the Apple App Store, but not before they have already been downloaded millions of times.

Why are Artificial Intelligence and Data Privacy a Big Concern?

People are becoming increasingly aware that their data is not safe online. However, most of them still do not realize the gravity of the situation when AI-based systems process their social data in an unethical manner.

Let us go through some well-known incidents to understand what type of risk we are talking about.

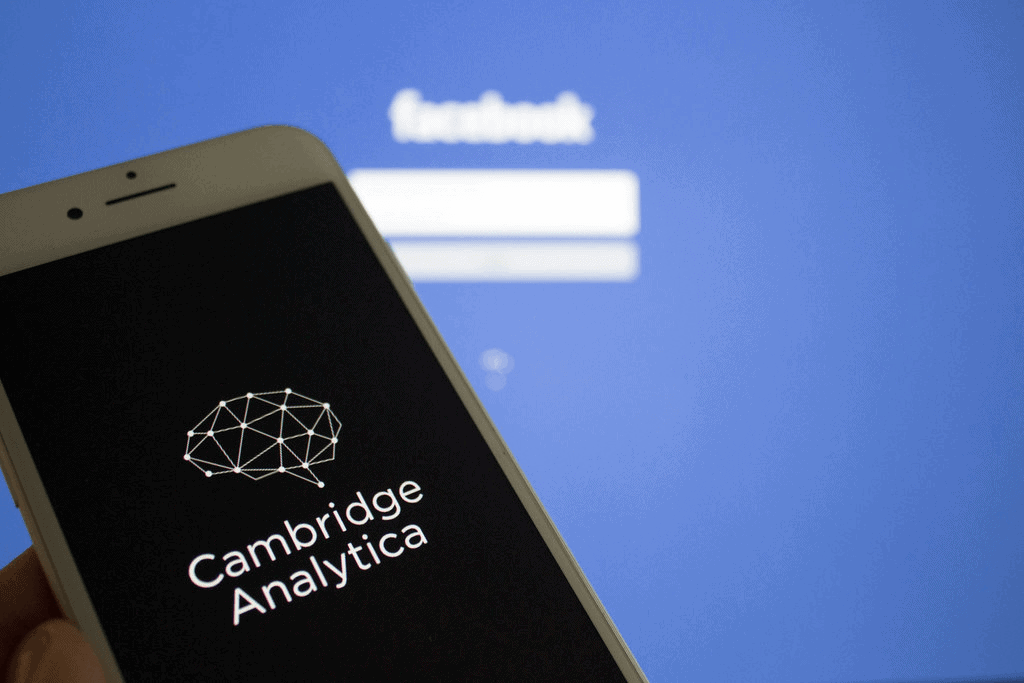

The Cambridge Analytica-Facebook Scandal

In 2018, news broke that the data analytics firm Cambridge Analytica had analyzed the psychological and social behavior of users through their Facebook likes and targeted them with ad campaigns for the 2016 US presidential election.

The issue was that Facebook does not sell its users’ data for such purposes. It was revealed that a developer had created a Facebook quiz app that exploited a loophole in a Facebook API to collect the data of users and of their friends as well. He then sold the data to Cambridge Analytica, which was accused of playing an important role in the outcome of the 2016 US presidential election by unethically obtaining and mining users’ data from Facebook. Worst of all, users of the quiz app would have blindly granted it every permission without knowing that they were exposing their friends’ data as well.

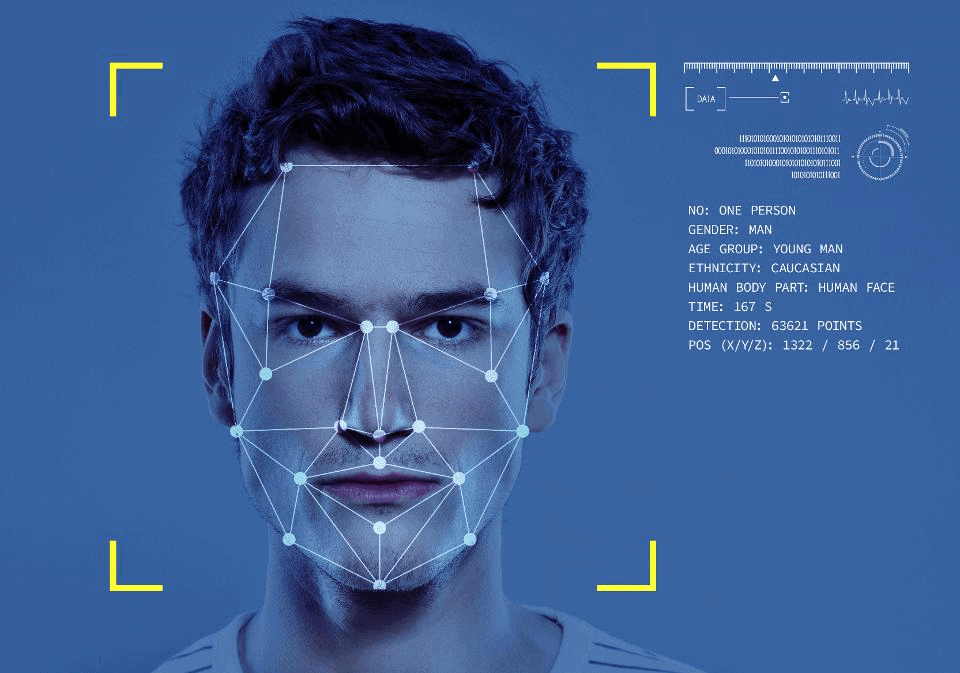

Clearview Face Recognition Scandal

Clearview is an artificial intelligence company that created a face recognition system to help police officers identify criminals. The company claims that its software has helped law enforcement agencies track down many pedophiles, terrorists, and sex traffickers.

But in January 2020, The New York Times published a long story about how Clearview had made a mockery of data privacy by scraping around three billion photos of users from platforms like Facebook, YouTube, Twitter, Instagram, and Venmo to build its AI system. Its CEO, Mr. Ton-That, claimed that the system only scraped public images from these platforms. Shockingly, in an interview with CNN Business, the software fetched images from the Instagram account of the show’s producer.

Google, Facebook, YouTube, and Twitter sent cease and desist letters to stop Clearview from scraping photos from their platforms. However, images that you uploaded online may already be included in that AI software without your knowledge. If this software gets into the hands of a rogue police officer, or if the system itself returns a false positive, many innocent people might fall prey to police investigations.
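
To see why false positives matter at this scale, here is a rough back-of-the-envelope sketch. The three billion figure is the one reported above; the false match rate is purely an assumed number for illustration and is not a measured property of Clearview or of any real system.

```python
# Rough illustration of how a tiny error rate adds up on a huge photo gallery.

GALLERY_SIZE = 3_000_000_000      # photos reportedly scraped (figure from the article)
ASSUMED_FALSE_MATCH_RATE = 1e-6   # hypothetical: one wrong match per million comparisons


def expected_false_matches(gallery_size: int, false_match_rate: float) -> float:
    """Expected number of wrong matches when one probe photo is compared
    against every photo in the gallery."""
    return gallery_size * false_match_rate


if __name__ == "__main__":
    wrong = expected_false_matches(GALLERY_SIZE, ASSUMED_FALSE_MATCH_RATE)
    print(f"Expected false matches per search: about {wrong:,.0f}")
```

Under these assumed numbers, a single search would be expected to surface thousands of photos of the wrong people, which is why even a system that is accurate on paper can put innocent people under suspicion.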

Deepfakes

A Deepfake puts one person’s face on another person’s body

Images and videos that are created using deep learning and show a real person doing or saying things they never did or said are called Deepfakes. When used for entertainment, Deepfakes can be fun, but people are also creating them to spread fake news and misinformation and, worse, to produce Deepfake porn.

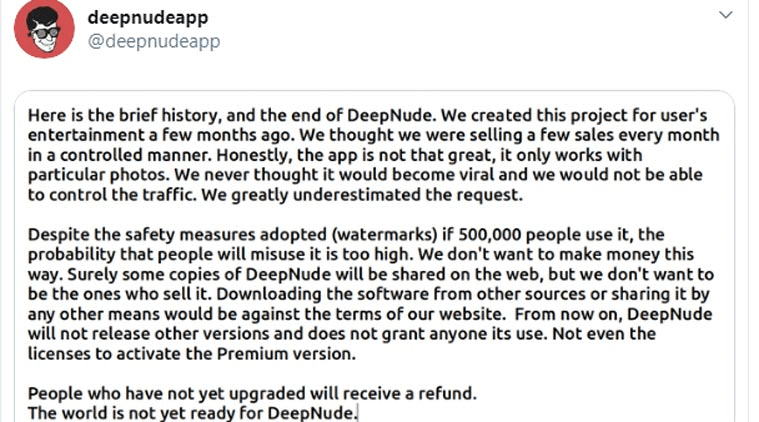

In 2019, an application called DeepNude was launched that let you upload any image of a woman and generated a realistic nude image from it. It is quite disturbing that anyone could exploit images of women available online by creating nude images with DeepNude. After considerable controversy, it was shut down. Still, it is only a matter of time before someone again misuses Deepfake technologies by taking your publicly available videos or photos.

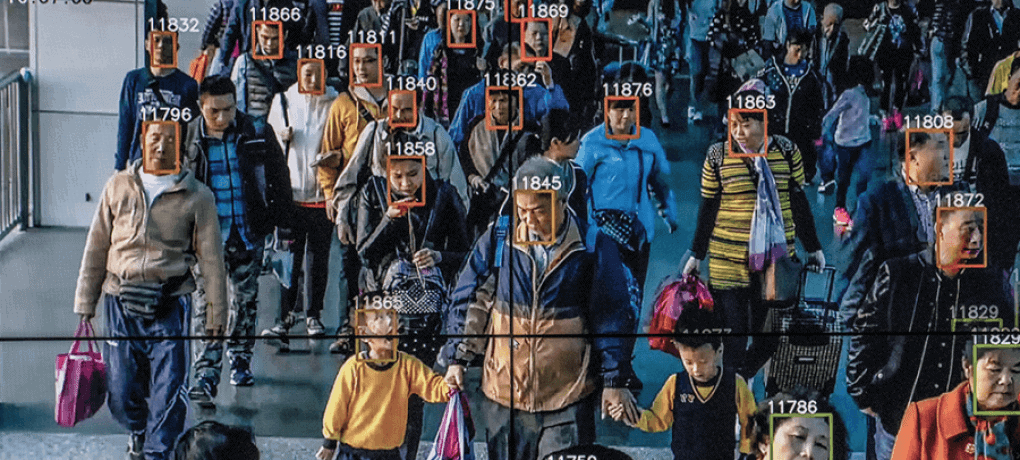

Mass Surveillance in China

In recent years, China has received severe criticism for the mass surveillance of its people without their consent. It uses over 200 million surveillance cameras and facial recognition to keep a constant watch on its people. China also mines the behavioral data captured by these cameras.

To make matters worse, China implemented a social credit system that rates the trustworthiness of its citizens based on this surveillance. People with high credit get more benefits, and people with low credit lose benefits. But the worst part is that all of this is determined by AI-based surveillance without people’s knowledge and consent.

How to Prevent Misuse of Data with AI

The above case studies should have made it clear that the unethical processing of private data with artificial intelligence can lead to very dangerous social consequences. Let us see how we can play our part to stop this malpractice of private data misuse with artificial intelligence.

Government Responsibility

Many countries have now created their own data regulation policies to bring more transparency between online platforms and their users. Most of these policies center on giving users more authority over what data they share and on informing them about how the platform will process that data. A well-known example is the GDPR, which took effect in the EU in May 2018. It gives people in the EU more control over their personal data and how companies process it.

Company Responsibility

Large companies like Google, Facebook, Amazon, Twitter, YouTube, Instagram, and LinkedIn hold a majority of users’ social data across the world. As reputable giants, they should be extra careful not to leak any data to malicious parties, whether intentionally or unintentionally.

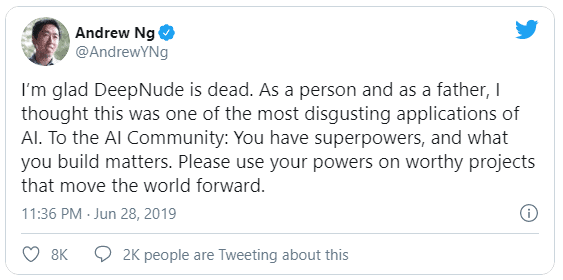

AI Community Responsibility

Members of the AI community, especially its thought leaders, should raise their voices against the unethical use of AI on users’ personal data without their knowledge. They should also educate the world that this practice can have a disastrous social impact. Many institutes already teach AI ethics as a subject and offer it as a course.

User’s Responsibility

Finally, we should remember that government regulations are just policies, and that the responsibility ultimately lies with us as individuals. We have to be careful about what data we upload to social platforms and mobile apps, and we should always inspect what permissions we give them to access and process our data. Let us not blindly “accept” whatever “terms and conditions” come our way on these online platforms.
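
As a minimal sketch of what inspecting permissions can look like in practice, the snippet below compares the permissions an app requests against what one might reasonably expect for its category. The permission strings are standard Android permission names, but the category baselines and the function name are hypothetical choices made for this illustration, not an official list or tool.

```python
# Flag sensitive permissions that an app requests beyond what its category needs.

# Hypothetical baseline of reasonable permissions per app category.
EXPECTED_PERMISSIONS = {
    "game": {"android.permission.INTERNET", "android.permission.VIBRATE"},
    "navigation": {
        "android.permission.INTERNET",
        "android.permission.ACCESS_FINE_LOCATION",
    },
}

# Permissions that expose the kind of personal data discussed in this article.
SENSITIVE_PERMISSIONS = {
    "android.permission.READ_CONTACTS",
    "android.permission.READ_SMS",
    "android.permission.READ_CALL_LOG",
    "android.permission.ACCESS_FINE_LOCATION",
    "android.permission.CAMERA",
    "android.permission.RECORD_AUDIO",
}


def unexpected_sensitive_permissions(category, requested):
    """Return the sensitive permissions requested beyond the category baseline."""
    expected = EXPECTED_PERMISSIONS.get(category, set())
    return sorted((set(requested) - expected) & SENSITIVE_PERMISSIONS)


if __name__ == "__main__":
    requested = [
        "android.permission.INTERNET",
        "android.permission.READ_CONTACTS",
        "android.permission.ACCESS_FINE_LOCATION",
    ]
    for permission in unexpected_sensitive_permissions("game", requested):
        print("Question this permission:", permission)
```

A simple game that asks for your contacts and precise location is exactly the kind of request worth pausing on before you tap “accept”.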

Conclusion

There are many concerns about ethics within the artificial intelligence community because of the social biases and prejudices AI can create. But processing personal data with AI without people’s consent, and then misusing it, takes the concerns about AI ethics to the next level. The emergence of AI in the real world is still at a nascent stage, and we all have to step up to make sure that building AI by misusing personal information does not become a regular occurrence in the future.
