This is the sixth in a series on setting up a Data Science Laboratory server – the first is located here. My plan is to set up a system that allows me to install and test various methods to store, process and deliver data. These systems range from simple text manipulation to Relational Databases and distributed file and compute environments. Where possible, I plan to install and configure the platforms and code locally. The outline of the series so far looks like this:

- Setting up the Data Lab

- Text Systems

- Testing the Tools

- Instrumentation

- Interactive Data Tools

- Programming and Scripting Languages (This article)

- Relational Database Management Systems

- Key/Value Pair Systems

- Document Store Databases

- Graph Databases

- Object-Oriented Databases

- Distributed File and Compute Data Handling Systems

I’ll repeat a disclaimer I’ve made in the previous articles – I do this in each one because it informs how you should read the series:

This information is not an endorsement or recommendation to use any particular vendor, software or platform; it is an explanation of the factors that influenced my choices. You can choose any other platform, cloud provider or other software that you like – the only requirement is that it fits your needs. As I always say – use what works for you. You can examine the choices I’ve made here, change the decisions to fit your needs and come up with your own system. The choices here are illustrative only, and not meant to sell you on a software package or vendor.

In this article, I’ll explain my choices for languages that interact directly with data using programming and scripting languages. This is an introductory article; I’ll follow it with practical examples of how to use each in another article.

My philosophy on information technology is this: “All computing is merely re-arranging data“. Every programming language or scripting interface deals with taking data as input, operating on that data, and returning or creating some output of the data. As such, almost any language is suitable for a data professional to learn and use with the data they need to compute. However, certain languages lend themselves especially well for working with certain types or sources of data, or processing the data in certain ways. In this article, I’ll explain a few of those and the situations I find that they fit best.

One final caveat – pretty much anything can be done with a given language. If there is a language you’re familiar with, you’re probably efficient with it and you should install and use that as your “go-to” language. Since this is a “lab” system, however, you should experiment with the languages I show here in addition to others that you find interesting. Experimentation is the entire point of building this particular system.

SQL

I’ll start with the Structured Query Language, or SQL. I separate out this language since it has attributes of higher-level languages such as functions and strong data-types, but lacks richer features such as graphical interface control, complete system functions, working with objects and the like. It’s also not technically a scripting language, as it is tied to a specific “engine” for interpretation of data calls.

That last point is important – SQL is an interpreted language (like scripting) meaning that there is a complete system needed to accept, change and execute and return the commands and their output. Higher-level languages are compiled into a binary package that is executed on a given architecture.

SQL is a declarative language paradigm, which means you write statements describing what you want, without coding how that will end up happening on the system. This is a very simple way of starting to work with data, and by layering these statements you can create very powerful constructs quickly and with just a little practice. SQL is also quite easy to read, if not a bit wordy for complex programs.

In most platforms SQL statements can be sent to the server to be executed, called “dynamic” SQL, or stored on the server and called with a function, stored procedure or other call, which is sometimes called “server-side” code. There are advantages and disadvantages to both, but in large part the syntax and processes are the same regardless. If you learn one, you can usually leverage most of that knowledge on the other.

I won’t spend a great deal of time explaining SQL in this article, since the readers of this series will no doubt be quite familiar with it. If you are new to SQL, there is a series of tutorials you can follow here that is quite useful: http://www.sqlcourse.com/index.html. The statements in the SQL language fall into categories:

- Data Definition Language (DDL): Used to create, alter and delete data objects such as tables, indexes and the like

- Data Manipulation Language (DML): Used to work with data, such as inserting, altering, and deleting or selecting data

- Data Control Language (DCL): Used to control the system, such as security elements

It’s important to point out that only a couple of very abstract systems use a “pure” (ANSI) form of SQL. SQL is actually quite limited in scope, and so each vendor that provides an engine uses a “dialect” of SQL. For SQL Server, which is the focus of this series, that dialect is “Transact-SQL” or T-SQL (http://msdn.microsoft.com/en-us/library/bb510741.aspx).

As far as an installation of software to input code for SQL, I normally use the Integrated Development Environment (IDE) included with the engine that uses SQL. In SQL Server, there are several options such as SQL Server Management Studio (http://msdn.microsoft.com/en-us/library/hh213248.aspx) and SQL Server Data Tools (http://msdn.microsoft.com/en-us/data/gg427686), which I’ll cover when I describe the Relational Database Management Systems installations in another article.

I also use the Notepad++ tool (http://notepad-plus-plus.org/) I described in an earlier article, since it has syntax coloring and a few other features, but for the most part I use the included tools for their tight integration with the product. Oracle, DB/2 and other vendor products include IDE’s in their installations as well.

References:

For more general information on the SQL language, see this reference:

http://msdn.microsoft.com/en-us/library/windows/desktop/ms714670%28v=vs.85%29.aspx

For the classes I teach in Transact-SQL, I typically use books from my friend Itzik Ben-Gan:

https://www.solidq.com/books-and-publications/

The primary verbs and nouns within the T-SQL language are here:

http://msdn.microsoft.com/en-us/library/bb510741.aspx

Programming Options for Interacting with Data

As I mentioned earlier, every programming language works with data in some form. In fact, the line between scripting and programming is quite blurred – Python, which I’ll describe in a moment, fits squarely in both camps. For the most part, however, most programming languages are compiled, meaning they are re-written into a binary format that runs on a particular computing architecture without any “engine” to run them. A scripting language, on the other hand, requires the engine of the scripting program to interpret the statements and then run them. Even this distinction has problems, however. Java is obviously a programming language, but requires the Java engine to run. Ditto for the .NET stack from Microsoft.

So what are the criteria that a data professional should use for choosing a programming interface to data, then? Actually, for your lab system you shouldn’t choose at all – but use this system for its intended purpose of experimenting with everything you can. On the other hand, there is only so much time in the day, so are there clear choices for starting with a particular language? There are – and I’ve divided my criteria into the following (you may have others). The language (scripting or not) needs to have the following characteristics:

- Data-centric, or at least data-friendly, particularly as it deals with data types

- Ability to read multiple types of data sources

- Supported and popular in the data science community

- Handles large data sets

- Some level of built-in visualizations

Using those criteria, I’ll start with a brief (very brief) introduction on how programming languages interface with data and then move on to a few choices I’ve made for my system. Once again, I won’t have time for examples in this article, but I’ll provide some in the articles that follow.

This is a conceptual section of this article, so stick with me for a moment. These concepts are important for the discussions that follow. Also, because I’m covering a lot of information in just a few paragraphs, I’ll lose some fidelity and exactness in the process. This isn’t intended to be a formal programming class, so feel free to add more detail in the comments if the explanations I give here strike you as incomplete, or worse, incorrect. I’ll provide several links in the References section below if you want more precise information.

Various Programming Languages using a Data Interface

Languages work with data by connecting to or opening a data source to take in data. That can be anything from a prompt waiting at a console for user input to reading a text file or connecting to a relational database engine.

I’ll use the Microsoft .NET suite of languages to frame this conversation, since the concepts diverge quickly based on the architecture you choose. In the series I’ll create on a Linux version of the data laboratory system I’ll cover other frameworks.

In higher-level languages that use Object-Oriented programming (also called Imperative programming), such as C#, whenever you work with data you create a “Class”. A class (as it relates to data) is nothing more than a definition of some data object, such as a pizza. The definition includes a Property of the object, such as the “type of pizza“. Here’s a very simple example of a Pizza class:

|

1 2 3 4 |

public class Pizza { public string typeOfPizza {get; set;} } |

With this simple definition, you can create a new Pizza by “calling” that class name in code and passing the parameters you want to insert (the set part) or read (the get part). That call does not change the Pizza class, it’s merely used to create a brand-new pizza (called an Instance) that you can name whatever you want. In essence, you use the Pizza class as a template to create a new Pizza (perhaps called Customer123Pizza) that has the value typeOfPizza=”The Works”. Pizza is still there, and now there’s another called CustomerPizza123 as well. You can work with CustomerPizza123 and change it in other ways as well, all without bothering the original Pizza.

As a data professional, you want to work with the data in some meaningful way – usually in a tabular layout with rows and columns. But you have a Pizza object sitting there that doesn’t lend itself well to a tabular format.

There are actually a couple of ways to handle the Object-Oriented to Tabular mismatch. In the .NET framework, you can use the ADO.NET libraries (Active Data Objects) for the change. With ADO.NET, you simply create a connection to the data you want, read the data in, work with it, and optionally write it back out again. There are connection types for everything from XML to relational database management systems.

You can also use Language Integrated Query (LINQ) to query the objects using set-based logic, because LINQ works with a hierarchy of objects – in effect, it creates a network of classes. LINQ is useful because you can work not only with relational or file or text data, but any object that has a LINQ interface. You can even join up the objects if there is some dependable key value between them.

Yet another method is to use Microsoft’s Entity Framework (EF), which is a model-based interface to data. EF, along with other products that work in a similar way, allows you to create your data model, and it will generate the programming classes for you.

You can use all of these methods, as well as others I have not described here, to work with data in your experiments. If it sounds complicated, don’t worry – it really isn’t. As you experiment on your system you’ll find that each has strengths and weaknesses for any given type of data or processing need. You should become familiar with all of them in a test environment so that you know when to use each.

References:

Object Oriented Programming Tutorial:

http://www.codeproject.com/Articles/22769/Introduction-to-Object-Oriented-Programming-Concep

Working with ADO.NET:

http://msdn.microsoft.com/en-us/library/h43ks021%28v=VS.71%29.aspx

Working with LINQ:

http://msdn.microsoft.com/en-us/library/vstudio/bb397926.aspx

Working with Entity Framework:

http://msdn.microsoft.com/en-us/data/ef.aspx

Functional Programming and F#

Object-Oriented programming isn’t the only way to write code. Another major paradigm is Functional Programming. OO programming works (as the name implies) with objects, Functional Programming (also as the name implies) works with Functions. As an analogy, you can think of functions as machines that take input, work on it, and return output.

To over-simplify a bit, OO programming focuses on Objects and what you can do to them to change their state – how they currently exist, such as the type of pizza and so on. Functional programming is more concerned with what you want to do to the pizza – the transformation of the data into something else.

That simple difference means that functional programming works very well in data mining, data patterns analysis, data reduction to meaning and more. Also, because functions are less concerned with the data’s state, it lends itself very well to stateless architectures. That’s important because state is the bane of working with large sets of data. Let me explain by way of a very simple example – again, I’ll lose a bit of technical accuracy here but it’s useful to understand the concept.

Assume for a moment you’re at a store and the clerk begins to take your very large order – you’re buying lots of things for a party. The order is a bit complicated. Some things are taxed, you have coupons for other items, and so on. The object-oriented clerk would have to send out each item to another clerk to change the “state” of your order (the object) – the number, discount, and price of items. The final computation is done at the end. The state of the item is primary.

In functional programming, the clerk would take the items and pass them through computations that figure the discount, then on to the coupons, then on to the taxes and finally through the totals. The state isn’t as important, just the calculations. The other advantage here is that you don’t have to work with all of the data at once – you could have lots of clerks working with a smaller part of the data – which means you can scale to a very large set of data to work with by splitting it up.

Microsoft’s functional programming language is called F# (F-Sharp). It works with data in this way, but has two additional advantages: it can also use Object-Oriented paradigms, and has full access to all of the .NET libraries that work with everything from graphics, computations, to everything else that .NET provides.

I mention this language as one to experiment with because it doesn’t make you choose – you can write using OO concepts or Functional Programming, and even both in the same code set. It is designed from the outset to work with large sets of data, and has a wealth of documentation and training around it.

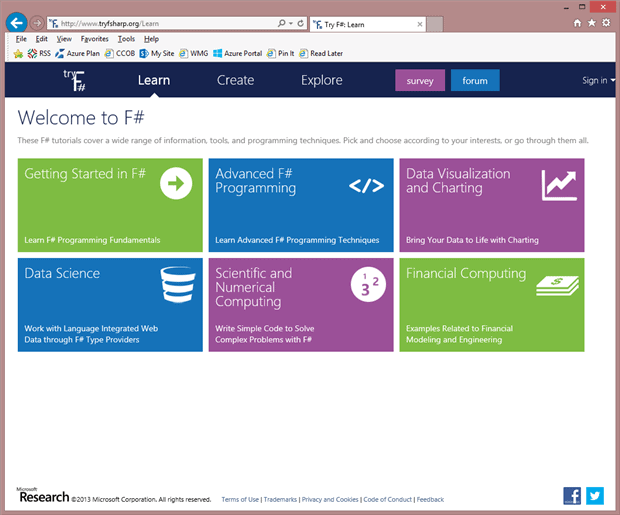

In fact, you don’t even have to install F# to try it out. If you navigate to https://glot.io/new/fsharp you can write code in a browser, take training, and even work with live datasets online.

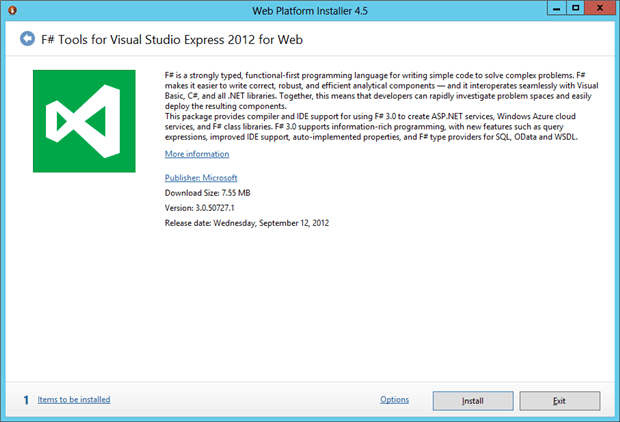

For the data professional, I recommend working through the Learn | Data Science online labs. It will quickly give you a feel for working with data in F#. From there you can download the Visual Studio Express (free) edition that contains F#: http://go.microsoft.com/fwlink/?LinkID=261287

After you install F# (a simple next, next finish process that I won’t detail here) you’ll want to locate the FSI.EXE binary on your lab system at a CMD prompt:

|

1 |

DIR C:\FSI.EXE /S |

This is the F# Interactive prompt. Running this program allows you to work with F# in an immediate mode, similar to scripting. To program with a full Integrated Development Environment, use the Visual Studio installation.

References:

Main F# Site: http://research.microsoft.com/en-us/projects/fsharp/default.aspx

Why use F# over some other language?

http://blogs.msdn.com/b/chrsmith/archive/2008/05/02/f-in-20-minutes-part-i.aspx

… and …

http://blogs.msdn.com/b/chrsmith/archive/2008/05/09/f-in-20-minutes-part-ii.aspx

Reference set for F#:

http://msdn.microsoft.com/en-us/vstudio/hh388569.aspx

Walkthrough of using F# with Open-Data providers:

http://msdn.microsoft.com/library/vstudio/hh156504.aspx

F# for Data Mining:

http://fdatamining.blogspot.com/2010/05/why-f-is-language-for-data-mining.html

Library for working with Data in F#:

https://github.com/tpetricek/FSharp.Data

Using LINQ with F#:

http://blogs.msdn.com/b/dsyme/archive/2006/01/27/fsharpmeetslinqone.aspx

Scripting (Dynamic) Languages

Scripting languages are also useful for the data professional. I’ve already covered the installation of PowerShell, the Microsoft scripting language. Like F#, one of the advantages to working with data in PowerShell is that you have the full range of the .NET libraries. I’ll show examples of that in a future article.

Python

In addition to PowerShell, several other scripting candidates exist for working with data. But one stands out for the data professional: Python. Python was predominantly used in web-based applications, where the web page served as the user interface and Python performed the computation work. As time passed, Python became a very rich scripting language due to two primary factors: it’s easy to learn, and many people wrote “libraries” or pre-defined functions that made it quick to implement to solve a problem. Those two factors also made it the go-to language for today’s data scientists. It allowed them to quickly leverage other scientist’s work in a relatively powerful simple to learn language. That means it meets the criteria of a large, supported, community install base.

Python is not only a scripting language, but fits well with the OO programming paradigm. This power, along with the fact that it runs on multiple operating systems and is an open-source project cements its choice for the data laboratory system’s toolbox.

There are two primary versions of Python – 2 and Python 3. The 2.7 and earlier versions of Python didn’t work well in certain situations, but weren’t easily changed because so many libraries referenced it. A wholesale upgrade in version 3 brought Python up to modern standards, but also caused a fairly significant problem. Because so many libraries were written to version 2 and wouldn’t work in version 3, people were reluctant to switch to the new version. Python became a victim of its own success.

Many of the popular libraries are being ported to version 3, so the way I deal with the choice of version is to ensure the libraries I want to use are available for the version I can work with. The latest version is better; but sometimes the libraries require an earlier version.

The primary libraries I add in to Python are numpy and scipy. These two libraries contain an incredible array of scientific and numeric functions. In fact, the statistics functions included in these two packages have some advantages over using the R scripting language I discussed in the last article – although I still use both.

To install Python, visit http://python.org/, click the Downloads section and select the release you want. Once you’ve completed the installation, you can install numpy and scipy here: http://www.numpy.org/.

References:

I’ve found this to be a great systematic resource for learning Python:

http://learnpythonthehardway.org/

You can also check out the tutorials here:

http://wiki.python.org/moin/BeginnersGuide

There are some interesting examples here on using Python with data:

https://mkaz.blog/code/python-data-science/

Need more data or math libraries?

http://wiki.python.org/moin/NumericAndScientific

This is an interesting commentary on using Python for data scientists:

http://datacommunitydc.org/blog/2013/03/getting-started-with-python-for-data-scientists/

This is an intriguing data visualization program for Python:

http://orange.biolab.si/

In the next installment, I’ll cover programmatic methods and tools that I’ll work with on the laboratory.

Load comments