MS SQL Server 2017 has added to its Advanced Analytics Extension, now called ‘Machine Learning Services ‘, by enabling SQL server to execute Python scripts within TSQL via ‘Machine Learning Services with Python’. This basically provides a way that the database programmer can pass data directly to and from Python. The usefulness of this is not limited to providing machine learning capabilities for data analytics, because Python has many readily-available modules and frameworks to address many problems such as performing heavy computation work with data structures, graphical processing for analysis, networking operations, database operations, web operations or local/network-based file system operations. Obviously, many of these are best done in middleware, but in database systems, there are times when it’s more convenient to have a direct communication to an external system, instead of relying on an external process to perform the task by polling the data source. This makes sense when it is not an issue to have a solution such as this within the database or data tier, and when it does not provide any security concerns.

Here, we will try to demonstrate an example of the use of Python within the Advanced Analytics Extension, showing how a database can trigger an external process to perform an activity on the data that is provided as a parameter. This is designed to take into account issues of security, data reliability and transaction response time.

Use-cases for Python

Some tasks can be done much more easily by calling a Python script from SQL rather than relying on middleware. This is particularly the case where the task is initiated by an event within the database. The task might include

- Sending data to, or receiving it from, network-based systems over TCP/HTTP/SOAP.

- Making use of local platform resources such as the file system, networking or GPU.

- Building real-time integration between one or more systems by using a universal data format such as JSON, XML or YAML.

- Generating data or files by communication with external applications.

Naturally, there are few potential disadvantages

- If your usage of Python requires internet access, there is a risk that data that must be kept secure may be accidently shared over the internet. Any internet access must be carefully regulated by the network.

- A security risk is exposed by allowing execution of Python scripts on the server via ‘external script execution enabled’.

- Resource-intensive Python scripts on the same server can affect the performance of ongoing transactions on large OLTP systems.

Weighing up these advantages and disadvantages, there still seem to be occasions when Python can play a useful role if it can minimize the risks. As an example of this, let’s consider how we can use Python to build a Data cache system for use by the application tier.

Example Solution for Cache

Caching of data can be an effective way of improving application performance. At the cost of storage overhead for the cache, we can make useful performance gains when faced with such things as chatty network communications with the database, and high resource consumption by the database when faced with repetitive queries. When we build a cache infrastructure, we are faced with the common problem of when to refresh the contents of the cache. We tend to adopt the simple solution of rebuilding cache after a certain time interval. However, this is extraordinarily inefficient. It is much better to refresh the cache when the data changes, and refresh only what is changed. We can do this close to real-time, at the point when the data is created, updated or deleted. There are many tools and frameworks available to solve the problem of refresh, but they suffer from the problem of how to determine what has changed in the data and when the changes are committed. The database is best placed of all to be able to do this.

For our caching system, which is available in source here, we will restrict ourselves to the Microsoft stack for everything barring Python itself.

- Microsoft SQL Server 2017(CPT)

- Service Broker to isolate transactional database.

- Python to execute script which can update cached over HTTP (Python 3.5 executable with libraries from the Anaconda distribution)

- .Net 4.5.2

- ASP.Net MVC for our example Web UI

- ASP.Net WebAPI encapsulating cache store for our example solution.

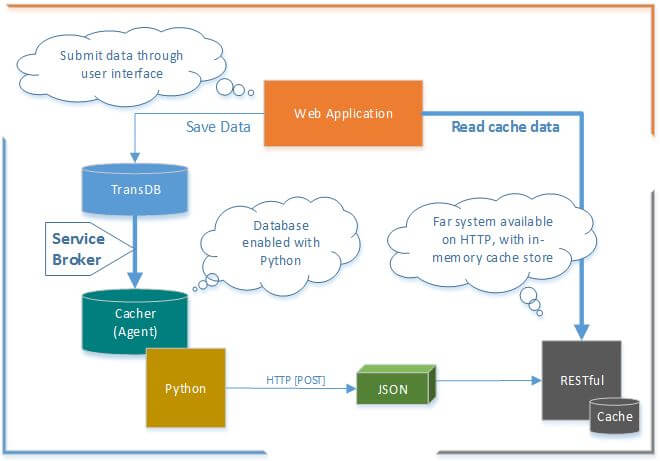

Here is the pictorial representation of our example solution cache system:

- The WebApplication provides a user interface to read and update the data.

- The RESTful.Cache application in our example cache store solution is built with ASP.Net WebAPI2, its content type is JSON. The HTTP-GET operation delivers data from local cache (a static collection).

- MS SQL Server 2017 (CPT) is a database server with a

- TransDB OLTP database, busy processing transactions.

- Cacher an agent database to perform Python script execution, script execution enabled with ‘external scripts enabled’ option turned ON. Refer to the Microsoft.Doc: external scripts enabled Server Configuration Option.

- Service Broker, a reliable messaging framework of SQL server, aids to bridge Cacher-Agent and TransDB. Received messages by Cacher-Agent can be processed to update the cache.

- Python is the integrated scripting language for database system with SQL 2017(CPT).

The architecture of the solution

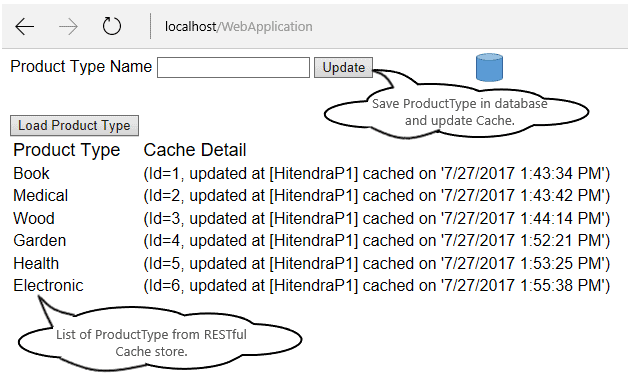

In our solution we will cache the entity ‘Product Type names’ in a RESTful.Cache application and the WebApplication will have a function to create new Product Type entries and read from the RESTful.Cache.

Prerequisite

As an aside, there are some prerequisites and some more information we need to consider.

- The SQL Instance where CacheDB is hosted must have ‘Machine Learning Services with Python’ installed

- To execute a Python script with TSQL in CacheDB, SQL Service MSSQLLaunchpad or SQL Server Launchpad should be running. Refer to Microsoft.Net: Microsoft Machine Learning Services

- Enable external script execution with SP_Configure, refer to Microsoft.Doc: external scripts enabled Server Configuration Option

12sp_configure 'external scripts enabled', 1;RECONFIGURE; - The TransDB and Cacher hosted environment should have a Service Broker endpoint created on its instance, if these are hosted independently on two different SQL instances then each instance should have its own endpoint.

- The TransDB and Cacher databases should have Broker enabled. Refer to Microsoft.Technet: How to: Activate Service Broker Message Delivery in Databases.

-

1234ALTER DATABASE TransDB SET ENABLE_BROKER;GOALTER DATABASE CacheDB SET ENABLE_BROKER;GO

.NET Applications

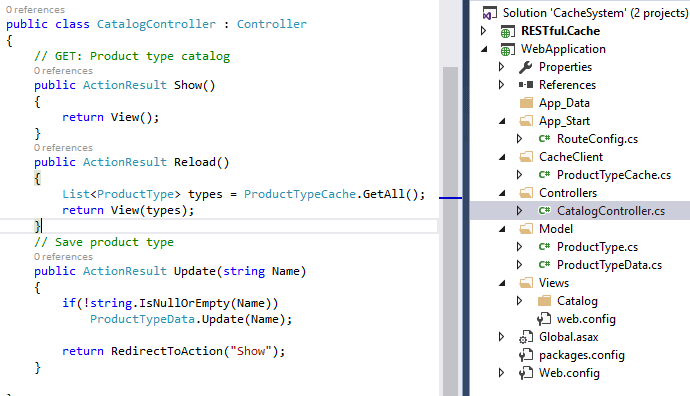

The WebApplication has two main MVC actions; one to update a new entity in TransDB with HTTP verb POST and another action to return list of Product Type from cache with HTTP verb GET.

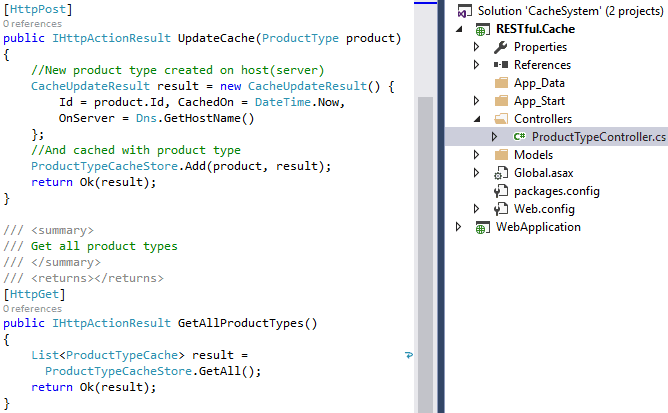

RESTful.Cache has two action methods, one to update cache with the newly-added entity Product Type with HTTP verb POST, and another to get all the cached Product Type from local cache.

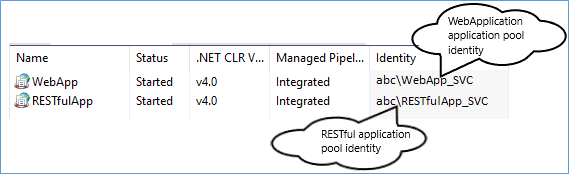

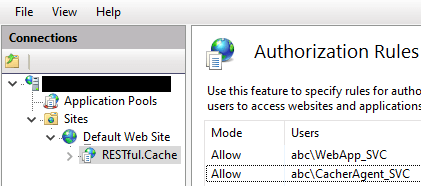

For our example solution, both applications are hosted in IIS under individual application pool identities to keep applications secured. But for the actual system implementation, the hosting environment can be an individual webserver in either an intranet or internet environment.

The RESTful.Cache authorization rule has only two service accounts to process HTTP request i.e.

abc\WebApp_SVC and abc\CacherAgent_SVC. The abc\CacherAgent_SVC service account allows Python script in SQL to reach the application over HTTP to refresh the cache.

The abc\WebApp_SVC user is for the WebApplication having authorization rule mode to allow the accessing of the RESTful.Cache application.

SQL Database and Service Broker

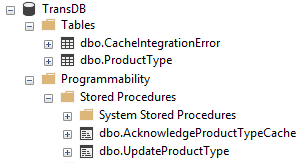

The OLTP database TransDB has a few objects, including tables, stored procedures and Service Broker objects.

For our purposes, the procedure UpdateProductType updates the ProductType table with a new record and the AcknowledgeProductTypeCache procedure is the activation procedure for the CacheIntegration queue, it receives acknowledgement from the target when a message is being processed i.e. from the Cacher database. It also handles exceptions if any, and logs these in the CacheIntegrationError table.

More information on Service Broker can be found at Microsoft.DOC: SQL Server Service Broker

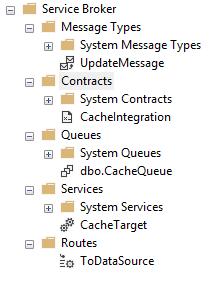

For our example solution, TransDB is a source database which creates update cache message when a new ProductType record is created, a message to perform an action, for that it has UpdateMessage message type, a CacheIntegration contract to send a message with CacheSource service to target the database. The Service has a CacheQueue which is used by the Service Broker component to perform reliable message delivery. The ToCacheTarget route has the information to deliver the message to its target.

To eliminate any chance of increasing the transaction processing time as well as to avoid any security risk on the rest of the data in the transactional database, we will decouple the cache update process by using an agent database, called Cacher database in our example solution. The Service Broker messaging infrastructure will help to connect TransDB and Cacher databases and, event-based message processing will enable us to update the cache storage residing on the network-based system. The Cacher database is playing the role of an agent in order to perform a cache refresh when the update message arrives. It updates cache by executing a Python script..

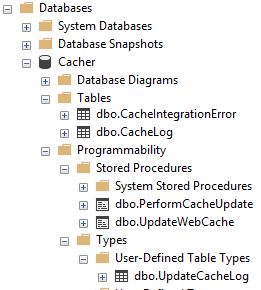

The Cacher database has:

- CacheLog and CacheIntegrationError tables, to keep track of when cache has been refreshed and has records of any errors that may occur during the cache refresh process.

- The PerformCacheUpdate procedure receives the incoming message from TransDB via Service Broker. If the type of the message is UpdateMessage then it executes another procedure, UpdateWebCache, which performs the Python script execution.

- The UpdateWebCache procedure’s execution result is held in a table variable that then gets inserted in the CacheLog table at the end of the message conversation.

- The Procedure also ends the conversation when the received message has either the Error or End message type and, on error type, the exception log is written in the CacheIntegrationError table.

- The UpdateWebCache procedure extracts the Id and Name from the incoming XML message passed as parameter and embeds these values in the Python script text. The script execution result set is a structured table of the type UpddateCacheLog.

The Cacher’s Service Broker objects, mainly UpdateMessage message type and CacheIntegration contract are the same as those of TransDB, CacheQueue has an activation procedure called PerfomCacheUpdate, a service called CacheTarget, and the route has information about TransDB’s service CacheService and the endpoint address.

For our example solution, the maximum queue reader is set to 1 for both queues of databases. This can be increased if required to, for example if data modification is high and you need to increase the cache refresh-rate.

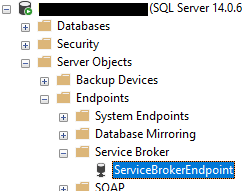

Service Broker Endpoint

For our solution, the databases are hosted on same instance, so both are using same Service Broker Endpoint in order to send and receive messages.

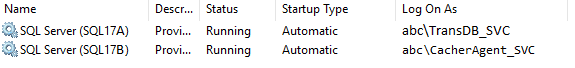

But if we want to host databases on individual instance then each SQL instance’s service account should have a Service Broker endpoint. And both SQL instances should have permission to allow for the sending of messages to each other’s endpoint. The authorization and granting of the connection can be done with the following set of TSQL commands. Note that in messaging infrastructure there is one sender and other side is receiver, as mentioned if SQL instances are part of sender and receiver then each instance should have its own process identity. The picture below is a representation of how each SQL Server is running under its own identity.

This is the SQL code for authorizing and granting endpoint connect to TransDB’s SQL instance service account [identity] in SQL instance of Cacher database.

|

1 2 3 4 |

ALTER AUTHORIZATION ON ENDPOINT::ServiceBrokerEndpoint TO [abc\TransDB_SVC] GO GRANT CONNECT ON ENDPOINT::ServiceBrokerEndpoint TO [abc\TransDB_SVC] GO |

Similarly, here is the code for authorizing and granting endpoint connect to Cacher’s SQL instance service account [identity] in SQL instance of TransDB database.

|

1 2 3 4 |

ALTER AUTHORIZATION ON ENDPOINT::ServiceBrokerEndpoint TO [abc\CacherAgent_SVC] GO GRANT CONNECT ON ENDPOINT::ServiceBrokerEndpoint TO [abc\CacherAgent_SVC] GO |

Python Script

Here is the Python script text, saved as a string in the TSQL variable @UpdateCache. It has the UpdateCache method with logic to perform an HTTP POST call to RESTful.Cache by passing a data object with Name and Id fields, which are received as an input parameter. It Receives a JSON object and returns it as output result of the method to the caller.

At the end of the script, the returned object is transformed into an array, so it can be structured as a SQL result.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

DECLARE @UpdateCache NVARCHAR(MAX) = N' import pandas as PND #data structure package def UpdateCache(name,id): import requests as HTTP #http request package #Perfom HTTP POST to update cache httpRequest = HTTP.post( http://localhost/RESTful.Cache/ProductType/UpdateCache ,{ Name :name, Id :id}) cacheLog = httpRequest.json() return cacheLog #Update cache and build log element log = [UpdateCache( '+ @Name+' ,'+ CAST(@Id as VARCHAR(10)) +')] #Return data frame i.e. table structure from SQL OutputDataSet = PND.DataFrame(data=log) '; |

There are a few things worth noting while working with a Python script in SQL server.

- We can write a continuous script or group them into methods as we have done in this solution. Alternatively, we can create an inline class or create a package and import them in python at the command prompt with a PIP command.

- In this CPT version of MS SQL, import statement able to import packages only at scope where it is placed, hence we can notice that import requests import statement exists inside method UpdateCache and, import statement import pandas exists at top of the script for one line at the end of the script.

- The output object of method UpdateCache is immediately transformed as an array, this way pandas.DataFrame can transform an object into a data structure which SQL server can easily interpret as a table with row and columns.

- The Data structure assigned to the OutputDataSet object is made available in the TSQL execution context by SQL server.

- The last line of procedure dbo.UpdateWebCache, WITH RESULT SETS (AS TYPE dbo.UpdateCacheLog); has a user-defined table type dbo.UpdateCacheLog which helps to keep the underlying columns in order and avoid any mismatch during the process of generating the result set from the received data structure. Another way is to build a mapped column structure within Python and in the result set.

Database Security

TransDB is an OLTP database and we do not want any security breach for any kind of attack on the system, hence with our example solution approach, such database can be hosted on a SQL instance where ‘Machine Learning Services’ is not installed. Cacher is an agent that is able to reach a network-based system, so can stay on the SQL instance where Machine Learning Services is installed. Both SQL instances can have an individual service account identity, which has been authorized to connect to Service Broker endpoints for a specific port only. The other way to securely authenticate communication is by using certificates. For Service Broker endpoints authorization refer to Microsoft.Technet: How to: Allow Service Broker Network Access by Using Certificates (Transact-SQL) for more details.

All components put together

After placing all the components in place, here is our WebApplication that allows us to create a new ProductType and list the same product type from refreshed cache with a RESTful HTTP call. Here behind the wall there are components which are managing data and cache is invisible to the frontend application.

Conclusion

Applications such as e-commerce, healthcare an e-governance can benefit from a good caching implementation. By extending the use of technologies that we are familiar with, we can get a solution that is easy to maintain without the cost of learning a new framework or features.

Our example solution meets what we need because

- When Data is created or modified by one of the OLTP transactions, the system refreshes the network-based cache system for read access.

- It is capable of using asynchronous events to refresh the cache, near to real-time. This will not affect the performance of the original transaction.

- It can draw a security line between the transactional and cache system over HTTP so as to keep data secured in the OLTP database.

- It is enabled with a minimal monitoring feature; a cache log and exception log, which can be enhanced further to build an admin console.

- With the Service Broker messaging component, the solution is flexible enough to trigger or reach the network-based system when asynchronous message processing is happening. In other words, databases are integrated with SQL Service Broker messaging and based on received data, an action is performed to get or send data to external systems which are outside of data tier.

- Isolating, external system triggering events inside dedicated database by using service broker messaging helps to secure transactions and data of OLTP database.

The source code for this project is available here in githib. https://github.com/hi10p/SQL17Python

Load comments