Running Linux SQL Server as a Container

Phil Factor starts a series of articles that will demonstrate the use of temporary SQL Server instances, running in Linux containers, into which we can deploy the latest database build, stocked with data, for development and testing work. This initial article shows how to set up a SQL Server instance inside a Linux Docker container, create some sample databases, and persist data locally.

Running Linux SQL Server as a container in a Windows Virtual Machine is valuable for development work. It saves time in setting up development and test environments. It provides a standard environment for running databases. There are limitations, however. For example, Windows authentication isn’t supported, currently. There are also configuration and operational issues that you’ll need to consider and understand, before running a ‘stateful’ application like SQL Server in a container, in production. Otherwise, you can run into problems. For running SQL Server in a Linux container, on Windows, things are changing quickly; check that Microsoft currently supports the type of use you are planning.

While it’s possible to create containers that have cohabiting applications and an installed working SQL Server instance, it’s currently only for pioneers. This may soon change because this technology is developing rapidly, but most teams using containerized SQL Server in Windows are concentrating on those many aspects of test and development where containers are already useful and familiar tools. For example, there is an obvious requirement to provide temporary SQL Server instances into which a database can be built, mounted or restored, and then run together with the application, for testing.

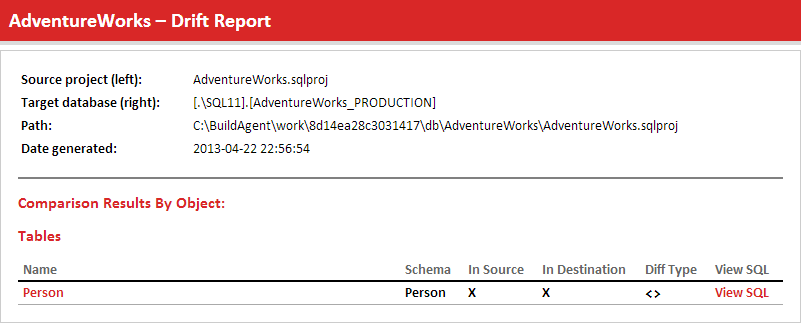

In this series of articles, I’ll be going through the principles, providing sample code, and showing how this is easy to achieve, and automate, with the help of Redgate tools such as SQL Change Automation and SQL Compare.

To get up and running…

You will need access to a machine running Windows 10 and must install Docker Desktop For Windows. It is a good idea to set the Docker Desktop Service to start automatically but with a delay, because this makes it easier for Docker to manage the auto-start of containers, after a restart.

Docker Desktop for Windows provides extensions for the command line or PowerShell that allow us to manipulate, build, ship, and run ‘dockerized’ applications. It uses Windows-native Hyper-V virtualization and networking and will install this if it is not already there. It will run Linux or Windows images. PowerShell is not a requirement but is a useful way of scripting container activity. You can use the command-line just as easily. Just to gain confidence in Docker, it pays to install and run a few simple containers as suggested in the documentation.

Install a SQL Server image into a Docker container

A Docker image is just a file, comprised of several layers, that is used to execute code in a Docker container. It is built from the instructions for a complete and executable version of an application, which relies on the host OS kernel. The major databases are published as images on Docker Hub (See Verified and Official Database Images on Docker Hub).

The first step is to ‘pull’, or download, the official Microsoft SQL Server ‘image’ from Docker. We need to execute this, and all subsequent code, on the Windows machine that will host the container’s VM. This can be anything from your laptop upwards.

|

1 2 |

#Pull, download the image you need docker pull mcr.microsoft.com/mssql/server |

Listing 1

This code will download the image for the latest production version of Linux SQL Server. You can try downloading other SQL Server images simply by adding a tag to the name of the image. For the time being we’ll keep ambition in check.

By obtaining the image, you will have added it to the collection of images you hold locally. This means that Docker will not have to explicitly ‘pull’ the image the first time you create a container from it. if you specify an image in the docker run command without having done so, it will produce an error, but then go on to pull the image and add it to your collection. If you want an updated image, then pull it before you run the container.

We will execute the code below once we have fine-tuned it to our requirements. The -p, or –publish option is used to explicitly map a single port, or range of TCP ports, on the host server (first value) with a TCP port in the container (second value). If you want to run a new instance of SQL Server on a PC that doesn’t already have SQL Server installed, then leave the -p1433:1433, otherwise you will need to change the first port for an unused port on your host machine. Each container will need a separate port.

Now change MyPassword for a real password and each of the name value for the name of the container you want. If you leave out the name, Docker obligingly chooses one for you. The type of product you want is specified in the MSSQL_PID environment variable. I’ve specified the free version, Express, in the example code.

The -h option changes the internal hostname of the container to whatever you specify, rather than a container_id. It isn’t essential but it makes it easier for automated SQL or SMO processes to double-check that they’re in the right place.

|

1 2 3 4 |

docker run -e "ACCEPT_EULA=Y" -e "SA_PASSWORD=MyPassword" ` -e 'TZ=Europe/London' -e 'MSSQL_PID=Express' -p1433:1433 --name MyName ` --restart unless-stopped ` -h MyName -d mcr.microsoft.com/mssql/server |

Listing 2

You may also want to specify the language or specify that SQL Agent is enabled and so on. These and other options are specified through the Linux Environment variables here: Configure SQL Server settings with environment variables on Linux.

If you don’t specify the -h option, then there are easy ways of getting the container_id, if you need it, such as:

|

1 |

$ContainerID=(docker ps --filter "name=MyName" --format "{{.ID}}") |

Once SQL Server has finished initializing, you should have an instance of the latest released version of SQL Server running inside your container. However, beware of those errors where SQL Server will refuse to start. If, for example, your SQL Server password isn’t complex enough, it will tell you in the log and then sulk. If things go wrong, you must inspect the SQL Server logs by using the docker logs <name of container> command.

Here is a way of checking for the word ‘Error ‘, routinely

|

1 |

docker logs adventureworks| where {$_ -like '*Error *'} |

Once everything is working, you should be able to access your containerized instance as normal, via SSMS. If you are using the default port, as in the example above, you just specify the computer. If this is not the first instance, you’ll have changed the first port number in the -p command and will need to use the name of the computer followed by the port, separated by a comma (e.g. LocalHost,1436 or MyFancyServer,1567). If you are doing this on a server on the network, you should be able to access it so long as the port you specified is allowed by the server’s firewall.

Create some sample databases

You can put some sample databases into your containers by copying the files, in this case backup files, using the cp command. This will copy them from your filesystem to the filesystem of the container. I have taken them from a local backup directory.

|

1 2 |

docker cp C:\ Backup\WideWorldImporters-Full.bak Myname:/var/opt/mssql/data docker cp C:\ Backup\AdventureWorks2016.bak Myname:/var/opt/mssql/data |

Listing 3

Once the files are on the container’s filesystem, they will appear as backup devices in SSMS that you can restore into the instance.

All the changes that you make to your SQL Server configuration, as well as the databases you create or restore into the container, are persisted in the container until you remove the container.

Persisting the container data locally

Docker containers could, originally, only support stateless applications, but for some time now have provided permanent storage for containerized applications. Because of this, Linux containers are already a familiar way of provisioning full-stack software applications that include databases such as MySQL and PostgreSQL.

Microsoft have now added Linux SQL Server 2017 and 2019 to that choice. In a Docker container, all SQL Server configuration changes and user database files are persisted in the container, even if you restart the container with docker stop and docker start.

Beware, however, of removing the container with docker rm, because everything in the container is then deleted, including SQL Server and the user databases. To get around this problem, we need to persist the data locally. You can do this simply by using use cp to copy files in the reverse direction, from the filesystem of the container to your local filesystem. This keeps things simple and can avoid any of the potential terrors of the file permission errors that can occasionally crop up, with files inhabiting the strange netherworld within a container on a Linux VM hosted by a Windows machine.

The other way to persist data locally is to use the –volume (-v) option, with the docker run command, so that a local directory on the host file system, or a named volume, is then used for the database files. This allows us to share file directories between the container and the host. You can share or view these files outside of the docker container. They are permanent even if the container is removed.

Mapping to a local directory

SQL Server in a container puts its data in var/opt/msql, so we just need to map this directory using the -v option, specifying an absolute path for a host directory for the value preceding the colon. Docker bind-mounts to the path you specify. After the colon is the container path, which must always be an absolute path, such as /var/opt/mssql, in our case.

Here, we’re just mounting a separate local directory for each SQL Server container that we set up. Docker will ask you to share the drive, and you’ll need to supply your admin password.

|

1 2 3 4 |

docker run -e "ACCEPT_EULA=Y" -e "SA_PASSWORD=MyPassword" ` -e 'TZ=Europe/London' -p1434:1433 --name MyName ` -v 'C:\Config\MyName:/var/opt/mssql' --restart unless-stopped ` -h MyName -d mcr.microsoft.com/mssql/server |

Listing 4

There was a time that the host path had to have an initial forward slash, and each back-slash had to be translated to forward slash, but the recent Docker code is much more tolerant. As always, beware of case sensitivity; if you’re not used to it, it can trip you up.

As soon as the container is running, you should see subdirectories being created for SQL Servers files, the most important one being the data directory with the database files in it. Backups appear in the root of this directory. Copying files into a local directory like this will make it much easier to perform BCP operations and the like, when we’ve got a lot of files.

Using a named volume

Let’s say we want to try out a different version of SQL Server, such as SQL Server 2019. This is a good use of a container. I found that the use of a volume was much more reliable than using an absolute path when working with the SQL Server 2019 images, so we chose that in this article, but the use of the absolute path means that you don’t need the Docker cp command to copy files in or out of the container’s file system.

Once again, change 'MyPassword' for a real password and give the container a name. As we are adding a second instance, we’ve specified a different port on the host but kept a standard SQL Server port on the container. This time, we’re using the -v command to provide the name of a volume.

|

1 2 3 4 |

docker run -e "ACCEPT_EULA=Y" -e "SA_PASSWORD=MyPassword" ` -e 'TZ=Europe/London' -e "MSSQL_PID=Developer" -p1437:1433 --name MyName ` -v 'MyName:/var/opt/mssql' --restart unless-stopped ` -h MyName -d mcr.microsoft.com/mssql/server:2019-CTP2.4-ubuntu |

Listing 5

However, now you’ll still need to use the cp command to persist data locally.

|

1 |

docker cp MyName:/var/opt/mssql/data C:\Config\Myname |

Listing 6

You can share local volumes between containers, but this only has a specialized use where you need to share data between containers.

Using the Linux Bash Shell to communicate with the container

We’ve’ mapped the port of the container with the port of the host computer, so everything based on TDS, such as SSMS or ODBC, will work fine, and your various SQL Server tools will work as expected.

However, you can, if you need, execute the Linux version of the SQL tools such as sqlcmd. This allows you to make queries directly in the container.

|

1 2 3 |

docker exec -it MyName /opt/mssql-tools/bin/sqlcmd ` -S localhost -U SA -P "MyPassword" ` -Q "ALTER LOGIN SA WITH PASSWORD='''MyBetterPassword'" |

Listing 7

You can alternatively execute the commands via an interactive BASH shell. It is also possible to run BASH scripts.

Using Containers for Database Development

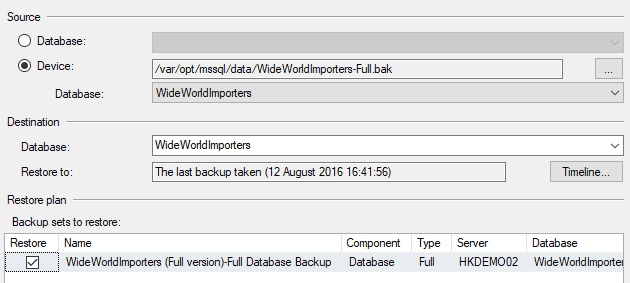

If you need your own copy of the development database to work on, you can run an instance in a container and then copy a backup file of the development database to the shared volume that you specified in the docker run command. This will appear as a backup device in the SSMS ‘restore’ dialog when you add a backup device. You then restore that file and it will appear as a user database in your container. The whole operation can be scripted in PowerShell.

The use of SQL to do the restore is, in my experience rather more successful than using SMO either directly in scripting or indirectly via SSMS.

When you have finished, you can remove the container and the database will persist in the shared directory. It will need to be reattached every time the container is re-created by the docker run command.

Once a containerized SQL Server instance is created and can be reached remotely, then it is simple to keep it up to date with the current build via PowerShell. We’ll be showing ways of doing this in later articles in this series.

Conclusions

There are now a remarkable number of different ways to develop SQL Server databases. One can use the traditional shared development database, or adopt instead the ‘dedicated’ model, with SQL Server instances on each developer machine.

You can provide Azure SQL Database, or network-based SQL Server in a VM. You can use SQL Clone to provision all the copies of the development databases that you could possibly want. Now you also have the alternative of containerization of SQL Server. Which of these ways of serving up a development database should you choose?

I think that this is a trick question. When I am developing databases in a team, I’m likely to use a sandbox server to try out ideas and experiment, even a disposable copy of the database stocked with test data. Alongside this I’ll probably also work on a shared server that represents the trunk.

Every new technology just allows me to work faster if I can find an appropriate use for it. To use an artistic analogy, the introduction of a new paint such as Chromium Green Oxide doesn’t mean that you ditch the previous palette, it just increases the range of possibilities. So it is with database containerization.