Artificial intelligence (AI) is the ability of machines to replicate or enhance human intellect, such as reasoning and learning from experience. Artificial intelligence has been used in computer programs for years, but it is now applied to many other products and services. For example, some digital cameras can determine what objects are present in an image using artificial intelligence software. In addition, experts predict many more innovative uses for artificial intelligence in the future, including smart electric grids.

AI uses techniques from probability theory, economics, and algorithm design to solve practical problems. In addition, the AI field draws upon computer science, mathematics, psychology, and linguistics. Computer science provides tools for designing and building algorithms, while mathematics offers tools for modeling and solving the resulting optimization problems.

Although the concept of AI has been around since at least 1950, when Alan Turing proposed an “imitation game” to assess machine intelligence, it only became practical in recent decades, thanks to the increased availability of computing power and of data to train AI systems.

To understand the idea behind AI, think about what distinguishes human intelligence from that of other creatures: our ability to learn from experience and apply those lessons to new situations. We can do this because of our advanced brainpower; the human brain contains tens of billions of densely interconnected neurons.

Today’s computers don’t match the human biological neural network, not even close. But they have one significant advantage over us: they can analyze vast amounts of data and experience much faster than humans could ever hope to.

AI lets you focus on the most critical tasks and make better decisions based on the data gathered for a given use case. It can be used for complex tasks, such as predicting maintenance requirements, detecting credit card fraud, and finding the best route for a delivery truck. In other words, AI can automate many business processes, leaving you to concentrate on your core business.

Research in the field is concerned with producing machines that automate tasks requiring intelligent behavior. Examples include control, planning and scheduling, answering diagnostic and consumer questions, handwriting recognition, natural language processing, perception, speech recognition, and the ability to move and manipulate objects.

History of AI and how it has progressed over the years

With so much attention on modern artificial intelligence, it is easy to forget that the field is not brand new. AI has had a number of different periods, distinguished by whether the focus was on proving logical theorems or trying to mimic human thought via neurology.

Artificial intelligence dates back to the late 1940s and early 1950s, when computer pioneers like Alan Turing and John von Neumann first started examining how machines could “think.” A significant milestone came in 1956, when the Dartmouth workshop gave the field its name and Allen Newell, Herbert Simon, and Cliff Shaw demonstrated the Logic Theorist, a program that could prove mathematical theorems. The same group followed it with a program called the General Problem Solver (GPS), which aimed to solve any problem that could be stated formally.

Over the next two decades, research efforts focused on applying artificial intelligence to real-world problems. This development led to expert systems, which encode the knowledge of human specialists as rules and apply those rules to new cases. Expert systems aren’t as complex as human brains, but within a narrow domain they can reach conclusions from the facts they are given. Rule-based systems of this kind have been used in fields such as medicine and manufacturing.

A second major milestone came in the mid-1960s with programs like ELIZA, which automated simple text conversations between humans and machines, and with robots like Shakey, which could plan its own movements. These early systems paved the way for later conversational technology, eventually leading to assistants such as Siri and Alexa.

The initial surge of excitement around artificial intelligence lasted about ten years. It led to significant advances in programming language design, theorem proving, and robotics. But it also provoked a backlash against over-hyped claims that had been made for the field, and funding was cut back sharply around 1974.

After a period of reduced progress, interest revived in the 1980s. This revival was primarily driven by reports that machines were becoming competitive with humans at “narrow” tasks like playing checkers or chess, and by advances in computer vision and speech recognition. This time, the emphasis was on building systems that could understand and learn from real-world data with less human intervention.

These developments continued slowly until 1992, when interest began to increase again. First, technological advances in computing power and information storage helped boost interest in research on artificial intelligence. Then, in the mid-1990s, another major boom was driven by considerable advances in computer hardware that had taken place since the early 1980s. The result has been dramatic improvements in performance on several significant benchmark problems, such as image recognition, where machines are now almost as good as humans at some tasks.

The early years of the 21st century were a period of significant progress in artificial intelligence. The first major advance was the rise of deep neural networks that learn their own features from data. After a deep network won the ImageNet image-recognition challenge in 2012, performance improved quickly, and by the middle of that decade the best systems matched or exceeded reported human accuracy on specific benchmarks such as object classification. Over the next few years, researchers improved performance across a range of tasks, including machine translation, thanks to improvements in the underlying technologies.

The second significant advancement in this period was the development of generative model-based reinforcement learning algorithms. Generative models can generate novel examples from a given class, which helps learn complex behaviors from very little data. For example, they can be used to learn how to control a car from only 20 minutes of driving experience.

In addition to these two advances, there have been several other significant developments in AI over the past decade. There has been an increasing emphasis on using deep neural networks for computer vision tasks, such as object recognition and scene understanding. There has also been an increased focus on using machine learning tools for natural language processing tasks such as information extraction and question answering. Finally, there has been a growing interest in using these same tools for speech tasks like automatic speech recognition (ASR) and speaker identification (SID).

Different fields under AI to clear common misconceptions

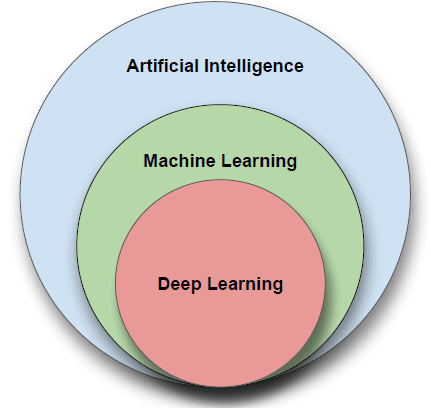

Artificial intelligence is one of the fastest-moving fields of computer science. With all the new technology and research, it is growing so fast that it can be hard to tell what is what. There are many different fields within AI, each with its own algorithms, so it is essential to understand that AI is not a single field but a combination of several.

Artificial Intelligence (AI) is the general term for making computers do things that would require intelligence if done by humans. Two terms that come up constantly are Machine Learning (ML) and Neural Networks (NN). Machine learning is a subfield of AI, and neural networks are one family of machine learning models; each has its own methods and algorithms to help solve problems.

Machine learning

Machine Learning (ML) makes computers learn from data and experience to improve their performance on some task or decision-making process, using statistics and probability theory for this purpose. Machine learning algorithms parse data, learn from it, and make determinations without being explicitly programmed for each case. They are often categorized as supervised or unsupervised. Supervised algorithms learn from labeled examples and apply what they have learned to new data; unsupervised algorithms draw inferences from datasets that have no labels, for example by grouping similar items. In both cases, the algorithms try to capture linear and non-linear relationships in a given set of data, using statistical methods to train a model that can classify or predict from a dataset.
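As a rough illustration of the difference, the sketch below uses only the Python standard library. The data points, labels, and function names are made up for this example, and the two routines (a nearest-neighbor classifier and a two-cluster k-means) are just small stand-ins for the supervised and unsupervised families, not a recommended implementation.

```python
import math

def nearest_neighbor_predict(train_points, train_labels, query):
    """Supervised: return the label of the training point closest to `query`."""
    distances = [math.dist(point, query) for point in train_points]
    return train_labels[distances.index(min(distances))]

def two_means(points, iterations=10):
    """Unsupervised: split unlabeled points into two clusters (k-means, k=2)."""
    # Simple deterministic start: the smallest and largest points.
    centers = [min(points), max(points)]
    clusters = [[], []]
    for _ in range(iterations):
        clusters = [[], []]
        for point in points:
            d0 = math.dist(point, centers[0])
            d1 = math.dist(point, centers[1])
            clusters[0 if d0 <= d1 else 1].append(point)
        # Move each center to the mean of the points assigned to it.
        centers = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster))
            if cluster else centers[i]
            for i, cluster in enumerate(clusters)
        ]
    return clusters

# Hypothetical toy data: two obvious groups of 2-D points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
labels = ["small", "small", "small", "large", "large", "large"]

# Supervised: the labels are used to classify a new point.
predicted = nearest_neighbor_predict(points, labels, (2.0, 2.0))

# Unsupervised: the labels are never consulted; groups emerge from the data.
clusters = two_means(points)
print(predicted, clusters)
```

The supervised routine needs the `labels` list to answer anything at all, while the clustering routine recovers the same two groups without ever seeing it.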

Deep learning

Deep learning is a subset of machine learning that uses multi-layered artificial neural networks to deliver state-of-the-art accuracy in object detection, speech recognition and language translation. Deep learning is a crucial technology behind driverless cars and enables the machine analysis of large amounts of complex data — for example, recognizing the faces of people who appear in an image or video.

Neural networks

Neural networks are inspired by biological neurons in the human brain and are composed of layers of connected nodes called “neurons” that contain mathematical functions to process incoming data and predict an output value. An artificial neural network learns by example, much as humans learn from parents, teachers, and peers. It consists of at least three layers: an input layer, one or more hidden layers, and an output layer. Each layer contains nodes (the neurons) that compute an output from their weighted inputs.
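The layered computation described above can be sketched in a few lines of plain Python. The weights, biases, and the choice of a sigmoid activation here are illustrative assumptions; a real network would learn its weights from training data rather than have them written by hand.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Each neuron takes a weighted sum of its inputs, adds a bias,
    and passes the result through the activation function."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Feed the inputs through every layer in turn."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
hidden_layer = ([[0.5, -0.4], [0.3, 0.8]], [0.0, -0.1])
output_layer = ([[1.2, -0.7]], [0.05])

prediction = network_forward([1.0, 2.0], [hidden_layer, output_layer])
print(prediction)
```

Training consists of adjusting the numbers in `hidden_layer` and `output_layer` until the predictions match known examples; that step is omitted here.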

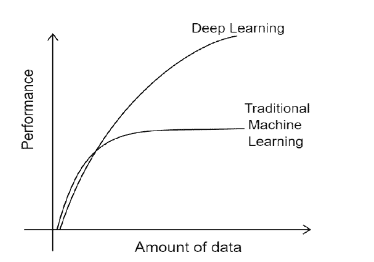

The performance of traditional machine learning models tends to plateau, so that adding more data no longer improves results. Deep learning models generally continue to improve as more data becomes available.

These fields have different algorithms, depending on the use case. For example, we have decision trees, random forests, boosting, support vector machines (SVM), k-nearest neighbors (kNN), and others for machine learning. For neural networks, we have convolutional neural networks (CNNs), recurrent neural networks (RNNs), long short-term memory networks (LSTMs), and more.

However, classifying AI according to its strength and capabilities would mean further subdividing it into “narrow AI” and “general AI.” Narrow AI is about getting machines to do one task really well, like image recognition or playing chess. General AI means devices that can do everything humans can do and more. Today’s research focuses on narrow AI, but many researchers would like machine learning to eventually achieve general AI.

How AI stands out in different industries

AI is a booming technology that has been widely adopted around the world. It has been transforming a variety of sectors for quite some time and is now being applied in almost every industry. This section discusses how AI is impacting service delivery in various sectors.

Self-driving cars are moving closer to reality. Tesla sells cars equipped with the cameras, sensors, and software for advanced driver assistance, although its systems still require an attentive human driver, and companies such as Waymo operate driverless taxi services in limited areas. Trucks may be the next primary target for autonomy: self-driving trucks could enormously impact road safety and infrastructure and save companies money by reducing labor costs.

A few other industries are also implementing AI. For example, in finance, AI helps with forecasting and supports hedge-fund investment decisions. Predictive analytics (or forecasting) applies artificial intelligence using machine learning and statistical techniques to make predictions about future events based on previous data. For example, you can use forecasting to predict product sales, customer demand, or even stock prices. One popular example of predictive analytics is Amazon’s product recommendations engine (also known as “Customers who bought this item also bought”). It uses past purchase data from millions of customers to recommend products based on the users’ preferences.
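To make the idea of forecasting from previous data concrete, here is a minimal sketch that fits a straight-line trend to past sales with ordinary least squares and extends it one month ahead. The sales figures and function names are invented for illustration; production forecasting systems use far richer models and data.

```python
def fit_trend(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return slope, intercept

def forecast(x, slope, intercept):
    """Predict the value at x from the fitted trend line."""
    return slope * x + intercept

# Hypothetical history: units sold in months 1 through 5.
months = [1, 2, 3, 4, 5]
units_sold = [100, 110, 120, 130, 140]

slope, intercept = fit_trend(months, units_sold)
next_month_forecast = forecast(6, slope, intercept)
print(next_month_forecast)
```

The same pattern (learn parameters from past observations, then apply them to an unseen input) underlies the more elaborate models used for demand or price prediction.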

In healthcare, AI is helping doctors to diagnose diseases by gathering data from health records, scanning reports, and medical images. This helps doctors to make faster diagnoses and guide the patient for further tests or prescribe medications. In addition, AI can be used in the treatment process by monitoring patients and alerting their doctors when something goes wrong. According to Forbes, AI will save over 7 million lives in 2035.

In retail, AI does everything from stock management to customer service chatbots. As a result, many businesses are taking advantage of AI to improve productivity, efficiency, and accuracy. In addition, companies find new ways to use AI to make life easier for their customers and employees, from product design to customer service.

The current state of AI-based software systems

Recent advances in AI have led to systems such as Generative Adversarial Networks (GANs), introduced in 2014, which can generate realistic images and other media. Because of these capabilities, some people are concerned that this technology could replace humans in the future.

GANs are just one example of how AI is changing our lives. This section explores more current examples of AI and its applications in software systems, such as GPT-3, DALL·E, and virtual reality/augmented reality (VR/AR).

AI-based software systems are composed of many layers, such as foundation models, advanced algorithms, and automated reasoning tools. Some of the most popular AI-based systems built on these layers include GPT-3, DALL·E, AlphaGo, and RoBERTa.

DALL·E and GPT-3 are large-scale models that have achieved remarkable results in image generation and natural language processing (NLP), respectively.

GPT-3 is an NLP model based on a deep learning architecture called the transformer. It was published in 2020 and trained on a large corpus of text drawn from Common Crawl and other sources. Most of that text is in English, and the model produces output by continuing the text it is given as input. Given suitable prompts or examples, it can perform a wide range of language tasks, such as generating text, translating between languages, answering questions, and solving simple math problems.

The DALL·E model is an image generator that combines a transformer with a deep learning technique called a variational autoencoder (VAE), which is used to compress images into tokens. It was published in 2021 and trained on a large dataset of images paired with text, so that it can produce images from text descriptions supplied as input. We can use DALL·E to generate images that match captions given by users. Both models were developed by OpenAI.

DeepMind created AlphaGo, a program that plays the ancient board game Go. Go has simpler rules than chess but is much more complex to play, because there are far more possible moves per turn. AlphaGo first learned from records of human expert games and then used reinforcement learning to improve over time by playing against itself repeatedly. In 2016 it defeated Lee Sedol, one of the world’s top professional players, four games to one.

RoBERTa is a language model from Facebook AI Research (FAIR) that uses deep learning techniques to solve problems in natural language processing (NLP), such as sentence classification and question answering.

The Future of AI. What to expect from AI in the next few years or decades

Artificial intelligence has come a long way, but it’s about to make a huge leap. Artificial general intelligence (AGI), the kind of AI capable of doing any intellectual task that a human being can do, is still a ways off, but we’re already starting to see plenty of progress in other areas of AI. Here’s what you can expect soon:

Artificial Intelligence will make more jobs obsolete as it takes over more and more tasks

The reason is simple: if you can replace one person with an AGI system, you are not limited to running it on one computer; you can spread the work across thousands or millions of computers. That’s only possible because a general AI system could learn from past experience and improve itself, meaning that it wouldn’t have to be reprogrammed for every new task. In principle, such a system might not need humans at all: once it had learned enough, it could design its own machines or find ways to automate entire industries.

The advent of AI is transforming the business landscape and changing people’s lives for the better. In the coming years, most industries will see a significant transformation due to new-age technologies like cloud computing, Internet of Things (IoT), and Big Data Analytics. All these factors profoundly influence how businesses operate today and are also finding applications in other areas like military, healthcare, and infrastructure development.

To build an engaging metaverse that appeals to millions of users who want to learn, create, and inhabit virtual worlds, AI must be used to enable realistic simulations of the real world. People need to feel immersed in the environments they participate in. AI is helping to achieve this reality by making objects look more realistic and enabling computer vision so users can interact with simulated objects using their body movements.

Concerns surrounding the advancement and usage of AI

AI is a very powerful idea, but it’s not magic. The key thing to remember about AI is that it learns from data. The model and algorithm underneath are only as good as the data put into them. This means that data availability, bias, improper labeling, and privacy issues can all significantly impact the performance of an AI model.

Data availability and quality are critical for training an AI system. Some of the biggest concerns surrounding AI today relate to potentially biased datasets that may produce unsatisfactory results or exacerbate gender/racial biases within AI systems. For example, when using deep learning models (e.g., neural networks), the training process can introduce bias into the model if a biased dataset is used during training.

Other machine learning models (e.g., random forests) are not immune either. Any model fitted to skewed data can reproduce that skew: if the historical decisions in a dataset were driven largely by a single variable (e.g., gender), a model trained on them will tend to rely on that variable or on other variables correlated with it. Choosing a different algorithm does not remove the problem; the data itself has to be examined and corrected.
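The way a skewed dataset carries over into a model can be shown with a deliberately simple sketch. The "model" below just memorizes the most common historical outcome for each group; the records, group names, and function name are hypothetical and chosen only to make the effect visible.

```python
from collections import Counter, defaultdict

def train_group_majority(records):
    """Learn, for each group, the most common outcome in the historical data."""
    outcomes = defaultdict(Counter)
    for group, outcome in records:
        outcomes[group][outcome] += 1
    return {
        group: counts.most_common(1)[0][0]
        for group, counts in outcomes.items()
    }

# Hypothetical past decisions: group A was mostly approved, group B mostly rejected.
history = (
    [("A", "approve")] * 8 + [("A", "reject")] * 2
    + [("B", "approve")] * 3 + [("B", "reject")] * 7
)

model = train_group_majority(history)
print(model)
```

Nothing in the code mentions fairness or unfairness; the learned rule simply mirrors the imbalance in `history`, which is why auditing the training data matters more than the choice of algorithm.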

Other concerns need to be taken into account with the advancement and usage of AI. These include computational power and privacy, particularly for sensitive data such as health records. People’s data is needed to develop models, but how do we obtain such data given how strictly health data needs to be protected?

As artificial intelligence becomes more common, it’s only natural that there are increasing requirements for processing power. As a result, AI researchers use supercomputers to develop algorithms and models on a massive and complex scale.

This is especially true of deep learning (DL), a type of machine learning that uses multi-layered neural networks to recognize patterns in large data sets like images or sound. The main issue with DL is that it requires enormous computational power. To train a neural network, you need to feed vast amounts of data into the system (for example, thousands or millions of pictures) and then let it figure out how to tell one from another on its own. This training process is complex, laborious, and computationally expensive. Model development can take days or even weeks on a single high-end GPU, and the largest models are trained on clusters of many accelerators. Running a trained model is usually far cheaper than training it, but serving a large model to many users still demands substantial hardware. Google’s investments in TPUs (Tensor Processing Units), chips designed specifically for machine learning workloads, are an attempt to address this problem.

Another source of concern in the development of AI is how automated systems will ultimately be used. For example, should we consider holding corporations responsible for the actions of intelligent machines they develop? Or should we consider holding machine developers accountable for their work?

Conclusion

Artificial intelligence (AI) is the intelligence of machines and the branch of computer science that aims to create it. AI is one of today’s most influential technologies and will continue to be a significant factor in various industries for years to come. As AI systems become more advanced, they are poised not only to disrupt multiple industries but also to raise concerns about how we should handle such power.

This field has evolved a great deal over the years. It has gone from being a subject of popular science fiction to a significant part of our lives today. By examining AI from its past, it is possible to better understand its present and predict its future, as we have done in this article.
