If you haven’t migrated your workloads to a managed database platform yet, you’re probably still relying on SQL Server Agent for various maintenance and other scheduled tasks. Most of the time, these processes just work. But when it’s time to troubleshoot, it can be cumbersome to get to the root of some problems. In this post, I’ll share some ideas to help you minimize the level of annoyance and tedium when you have to figure out what went wrong with the execution of a job.

Recently, I was investigating a job that ran a stored procedure against multiple databases in sequence, and ultimately failed. Here was the output of the job step as seen in Management Studio’s View Job History dialog:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 |

Executed as user: sa. ...ation. [SQLSTATE 01003] (Message 8153) Warning: Null value is eliminated by an aggregate or other SET operation. [SQLSTATE 01003] (Message 8153) Warning: Null value is eliminated by an aggregate or other SET operation. [SQLSTATE 01003] (Message 8153) Nonqualified transactions are being rolled back. Estimated rollback completion: 0%. [SQLSTATE 01000] (Message 5060) Nonqualified transactions are being rolled back. Estimated rollback completion: 100%. [SQLSTATE 01000] (Message 5060) ... lots more of that ... Warning: Null value is eliminated by an aggregate or other SET operation. [SQLSTATE 01003] (Message 8153) Warning: Null value is eliminated by an aggregate or other SET operation. [SQLSTATE 01003] (Message 8153) Nonqualified transactions are being rolled back. Estimated rollback completion: 0%. [SQLSTATE 01000] (Message 5060) Nonqualified transactions are being rolled back. Estimated rollback completion: 100%. [SQLSTATE 01000] (... The step failed. |

This wall of text was absolutely useless. The reason? The table dbo.sysjobhistory stores the output in the message column, and this column is nvarchar(4000). If your output is longer than 4,000 characters, then all you’ll see here is a subset. In no case so far has that subset contained the important information I was looking for.

While it is the quick and easy way in a lot of cases, we need to stop relying only on msdb and SSMS to troubleshoot jobs because, sometimes, it isn’t enough.

Log output to a file

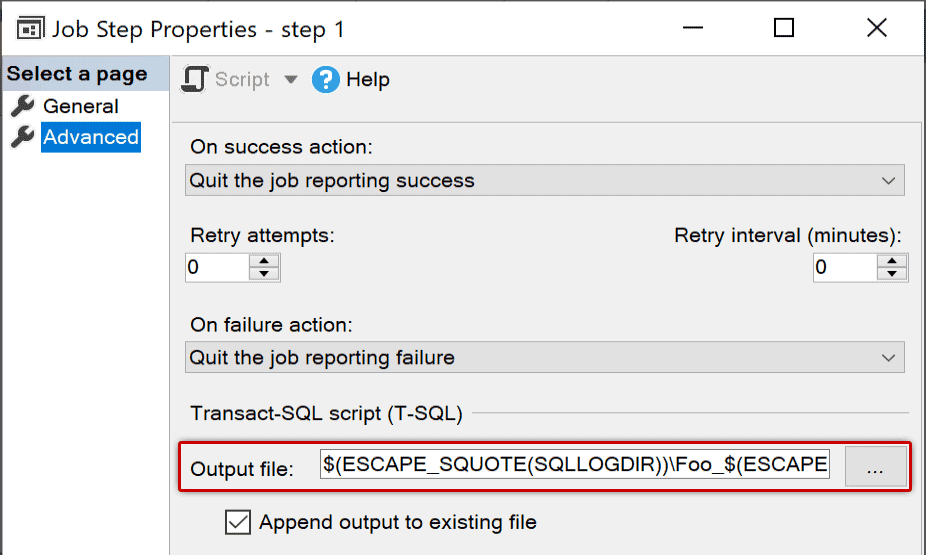

Thankfully, we also log step output to a file, especially when there is any kind of loop (like backing up all databases). When the output might exceed the capacity of agent history, you can send the output to files by going to the Advanced tab of any job step’s Properties dialog and populating an Output File:

Reviewing the output file is much easier and does not have any size limits; it is also easier to script and automate and build parsing tools around. And you can standardize this for all jobs, perhaps to feed the files into an org-wide system like Datadog.

Some people shy away from this option because you have to hard code the path and file name, and they have very different configurations from server to server, making it harder to automate. But this entry supports tokens, so you can tokenize everything from the local SQL Server log directory and the current date and time to the job and step names and IDs. For example, this path:

|

1 2 3 4 5 6 |

$(ESCAPE_SQUOTE(SQLLOGDIR))\ $ESCAPE_SQUOTE(JOBNAME))_$(ESCAPE_SQUOTE(JOBID)) _$(ESCAPE_SQUOTE(STEPID))_$(ESCAPE_SQUOTE(STRTDT)) _$(ESCAPE_SQUOTE(STRTTM)).txt |

Might resolve to:

|

1 2 3 4 |

C:\Program Files\Microsoft SQL Server\150\Log\ JobA_0x0A15AF54FEEECC4BB9A662C8C288D178_1_20240215_123456.txt |

Tokens are a pretty big topic on their own, but you can get a start in the official docs here:

One thing you’ll want to make sure is that the SQL Server Agent account has sufficient permissions, including the important SeAssignPrimaryTokenPrivilege right; see this article for more info:

Without these permissions, the job will still work, but the file will not appear. If you have set an output file but the file never shows up, you can troubleshoot this by reviewing SQL Server Agent’s own error log.

One other note: SQL Server Agent won’t clean up these files for you, so they will pile up. This may become important as systems age, particularly if your jobs have a lot of output.

What else can we do?

Even when you log output to a file, it can still be tedious scrolling through a lot of noise in the log file. You can reduce the noise there with a few techniques:

- If your procedure has a lot of intentional debugging output, use a

@debugflag to print the output by default, but override it from the job step. Or, at least, only output the bad stuff. Or suppress all output by default and only turn it on when really debugging. Some useful tidits from Erik Darling here. - Use

SET NOCOUNT ON;to eliminateDONE_IN_PROCmessages (liken row(s) affected). I talked aboutNOCOUNThere. - For

DBCCcommands that support it, use theWITH NO_INFOMSGSargument.

ANSI_WARNINGS

One of the items you might think is missing from the previous list is ANSI_WARNINGS – this was intentional, as I wanted to treat this option separately. The output I showed above had the following:

|

1 2 3 4 |

Warning: Null value is eliminated by an aggregate or other SET operation. |

You can completely suppress all of this output with the following:

|

1 2 3 |

SET ANSI_WARNINGS OFF; |

But you need to be careful with this. Some code may suddenly break, particularly if you use linked servers. I added this line to the stored procedure I was troubleshooting, and the job then failed with:

|

1 2 3 4 5 6 |

[SQLSTATE 01000] (Error 0) Heterogeneous queries require the ANSI_NULLS and ANSI_WARNINGS options to be set for the connection. This ensures consistent query semantics. Enable these options and then reissue your query. |

I was reminded of this one the hard way.

On the other end

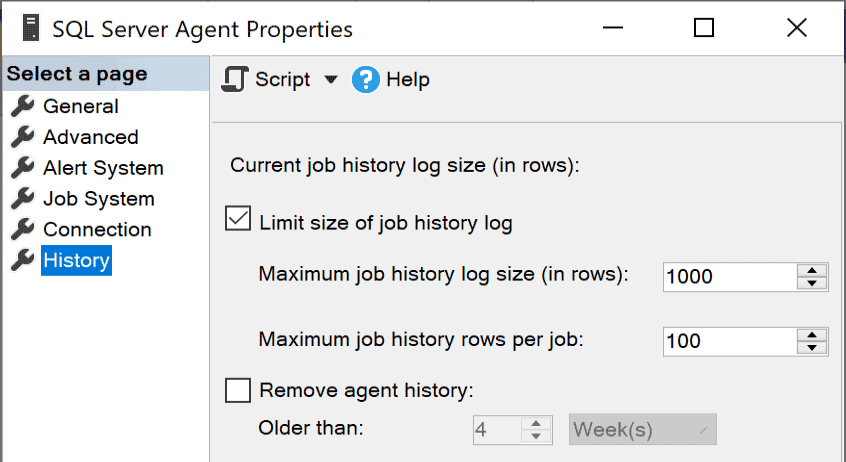

Sometimes the problem isn’t that there is job step history that is incomplete; it’s that there isn’t enough job history available at all. While logging can give you greater control over both what is in job history and how long it’s kept in those files, you may also want to optimize how much history is kept in msdb. You can control this instance-wide using the following SQL Server Agent Properties dialog:

If you have a complex system with some jobs that run every minute and others that run weekly or monthly, this is a very generic brush that will mean some jobs keep way too much history, and others roll away almost immediately. You can be more granular by writing your own process to manage history per job or even to only keep history for job executions that had steps that failed; I wrote about this technique in great detail in “Manage SQL Server Agent History More Effectively.”

Conclusion

There are a lot of little ways you can make reviewing and troubleshooting job history easier and more sensible. Logging job output to a file is an approach that has a huge payoff and is very easy to automate. What techniques have you used to wrangle SQL Server Agent?

Load comments