Once upon a time, there was a version of SQL Server that had no row locks. The smallest lock was the page lock: every time you wanted to lock a record, the entire page was locked.

At that time we were between the devil and the deep blue sea. If we chose a clustered index with an ascending key, we would create what was called a hot spot: all the records would be inserted on the same page, creating a bottleneck. On the other hand, if we created a clustered index with a non-ascending key, we would suffer from index fragmentation and page splits, and it was a real admin headache to find the fill factor for each index that would carry us from one re-index job to the next without too many page splits. (This article explains OPTIMIZE_FOR_SEQUENTIAL_KEY, a new SQL Server 2019 feature that helps with this issue.)

When SQL Server 7 was launched (we haven’t reached the year 2000 yet, be patient, please), we finally got the row locks we had dreamed about for so long! With them, the hot spots were gone, and creating a clustered index with an ascending key became the best practice for avoiding index fragmentation, page splits and a lot of admin tasks.

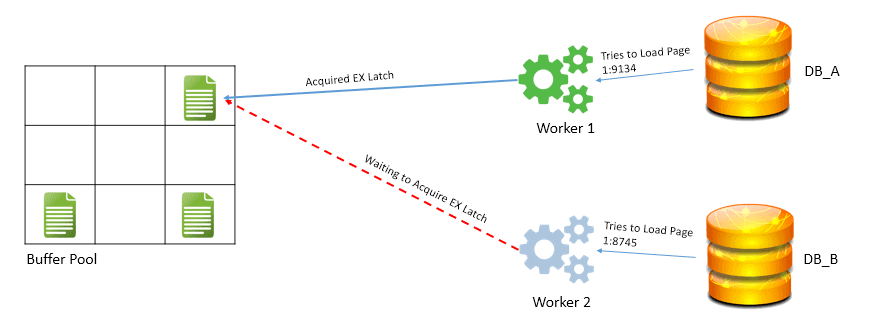

Underneath, the active pages were cached in memory. During a transaction the update happened in memory (until the commit, which forces the log flush), so the concurrency moved into memory as well. A new server monster was released: PAGELATCH_EX. It’s not a lock; it’s SQL Server’s internal concurrency control over the use of a resource, in this case a page. It is far lighter than the locks and hot spots we had before SQL Server 7. However, the bottleneck was still there. Lighter, impossible to notice in small workloads, but still there.
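To make this latch a little less abstract, here is a minimal sketch of how you might catch sessions waiting on it at this very moment. It assumes you have permission to read the server's dynamic management views, and it only shows what is waiting while the query runs, so an empty result on a quiet server means nothing.

```sql
-- Sessions currently waiting on an exclusive page latch.
-- wait_resource identifies the page as database_id:file_id:page_id.
SELECT session_id,
       wait_type,
       wait_time,      -- milliseconds spent in the current wait
       wait_resource
FROM sys.dm_exec_requests
WHERE wait_type = N'PAGELATCH_EX';
```

If many sessions keep showing the same page in wait_resource during a heavy insert workload, that is a hint (not proof) that they are all queuing for the last page of an index.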

Using our time machine, we can jump to SQL Server 2014. The internet and the growth in the amount of data were making the bottleneck visible again, so Microsoft created an alternative: In-Memory tables. The in-memory tables eliminated the latches entirely, but the limitations were severe; the first version didn’t even accept a table with foreign keys. It was more a feature for data ingestion than for the main tables.

Now, in 2019, Microsoft has decided to teach us a bit more about this bottleneck while also improving some workloads that may be suffering from it. What was called a hot spot in the past is today called last page insert contention.

Microsoft is now addressing a problem called a convoy: sometimes the active thread takes longer than it should to release the latch on the last page. A thread doing some extra work, the creation of a new page (which is also counted as a page split), or other activities can hold up the threads queued behind it and drag down the throughput of the data.

For us, the technical solution is simple: if we suffer from this problem, we can apply the OPTIMIZE_FOR_SEQUENTIAL_KEY option to an index and that’s it, the magic is done.
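As a rough illustration of what applying the option looks like, the sketch below creates a table with an ascending clustered key, turns the option on for its index, and then lists the indexes that have it enabled. The table, column and constraint names (dbo.Orders, PK_Orders and so on) are hypothetical, made up only for this example, and the script assumes SQL Server 2019 or later.

```sql
-- Hypothetical table with an ever-increasing clustered key.
CREATE TABLE dbo.Orders
(
    OrderId   INT IDENTITY(1,1) NOT NULL,
    OrderDate DATETIME2 NOT NULL,
    CONSTRAINT PK_Orders PRIMARY KEY CLUSTERED (OrderId)
);

-- Turn the option on for the existing index.
ALTER INDEX PK_Orders ON dbo.Orders
    SET (OPTIMIZE_FOR_SEQUENTIAL_KEY = ON);

-- List the indexes in the current database that have the option enabled.
SELECT OBJECT_NAME(object_id) AS table_name,
       name                   AS index_name
FROM sys.indexes
WHERE optimize_for_sequential_key = 1;
```

The same option can also be given in the WITH clause when the index or constraint is created, for example `WITH (OPTIMIZE_FOR_SEQUENTIAL_KEY = ON)`. It is off by default, and it is worth enabling only on indexes that really do suffer from last page insert contention.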

Underneath, what’s happening is an unfair thread distribution: SQL Server tries to identify whether a thread will keep the page latch for longer than it should and, if that’s the case, leaves this thread for last, trying to keep a constant throughput on the data insertion.
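When the option is on, the threads that SQL Server holds back are reported under their own wait type, BTREE_INSERT_FLOW_CONTROL. A minimal sketch for comparing it with the page latch waits follows; keep in mind that these counters are server-wide and cumulative since the last restart (or since the wait statistics were cleared), so they show a trend rather than the behavior of one particular index.

```sql
-- Flow-control waits next to the page latch waits they are meant to relieve.
SELECT wait_type,
       waiting_tasks_count,
       wait_time_ms
FROM sys.dm_os_wait_stats
WHERE wait_type = N'BTREE_INSERT_FLOW_CONTROL'
   OR wait_type LIKE N'PAGELATCH[_]%'
ORDER BY wait_time_ms DESC;
```

Seeing BTREE_INSERT_FLOW_CONTROL grow after enabling the option is expected; what matters is whether the insert throughput of the workload actually improves.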

Sometimes history teaches us. Hot spots, PAGELATCH_EX, convoys: different levels of the same problem.

You can read a lot more about OPTIMIZE_FOR_SEQUENTIAL_KEY, convoys and all the details here
