So, we’ve looked at how a dynamic call is represented in a compiled assembly, and how the dynamic lookup is performed at runtime. The last piece of the puzzle is how the resolved method gets invoked, and that is the subject of this post.

Invoking methods

As discussed in my previous posts, doing a full lookup and bind at runtime each and every single time the callsite gets invoked would be far too slow to be usable. The results obtained from the callsite binder must to be cached, along with a series of conditions to determine whether the cached result can be reused.

So, firstly, how are the conditions represented? These conditions can be anything; they are determined entirely by the semantics of the language the binder is representing. The binder has to be able to return arbitary code that is then executed to determine whether the conditions apply or not.

Fortunately, .NET 4 has a neat way of representing arbitary code that can be easily combined with other code – expression trees. All the callsite binder has to return is an expression (called a ‘restriction’) that evaluates to a boolean, returning true when the restriction passes (indicating the corresponding method invocation can be used) and false when it does’t. If the bind result is also represented in an expression tree, these can be combined easily like so:

|

1 2 3 |

if ([restriction is true]) { [invoke cached method] } |

Take my example from my previous post:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

public class ClassA { public static void TestDynamic() { CallDynamic(new ClassA(), 10); CallDynamic(new ClassA(), "foo"); } public static void CallDynamic(dynamic d, object o) { d.Method(o); } public void Method(int i) {} public void Method(string s) {} } |

When the Method(int) method is first bound, along with an expression representing the result of the bind lookup, the C# binder will return the restrictions under which that bind can be reused. In this case, it can be reused if the types of the parameters are the same:

|

1 2 3 |

if (thisArg.GetType() == typeof(ClassA) && arg1.GetType() == typeof(int)) { thisClassA.Method(i); } |

Caching callsite results

So, now, it’s up to the callsite to link these expressions returned from the binder together in such a way that it can determine which one from the many it has cached it should use. This caching logic is all located in the System.Dynamic.UpdateDelegates class. It’ll help if you’ve got this type open in a decompiler to have a look yourself.

For each callsite, there are 3 layers of caching involved:

- The last method invoked on the callsite.

- All methods that have ever been invoked on the callsite.

- All methods that have ever been invoked on any callsite of the same type.

We’ll cover each of these layers in order

Level 1 cache: the last method called on the callsite

When a CallSite<T> object is first instantiated, the Target delegate field (containing the delegate that is called when the callsite is invoked) is set to one of the UpdateAndExecute generic methods in UpdateDelegates, corresponding to the number of parameters to the callsite, and the existance of any return value.

These methods contain most of the caching, invoke, and binding logic for the callsite. The first time this method is invoked, the UpdateAndExecute method finds there aren’t any entries in the caches to reuse, and invokes the binder to resolve a new method.

Once the callsite has the result from the binder, along with any restrictions, it stitches some extra expressions in, and replaces the Target field in the callsite with a compiled expression tree similar to this (in this example I’m assuming there’s no return value):

|

1 2 3 4 5 6 7 8 9 10 11 12 |

if ([restriction is true]) { [invoke cached method] return; } if (callSite._match) { _match = false; return; } else { UpdateAndExecute(callSite, arg0, arg1, ...); } |

Woah. What’s going on here? Well, this resulting expression tree is actually the first level of caching. The Target field in the callsite, which contains the delegate to call when the callsite is invoked, is set to the above code compiled from the expression tree into IL, and then into native code by the JIT. This code checks whether the restrictions of the last method that was invoked on the callsite (the ‘primary’ method) match, and if so, executes that method straight away.

This means that, the next time the callsite is invoked, the first code that executes is the restriction check, executing as native code! This makes this restriction check on the primary cached delegate very fast.

But what if the restrictions don’t match? In that case, the second part of the stitched expression tree is executed. What this section should be doing is calling back into the UpdateAndExecute method again to resolve a new method. But it’s slightly more complicated than that. To understand why, we need to understand the second and third level caches.

Level 2 cache: all methods that have ever been invoked on the callsite

When a binder has returned the result of a lookup, as well as updating the Target field with a compiled expression tree, stitched together as above, the callsite puts the same compiled expression tree in an internal list of delegates, called the rules list. This list acts as the level 2 cache.

Why use the same delegate? Stitching together expression trees is an expensive operation. You don’t want to do it every time the callsite is invoked. Ideally, you would create one expression tree from the binder’s result, compile it, and then use the resulting delegate everywhere in the callsite.

But, if the same delegate is used to invoke the callsite in the first place, and in the caches, that means each delegate needs two modes of operation. An ‘invoke’ mode, for when the delegate is set as the value of the Target field, and a ‘match’ mode, used when UpdateAndExecute is searching for a method in the callsite’s cache. Only in the invoke mode would the delegate call back into UpdateAndExecute. In match mode, it would simply return without doing anything.

This mode is controlled by the _match field in CallSite<T>. The first time the callsite is invoked, _match is false, and so the Target delegate is called in invoke mode. Then, if the initial restriction check fails, the Target delegate calls back into UpdateAndExecute. This method sets _match to true, then calls all the cached delegates in the rules list in match mode to try and find one that passes its restrictions, and invokes it.

However, there needs to be some way for each cached delegate to inform UpdateAndExecute whether it passed its restrictions or not. To do this, as you can see above, it simply re-uses _match, and sets it to false if it did not pass the restrictions. This allows the code within each UpdateAndExecute method to check for cache matches like so:

|

1 2 3 4 5 6 7 8 9 10 11 |

foreach (T cachedDelegate in Rules) { callSite._match = true; cachedDelegate(); // sets _match to false if restrictions do not pass if (callSite._match) { // passed restrictions, and the cached method was invoked // set this delegate as the primary target to invoke next time callSite.Target = cachedDelegate; return; } // no luck, try the next one... } |

Level 3 cache: all methods that have ever been invoked on any callsite with the same signature

The reason for this cache should be clear – if a method has been invoked through a callsite in one place, then it is likely to be invoked on other callsites in the codebase with the same signature.

Rather than living in the callsite, the ‘global’ cache for callsite delegates lives in the CallSiteBinder class, in the Cache field. This is a dictionary, typed on the callsite delegate signature, providing a RuleCache<T> instance for each delegate signature. This is accessed in the same way as the level 2 callsite cache, by the UpdateAndExecute methods. When a method is matched in the global cache, it is copied into the callsite and Target cache before being executed.

Putting it all together

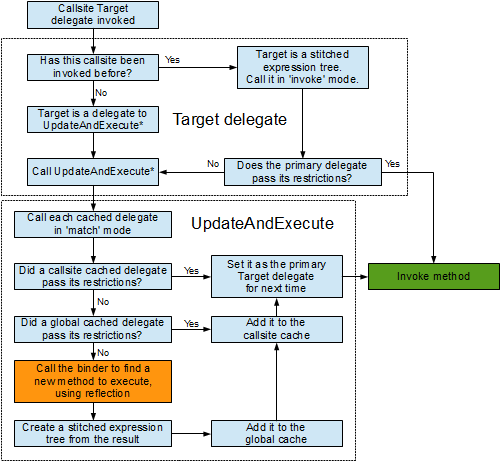

So, how does this all fit together? Like so (I’ve omitted some implementation & performance details):

That, in essence, is how the DLR performs its dynamic calls nearly as fast as statically compiled IL code. Extensive use of expression trees, compiled to IL and then into native code. Multiple levels of caching, the first of which executes immediately when the dynamic callsite is invoked. And a clever re-use of compiled expression trees that can be used in completely different contexts without being recompiled. All in all, a very fast and very clever reflection caching mechanism.

Load comments