Testing Databases: What’s Required?

An overview of the challenges of database testing and test data management, reviewing the different types of database test that need to run during development work, what sort of test data they require, and how to manage all the required data sets, during development, in a way that allows rapid cycles of parallel testing.

This article is part of a series on the requirements and practicalities of database testing, with Flyway:

- Testing Databases: What’s Required?

- Planning a Database Testing Strategy for Flyway

- Test-Driven Development for Flyway Using Transactions

- Basic Functional Testing for Databases using Flyway

- Running Unit and Integration Tests during Flyway Migrations

- Performance Testing Databases with Flyway and PowerShell

- Running Structured Database Tests in Flyway

- Running Database Assertion Tests with Flyway

Also relevant are the series of related articles on Test Data Management.

Database testing, and especially the automation of database tests, is one of the most technically-challenging and interesting aspects of database development, but is both remarkably unpopular and often poorly executed, even in Agile developments. Although it is done well in many industries, there have been spectacular recent failures, most notably in the UK’s TSB Banking disaster.

Initial tests are derived from the business model in that they aim to ensure that the application does what the business has specified. Tests are created and evolved at the same time as development, and tests are added throughout development, as the team’s understanding of the business domain is refined. Alongside the tests themselves, the team need a strategy for generating and managing each of the data sets required to support the various types of tests.

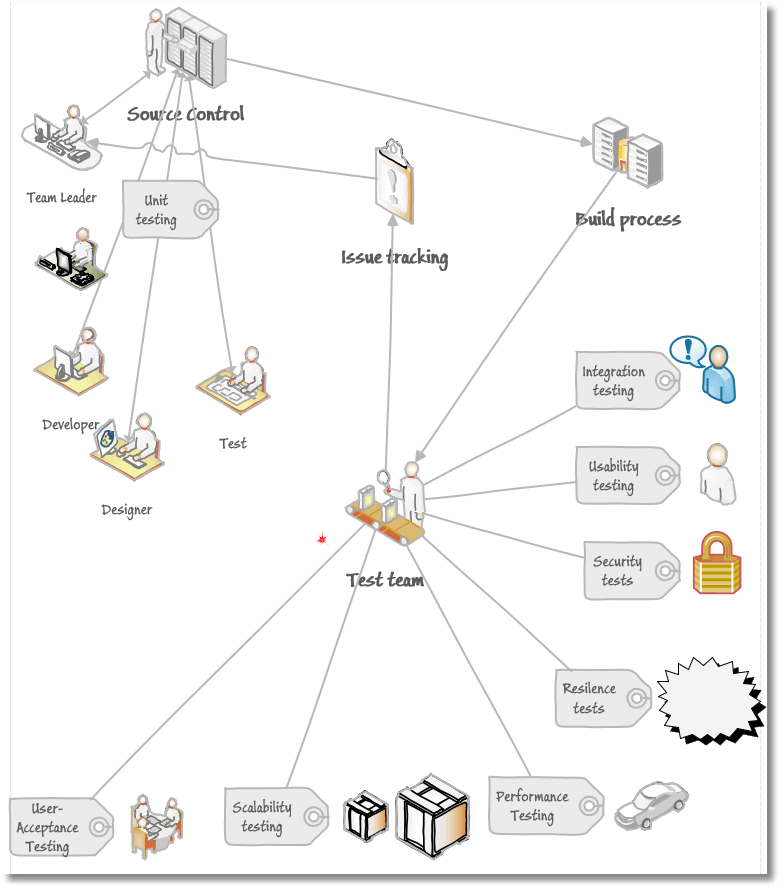

Types of database test: the what, when and how

Database testing is as complex as database development and deserves the necessary resources. There are several activities that are only vaguely related, but which come under the umbrella term of ‘database testing’. Whereas some are done by developers, others will be done by an ‘embedded’ test team. Some of the more specialized types of test, such as security testing, are done by entirely different teams. Nowadays, the basic tests must be automated, but you can only run automated tests for problems that you already know about. To cope with unanticipated issues, tests must include continuous exploratory testing that, when it finds bugs, enshrines the associated exploratory test as an automated test.

Database tests will vary in form and complexity depending on the type and purpose of the database. They are generally categorised by their purpose, though it is also useful to distinguish them in other ways, such as whether they are automated, manual or exploratory.

The following sections summarize the main categories of testing, according to their purpose.

Unit tests

What:

Unit testing is performed on each routine, in isolation, to ensure that it returns a predictable result for each specific set of inputs that is used. They are required for all modules, such as procedures, functions, views and rules.

The simplest unit tests are those on database objects with no code. A table must, for example, be tested to ensure that it has the necessary constraints so that only appropriate data can be inserted into it. Functions or procedures that take parameter lists and produce results are also easy; the unit test will verify that each one always returns the expected data, in the expected structure (correct column names and types), for the whole range of possible data or parameter values. Other unit tests might, for example, ensure that aggregations perform rounding of figures in a way that conforms with industry standards. Unit tests will also perform basic resilience testing, to ensure that the module continues to respond correctly even if it encounters ‘dirty’, ‘unexpected’ or ‘difficult’ data, such as the names Mr Null or Mrs O’Brien, or negative currency items.
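As a minimal sketch of a constraint test of this kind, the following Python uses the standard-library sqlite3 module as a stand-in for the real RDBMS. The payment table, its columns and the try_insert helper are hypothetical names chosen for illustration; the idea is simply that ‘difficult’ but valid names must be accepted, while missing names and negative amounts must be rejected by the table’s constraints.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database holding the table under test."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE payment (
            payment_id INTEGER PRIMARY KEY,
            payer_name TEXT NOT NULL CHECK (length(trim(payer_name)) > 0),
            amount     NUMERIC NOT NULL CHECK (amount >= 0)
        )
        """
    )
    return conn


def try_insert(conn: sqlite3.Connection, payer_name, amount) -> bool:
    """Return True if the row is accepted, False if a constraint rejects it."""
    try:
        conn.execute(
            "INSERT INTO payment (payer_name, amount) VALUES (?, ?)",
            (payer_name, amount),
        )
        return True
    except sqlite3.IntegrityError:
        return False


conn = make_db()
results = {
    # Valid but awkward values: these must be accepted.
    "surname Null": try_insert(conn, "Mr Null", 10.00),
    "apostrophe": try_insert(conn, "Mrs O'Brien", 25.50),
    # Invalid values: the constraints must reject these.
    "missing name": try_insert(conn, None, 5.00),
    "blank name": try_insert(conn, "   ", 5.00),
    "negative amount": try_insert(conn, "Mr Smith", -1.00),
}
accepted_rows = conn.execute("SELECT COUNT(*) FROM payment").fetchone()[0]
print(results, accepted_rows)
```

In a real project, the same checks would normally live in the team’s chosen test framework and run against the database engine used in production, since constraint behaviour differs between systems.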

The easiest tests to automate are those that have no dependencies. The more objects that reference the object under test, and the more objects to which it refers, the more complicated the unit test, but its objective must remain a simple test of the serviceability of the object.

When:

Unit tests are run throughout development whenever an object is created, and before committing to version control any object changes. No untested code should ever reach the regular build. Unit tests are usually easy to add to a build script for the object. In test-driven development, the test harness precedes the development and is enhanced alongside the development of the object. It often checks the performance of the unit as well as its accuracy.

Test data requirements:

Unit testing is done on small, immutable datasets containing only data that the object can reference directly. These data sets are usually kept with the object source in source control. The developers will wish to include in these datasets many of the edge-case values that the resilience/limit testers generally add to their own datasets, in order to avoid any such errors in subsequent tests.

Integration/Regression tests

What:

Integration and regression tests run on every identifiable process, rather than individual objects. Integration tests are prepared together with the intended users to conform with the business model and must not change without sign-off. They will validate that a set of objects work together, and that the interfaces between them are correctly configured and deployed, so that they always perform the required process and produce the expected result.

Integration tests are necessary in the same way that the running of a clock is tested as well as the clock’s individual components. However splendid a clock’s mechanism may be, it must still always tell the correct time. These tests are best devised by people who are not actively involved in development, as they require a different mindset and should be directed by the requirements stated in the business model.

When:

Integration tests and regression tests are usually performed on completion of every build. Where builds are regular and frequent, these tests must be scripted. Normally, test staff will also do manual tests, many of them exploratory, that, if successful, will be automated and added to the test bank. These will include checks on any new processes that are introduced in the build.

Test data requirements:

Like unit tests, they use a standard input and must check the output against the ‘correct’ output. For example, when testing the purchase of an item in a ‘basket’, you will agree with the business what should happen, then set up integration tests to prove that every part of the purchasing process works as defined by the business, and all appropriate tables are updated as expected.

The test data should be realistic business data that conforms to experience but changes as little as possible, because it must be cross-checked by the business to make sure of the validity of the result. Whichever way one automates the integration tests, the results of the tests should be quickly and easily summarized and reported so that developers can quickly be alerted to any issues.
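The ‘basket’ example can be sketched as a small integration test that loads a fixed “before” data set, runs the process, and compares the affected tables with an agreed “after” data set. This is only an illustration under stated assumptions: SQLite stands in for the real database, and the stock and orders tables, the purchase routine and the expected values are all hypothetical.

```python
import sqlite3

SCHEMA = """
CREATE TABLE stock (
    item     TEXT PRIMARY KEY,
    quantity INTEGER NOT NULL CHECK (quantity >= 0)
);
CREATE TABLE orders (
    order_id INTEGER PRIMARY KEY,
    item     TEXT NOT NULL,
    quantity INTEGER NOT NULL
);
"""

# Fixed input data and the outcome agreed with the business (hypothetical).
BEFORE_STOCK = [("gadget", 3), ("widget", 10)]
EXPECTED_STOCK = [("gadget", 3), ("widget", 8)]
EXPECTED_ORDERS = [(1, "widget", 2)]


def purchase(conn, item, quantity):
    """Process under test: record the order and decrement stock atomically."""
    with conn:  # commits on success, rolls back if any statement fails
        conn.execute(
            "INSERT INTO orders (item, quantity) VALUES (?, ?)", (item, quantity)
        )
        conn.execute(
            "UPDATE stock SET quantity = quantity - ? WHERE item = ?",
            (quantity, item),
        )


def snapshot(conn):
    """Read back every table the process is expected to touch."""
    stock = conn.execute("SELECT item, quantity FROM stock ORDER BY item").fetchall()
    orders = conn.execute(
        "SELECT order_id, item, quantity FROM orders ORDER BY order_id"
    ).fetchall()
    return stock, orders


def run_integration_test():
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO stock (item, quantity) VALUES (?, ?)", BEFORE_STOCK)
    conn.commit()

    purchase(conn, "widget", 2)
    after_purchase = snapshot(conn)

    # Ordering more than is in stock must fail and leave no partial changes.
    try:
        purchase(conn, "gadget", 5)
        oversell_rejected = False
    except sqlite3.IntegrityError:
        oversell_rejected = True
    after_oversell = snapshot(conn)

    return {
        "purchase_ok": after_purchase == (EXPECTED_STOCK, EXPECTED_ORDERS),
        "oversell_rejected": oversell_rejected,
        "oversell_left_no_trace": after_oversell == (EXPECTED_STOCK, EXPECTED_ORDERS),
    }


summary = run_integration_test()
print(summary)
```

Returning a short summary of named checks, rather than raising on the first failure, makes it easier to report the outcome of a whole test run to developers in one place.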

Performance tests

What:

Performance tests are devised to ensure that response time remains linear in the face of increasing transactional workload. They check that the time taken for a business process is within the criterion required and expected by the users, under reasonably predictable workloads. They also check that data integrity errors or deadlocks do not occur because of a high transactional loading or a large number of active sessions.

When:

They are often left to the point at which a release is imminent, in the mistaken belief that performance problems are easily remedied. A better approach is to run performance tests in parallel to integration tests. The report of the results of these tests must have enough detail to allow developers to replicate the issues quickly and correct the code.

Test data requirements:

Data that is close to the live data, in its volume and characteristics, is needed for performance testing. Most important is that the test data is similar enough in distribution to the existing or predicted production data that the released database is unlikely to produce inappropriate execution plans and poorly-executing queries. However, where there is an existing production version of the database, some of this testing can be done by importing just the statistics objects of the live database and checking that the execution plans of the important queries are appropriate when generated against these statistics.

Scalability tests

What:

Scalability tests are devised to ensure that performance isn’t degraded beyond the linear by any increase in the volume, or size, of the data. They are likely to check that no queries show execution plan regressions as the size of the data grows. They can also be used alongside performance tests to plan the operational hardware requirements of the server, by running a consistent size of data on different server configurations.

When:

Scalability tests are like performance tests, but with a narrower remit. They are often left till late in the deployment pipeline, purely because of difficulties, in the past, of providing the necessary bulk of test data. Now that it is relatively easy to generate realistic test data, in bulk, some of the initial scalability tests can be shifted left into development so that any obvious scalability problems can be fixed before the overall database design solidifies.

Test data requirements:

Testing whether the database scales in a linear way just requires bulk rather than verisimilitude, so production data is of little use, as it is difficult to change its size without a great deal of work. Instead, we need a way to generate different volumes of test data, quickly.
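A rough sketch of this idea, assuming SQLite as a stand-in for the real database: the same generator produces the hypothetical orders table at several sizes, and at each size the test records the elapsed time of one important query and confirms that its plan still uses the index. The table, the index name, the query and the chosen sizes are all illustrative assumptions, and timings taken this way are indicative only.

```python
import random
import sqlite3
import time

QUERY = "SELECT COUNT(*), SUM(total) FROM orders WHERE customer_id = ?"


def build_orders(row_count: int, seed: int = 42) -> sqlite3.Connection:
    """Generate a table of the requested size with repeatable random content."""
    rng = random.Random(seed)
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders ("
        "order_id INTEGER PRIMARY KEY, "
        "customer_id INTEGER NOT NULL, "
        "total REAL NOT NULL)"
    )
    conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
    rows = (
        (rng.randrange(1, row_count // 10 + 2), round(rng.uniform(1, 500), 2))
        for _ in range(row_count)
    )
    conn.executemany("INSERT INTO orders (customer_id, total) VALUES (?, ?)", rows)
    conn.commit()
    return conn


def plan_details(conn: sqlite3.Connection) -> list:
    """Return the human-readable steps of the query plan (fourth column)."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + QUERY, (1,))]


def measure(row_count: int) -> dict:
    conn = build_orders(row_count)
    start = time.perf_counter()
    conn.execute(QUERY, (1,)).fetchone()
    elapsed = time.perf_counter() - start
    stored = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
    return {"rows": stored, "seconds": elapsed, "plan": plan_details(conn)}


report = [measure(n) for n in (1_000, 10_000, 100_000)]
for entry in report:
    uses_index = any("idx_orders_customer" in step for step in entry["plan"])
    print(entry["rows"], f"{entry['seconds']:.6f}s", "index used:", uses_index)
```

Asserting on the plan rather than on the raw timing keeps the check stable on shared or noisy test servers; the timings are better treated as a trend to review across runs.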

Resilience tests

What:

Resilience tests (a.k.a. limit tests) test the response of the application to unpredictable or unusual working conditions, such as intermittent network faults, or partial hardware failure, or the presence of unexpected or ‘dirty’ data. The aim is to determine the likely points of failure and so get a measure of the system’s resilience. They tend to use specialized tools that can play back a variety of simulated usage designed to be difficult to handle. They are also likely to test ETL processes and downstream reporting.

When:

Resilience tests are amongst the most difficult database tests to perform because they require a level of manual intervention and so are difficult to automate. In the writer’s experience, resilience tests are usually done by specialist teams late in the pipeline. Limit testers are in short supply, even in the corporate context, and so are often used by several different development teams.

Test data requirements:

As well as simulating unexpected conditions and failures, resilience testing generally uses exploratory techniques that require the use of unpredictable and unusual data in the processes that are run, such as very long or short strings, or unusual currency representations. Data sets are usually generated specially for the purpose, but if you choose to use masked production data, then you’ll need to inject the edge-case values into it, because ‘Little Bobby Tables’ is unlikely to have been inserted into the current production data.
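Injecting edge cases into an existing data set can be as simple as overwriting a column in a few randomly chosen rows. The sketch below assumes the masked data is already available as a list of dictionaries; the inject_edge_cases function, the column name and the particular list of awkward values are illustrative assumptions rather than a standard set.

```python
import random

# A few awkward values; a real list would be longer and agreed with the testers.
EDGE_CASE_NAMES = [
    "Robert'); DROP TABLE Students;--",  # 'Little Bobby Tables'
    "Null",
    "O'Brien",
    "",
    "x" * 500,  # very long string
    "Zoë Müller-Lüdenscheidt",  # non-ASCII characters and a hyphen
]


def inject_edge_cases(rows, column, edge_values, seed=0):
    """Return a copy of rows in which each edge value overwrites `column`
    in a distinct, randomly chosen row. The input rows are not modified."""
    if len(edge_values) > len(rows):
        raise ValueError("not enough rows to hold every edge-case value")
    rng = random.Random(seed)  # seeded so that the test data is repeatable
    result = [dict(row) for row in rows]
    targets = rng.sample(range(len(result)), k=len(edge_values))
    for index, value in zip(targets, edge_values):
        result[index][column] = value
    return result


masked = [{"id": i, "name": f"Customer {i}"} for i in range(20)]
injected = inject_edge_cases(masked, "name", EDGE_CASE_NAMES)
changed = [row for row, original in zip(injected, masked) if row != original]
print(len(injected), len(changed))
```

Seeding the random choice means the same rows are altered on every run, so a failure found with this data can be reproduced exactly.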

Security tests

What:

Security tests concentrate on ensuring that the database introduces no security vulnerabilities, such as by the careless use of dynamic SQL, which can allow unauthorized access to data. They generally consist of a mix of exploratory ad-hoc tests and automated penetration tests. The tests evolve from the business model and the appropriate legislative framework.
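To illustrate the dynamic SQL risk, the following sketch contrasts a query built by string concatenation with a parameterized one, and shows the kind of automated check that detects the difference. SQLite stands in for the real database, and the account table, both lookup functions and the attack string are hypothetical examples.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE account (owner TEXT NOT NULL, balance INTEGER NOT NULL)")
    conn.executemany(
        "INSERT INTO account (owner, balance) VALUES (?, ?)",
        [("alice", 100), ("bob", 250)],
    )
    return conn


def balances_unsafe(conn, owner):
    # Careless dynamic SQL: the input is pasted straight into the statement.
    sql = f"SELECT owner, balance FROM account WHERE owner = '{owner}'"
    return sorted(conn.execute(sql).fetchall())


def balances_safe(conn, owner):
    # Parameterized query: the input is only ever treated as a value.
    sql = "SELECT owner, balance FROM account WHERE owner = ?"
    return sorted(conn.execute(sql, (owner,)).fetchall())


# Input that turns the WHERE clause of the unsafe query into an always-true test.
ATTACK = "nobody' OR '1'='1"

conn = make_db()
leaked = balances_unsafe(conn, ATTACK)
protected = balances_safe(conn, ATTACK)
print("unsafe returned", len(leaked), "rows; safe returned", len(protected))
```

A security test for a routine that accepts user input would assert that hostile input like this returns no rows belonging to other users, as the parameterized version does here.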

When:

Exploratory, ad-hoc tests require specialized security knowledge and so are generally performed as part of the release pipeline. The automated penetration tests can be run routinely. If this is done as part of development, any failure can be remedied more quickly, even if it requires extensive redesign, especially where the access-control model is unsustainable or impossible to maintain.

Test data requirements:

Penetration tests are scripted and come with their working data. The data that is used by exploratory testing is generated, as there is no need for live data: it is enough merely to prove that the data has been successfully exposed.

User-acceptance tests

What:

User-acceptance tests ensure that the database is aligned with the business objectives. They are conducted by the customers of the database, usually people from the business who would use the system, developers of applications or downstream reporting systems, as well as training staff. Operations will also check that the database has met all the requirements for maintainability.

When:

It’s unwise to leave UATs until the point of deployment. They should be part of the development process, from early prototypes, to ensure that the database is aligned as closely as possible to the business model, and so that the team can adapt development quickly in response to any changes in business strategy. Early UATs also allow materials for staff training to be prepared well in advance of the release of a new or changed system.

Test data requirements:

Although the data need not be derived from production data, it should be realistic enough that your stakeholders can decide whether the business process you are delivering meets their needs. It’s harder to grasp an example that uses abstract or unrealistic data. The test data sets and test scenarios are usually created in collaboration with the business, with technical help from the testers. The scenarios and test data are likely to be reused for subsequent training and usability tests.

Usability tests

What:

Usability tests force reality upon application and interface designs that appear to be usable but fail to meet expectations, contravene existing legislation for the use of technology in the workplace, or fail to meet requirements for users with disabilities. They check that interfaces are documented, appropriate and intuitive. They are often included in UAT but are done by different teams, in different locations, for different purposes, and have their own legislative framework with which to check compliance.

When:

These tests are normally done by asking volunteers who are unfamiliar with the system to follow written ‘scenarios’. The tests are very manual and difficult to automate in any way, but they should be part of development, when remediation is relatively easy. If they are left till later, any required changes take a lot more time and so are often ‘put to one side’, with unpleasant consequences.

Test data requirements:

Usability testing is more relevant to an application using the database but is included because of its requirement for realistic data within the database.

Managing the test data

While the real data is helpful for some types of testing, it’s not required or even appropriate for many others. Unit tests are better done on small, immutable datasets that are retained in development. In fact, ‘live data’ cannot be used here, unless it is immutable. Likewise, integration tests will require an immutable set of “before” and “after” data against which to test the outcome of the process. The data should be realistic but does not need to be based on real transactions.

User-acceptance, usability, and some types of performance test are easier if we can use real data. Also, if a bug cannot be reproduced any other way than by using production data, then there is an established case for using it under the appropriate controls (although bugfixes aren’t part of the test activity).

Using the live data for development and testing often comes with data security and privacy risks. In most corporate settings, the classic solution to this problem was to determine which tests can only be done on current production data and have specialist testers, with a high level of security vetting, run the tests in an isolated office within the data centre.

An alternative is to use a data masking tool to ensure that the data is pseudonymized sufficiently to comply with existing legislation, before distribution. However, this still requires additional security measures; for example, the GDPR recommends that pseudonymized personal data be held only in an encrypted form, due to the increasing sophistication of inference attacks.
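To make the idea of pseudonymization concrete, here is a minimal sketch that replaces an identifying value with a keyed hash, using Python’s standard hmac and hashlib modules. It is not how any particular masking tool works; the pseudonymize function, the prefix and the keys are hypothetical. Because the same input always maps to the same token, joins between tables and the frequency of repeated values are preserved, which is also why the result remains pseudonymized rather than anonymized data and still needs protecting.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes, prefix: str = "cust_") -> str:
    """Map a value to a stable token that cannot be reversed without the key."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return prefix + digest[:16]


# The key must be kept away from the masked data set (hypothetical values here).
key = b"keep-this-out-of-source-control"
other_key = b"a-different-key"

token_a = pseudonymize("alice@example.com", key)
token_a_again = pseudonymize("alice@example.com", key)
token_b = pseudonymize("bob@example.com", key)
token_a_other_key = pseudonymize("alice@example.com", other_key)
print(token_a, token_b)
```

Using a keyed hash rather than a plain hash means that someone holding only the masked data cannot simply hash a list of known email addresses to find matches.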

We can meet many of the requirements for database test data using data generation. Most of the different types of test I’ve discussed can be undertaken on the latest build of the database, stocked with standard sets of ‘development’ data.

For performance testing, we can use faked data as well as masked, anonymized data, but in either case it must match as closely as possible the real distribution. If you have sign off under data protection requirements, the easiest way is to anonymize the real data, in a way that retains its correct distribution, using a tool like Data Masker.

Alternatively, data generation is becoming more sophisticated and is now able to match more closely the distribution of live data. For scalability testing, it has the advantage of allowing datasets of any size to be produced. Ideally, we’ll see the same execution plans when testing on similar volumes of data, and we can check this by importing statistics objects from the live database.
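The simplest form of distribution matching, for a single categorical column, is to sample generated values using the frequencies observed in the live data as weights. The sketch below illustrates only that one idea and assumes a hypothetical order-status column; real data generators also have to respect correlations between columns, keys and constraints.

```python
import random
from collections import Counter


def generate_matching(live_values, count, seed=0):
    """Generate `count` values whose frequencies follow those of live_values."""
    frequencies = Counter(live_values)
    categories = sorted(frequencies)
    weights = [frequencies[category] for category in categories]
    rng = random.Random(seed)  # seeded so that the generated set is repeatable
    return rng.choices(categories, weights=weights, k=count)


def proportions(values):
    """Return the share of the total taken by each distinct value."""
    total = len(values)
    return {value: n / total for value, n in Counter(values).items()}


# Hypothetical profile of a live column: 70% shipped, 20% pending, 10% returned.
live_statuses = ["shipped"] * 70 + ["pending"] * 20 + ["returned"] * 10
generated = generate_matching(live_statuses, count=50_000)

live_share = proportions(live_statuses)
generated_share = proportions(generated)
largest_gap = max(abs(live_share[k] - generated_share.get(k, 0.0)) for k in live_share)
print(f"largest difference in share: {largest_gap:.4f}")
```

Only the frequency profile of the live column is needed to drive the generator, not the rows themselves, so the generated set of any size can be produced without distributing the live data.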

Managing data sets between database versions

However we produce the test data, one challenge is ensuring it always keeps pace with metadata changes. The development data must match the version of the database to be built, so that the automated build never fails.

If, for example, a group of interrelated tables is changed between version 4.59 and version 4.60, then the data for all affected objects must change at that point too. In Managing Datasets for Database Development Work using Flyway, I explain a generic way of managing different data sets for database development work, and of ensuring the data is always correct for the database version under test.
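One simple way to picture the requirement, which is an assumption made for illustration and not necessarily the approach described in that article, is to label each saved data set with the database version at which it became valid, and then pick the most recent data set that is not newer than the version being built. The function names and version list below are hypothetical.

```python
def parse_version(text: str) -> tuple:
    """Turn '4.60' into (4, 60) so that versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def dataset_for(target_version: str, dataset_versions: list) -> str:
    """Pick the newest data set that is not newer than the target version."""
    target = parse_version(target_version)
    eligible = [v for v in dataset_versions if parse_version(v) <= target]
    if not eligible:
        raise LookupError(f"no data set is old enough for version {target_version}")
    return max(eligible, key=parse_version)


# Versions at which the data had to change to match the schema (hypothetical).
available = ["4.1", "4.59", "4.60", "4.100"]

print(dataset_for("4.59", available))
print(dataset_for("4.60.2", available))
print(dataset_for("4.99", available))
```

Comparing versions as tuples of integers avoids the trap of string comparison, in which ‘4.100’ would sort before ‘4.59’.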

Technologies for managing test cells

Data for database development has been an expense, because of the scale of network resources required to meet the needs of a development team. It has also suffered from the problem that importing and exporting data has always been a slow and excessively-manual process. To make matters worse, the integration and build process has, in the past decade, been condensed from months to hours, and an overnight database build from source control is now common.

There are, therefore, two growing pressures. The size of data is increasing, and the time between builds is decreasing. To meet test requirements, these tests are increasingly organised in ‘cells’ that require a group of database servers running tests in parallel. This has increased the total network storage requirement greatly. To get around these difficulties, various techniques have been tried. For example:

- Virtual servers, usually cloud-based – these can be scaled up for a test run and then taken down afterwards. Unfortunately, this requires the movement of data between on-premise storage and the cloud, which is far slower than local on-premise hosting.

- On-premise use of Virtual machine hosting – this has generally provided a more adaptable solution, but the problem remains that the data still must be repeatedly copied to every development and test machine on every build.

- Containers – these can simplify the process in some cases where the working database system requires a complex multi-database configuration with precise server setup requirements, but their demands on data storage are usually greater than a virtual server.

- Database Clones – Cloning technology allows many instances to share one copy of the original data. This neatly sidesteps the problem of needing to copy data out to every server that needs it. SQL Clone uses Windows Virtual Hard Disk (vhdx) technology and is SQL Server-specific. Redgate Clone offers cross-RDBMS cloning, based on Kubernetes clusters. The ‘containerized clones’ (data containers) combine the advantages of container provisioning with a lightweight data footprint.

With database clones, a test cell can be provisioned in a few minutes, with each database being a mere fraction of its apparent size, and we can easily automate the process of deploying and reverting, customizing and deleting old clones and images safely, prior to refreshing.

Conclusion

Even in the most Agile start-up, database tests need to be present even if for no better reason than the fact that the legislation for personal and financial data makes no concessions for scale.

If test data is to be realistic in its nature and in its volume, while meeting the requirement of a rapidly reducing development cycle, the technology must be appropriate. To meet the increasing demands for security and data protection, a variety of approaches are required in order to provide the appropriate test data. Any development team will be wise to have all the necessary tools handy, including masking, pseudonymization and generation, so that they can produce data that meets the full range of requirements, for every type of testing or debugging that is required.

To ensure that we meet all the requirements of testing, we must accept the importance of automated testing at all stages. This means that the appropriate test data must be easy to prepare. The test process in general must be as undemanding as possible. If it is a monotonous chore, it becomes tempting to deny its obvious importance in database development and skimp on its use. Testing, like development, must be done in parallel and requires the servers and services to allow this to happen. The test function deserves the resources to allow this to happen. Only if done properly does it become a rewarding part of the development process.