Docker has gained popularity as a containerization platform that allows you to develop, deploy, and execute applications faster. It packages applications and their dependencies into standardized entities known as containers. These containers are lightweight, portable, and capable of operating independently. Containers allow developers to build, deploy, and manage applications in different working environments. In addition, containers can be used for both development and production.

Docker logging is an essential process in managing containers within a production setting. It enables the monitoring and troubleshooting of applications. This facilitates the detection and resolution of potential issues. When you implement Docker logging you can easily identify and fix issues that may arise when building Docker containers.

For logging to work properly, it’s important to capture logs from the application itself. You can also capture logs from the host system and the Docker service. Combining these logging techniques gives you efficient logging for Dockerized applications.

In this two-part series, we will discuss Docker logging, from basic to advanced concepts. In part 1, we will cover what Docker logging is, the Docker logging drivers, and why it is important to perform Docker logging when building containers. By the end of the article, you will be familiar with Docker logging and its importance when building Docker containers as a DevOps engineer.

So, let’s get started!

What is involved with Docker Logging?

Docker logging involves the collection, storage, and organization of log information that applications generate within Docker containers. As applications execute within Docker containers, they generate diverse log messages. These messages are written to the standard output (stdout) and standard error (stderr) streams. Docker’s logging mechanisms facilitate the storage and retention of these logs. The log messages serve various purposes such as monitoring, diagnosing issues, debugging, and ensuring auditability.
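To make the two streams concrete, here is a minimal Python sketch. The helper name `emit` is our own and not part of Docker or any library; it simply writes ordinary messages to stdout and errors to stderr, which are the two streams Docker collects from a container.

```python
import sys


def emit(message: str, *, error: bool = False) -> None:
    """Write one log line to stderr (errors) or stdout (everything else)."""
    stream = sys.stderr if error else sys.stdout
    # flush=True avoids losing lines to buffering when output is not a terminal
    print(message, file=stream, flush=True)


if __name__ == "__main__":
    emit("Starting application...")
    emit("An error occurred", error=True)
```

Run inside a container, both lines would be picked up by whichever logging driver the container uses.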

Logging Drivers

Docker offers various logging drivers that dictate the storage location and format of log messages. These logging drivers are listed below:

json-file

The json-file logging driver writes container logs in JSON format to local files on the Docker host. Each log entry is formatted as a JSON object, providing structured data for easy parsing and analysis.

It’s suitable for environments requiring local log storage with structured log data. Enables seamless integration with log management tools supporting JSON log formats.
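As a rough illustration of why the JSON format is easy to parse, the sketch below reads lines shaped like json-file entries, where each line is one JSON object with `log`, `stream`, and `time` keys. The sample values are made up, and on a typical Linux host the real files usually live under /var/lib/docker/containers/, so treat the sample as an assumption rather than captured output.

```python
import json

# Sample lines in the shape the json-file driver writes: one JSON object per
# line with "log", "stream", and "time" keys. The values are made up.
SAMPLE = (
    '{"log":"Starting application...\\n","stream":"stdout","time":"2024-04-15T11:44:22.000000000Z"}\n'
    '{"log":"An error occurred\\n","stream":"stderr","time":"2024-04-15T11:44:23.000000000Z"}\n'
)


def parse_json_file_log(text: str) -> list[dict]:
    """Parse json-file driver output into a list of dictionaries."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        entry = json.loads(line)
        entry["log"] = entry["log"].rstrip("\n")  # drop the trailing newline
        entries.append(entry)
    return entries


if __name__ == "__main__":
    for entry in parse_json_file_log(SAMPLE):
        print(entry["time"], entry["stream"], entry["log"])
```

Because every entry records its stream, a tool can separate stdout from stderr after the fact without any extra work in the application.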

syslog

The syslog logging driver forwards container logs to the syslog daemon on the Docker host, allowing logs to be further processed and forwarded to remote syslog servers or stored locally.

It’s ideal for integrating Docker logs with existing syslog infrastructure or centralizing log management using syslog-compatible tools and services. Enables standardized log collection and aggregation.

journald

The journald logging driver sends container logs to the systemd journal on the Docker host, providing centralized logging capabilities alongside system logs. Logs are stored and managed within the systemd journal.

It’s suitable for environments leveraging systemd journal as the primary logging mechanism. Facilitates centralized log analysis and management alongside system logs.

fluentd

The fluentd logging driver streams container logs to an instance of Fluentd, a robust log collector and aggregator. Fluentd can process and route logs to various destinations, including Elasticsearch, Kafka, or cloud storage.

It enables flexible log routing and aggregation in Docker environments, supporting centralized log processing and storage. Ideal for organizations with complex log management requirements and diverse log destinations.

awslogs

The awslogs logging driver sends container logs directly to Amazon CloudWatch Logs, a managed log management service provided by AWS. Logs are stored, analyzed, and monitored using CloudWatch Logs features.

It’s designed for seamless integration with AWS environments, allowing container logs to be centrally managed and monitored alongside other AWS services. Facilitates comprehensive log analysis and monitoring within the AWS ecosystem.

gelf

The gelf logging driver forwards container logs to a Graylog Extended Log Format (GELF) endpoint, typically a Graylog server. GELF format provides features such as structured logging and log enrichment.

It’s suitable for organizations utilizing Graylog for centralized log management and analysis. Enables structured log processing and visualization, facilitating efficient log search and analysis.
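To show what structured logging and log enrichment look like in GELF, here is a small sketch that builds a GELF 1.1 message in Python. The function name and the example field are hypothetical, and this is not the exact payload Docker’s gelf driver produces; it only illustrates the format’s required fields and its underscore-prefixed additional fields.

```python
import json
import time


def build_gelf_message(host: str, short_message: str, level: int = 6, **extra) -> str:
    """Return a GELF 1.1 payload as a JSON string.

    Keyword arguments in `extra` become additional fields, which GELF
    requires to be prefixed with an underscore.
    """
    message = {
        "version": "1.1",
        "host": host,
        "short_message": short_message,
        "timestamp": time.time(),  # seconds since the epoch
        "level": level,  # syslog severity; 6 is informational
    }
    for key, value in extra.items():
        message[f"_{key}"] = value
    return json.dumps(message)


if __name__ == "__main__":
    # "docker-host-1" and container_name are placeholder values
    print(build_gelf_message("docker-host-1", "Starting application...", container_name="web"))
```

The additional fields are what make enrichment possible: a log server can index and search on them without parsing the message text.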

logentries

The logentries logging driver streams container logs to the Logentries service, a cloud-based log management and analytics platform. Logentries provides features for log aggregation, search, and visualization.

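It’s ideal for organizations leveraging Logentries for centralized log management and analysis. Facilitates real-time log monitoring, alerting, and troubleshooting in Docker environments. Note that the Logentries service has since been discontinued and recent Docker Engine releases have deprecated and removed this driver, so it is relevant mainly to older installations.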

splunk

The splunk logging driver sends container logs directly to Splunk Enterprise or Splunk Cloud, enabling centralized log management and analysis. Splunk provides features for log indexing, search, and visualization.

Designed for seamless integration with Splunk for enterprise-grade log management and analysis. Enables organizations to leverage Splunk’s powerful log analytics capabilities for Docker environments.
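A driver is chosen per container with the `--log-driver` flag of `docker run`, and driver-specific settings are passed with `--log-opt`. The sketch below only assembles such a command line; the helper name, the image name `my-image`, and the syslog address are placeholders of our own.

```python
import shlex


def docker_run_command(image: str, driver: str, **log_opts: str) -> list[str]:
    """Build a `docker run` argument list that selects a logging driver."""
    cmd = ["docker", "run", "--log-driver", driver]
    for key, value in log_opts.items():
        # Python identifiers cannot contain "-", so underscores are mapped back
        cmd += ["--log-opt", f"{key.replace('_', '-')}={value}"]
    cmd.append(image)
    return cmd


if __name__ == "__main__":
    # "my-image" and the address are placeholders
    print(shlex.join(docker_run_command(
        "my-image", "syslog", syslog_address="udp://192.0.2.10:514")))
    print(shlex.join(docker_run_command(
        "my-image", "json-file", max_size="10m", max_file="3")))
```

Returning a list rather than a single string keeps the command safe to pass to `subprocess.run` without shell quoting problems.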

Default Logging Option

Docker containers use the json-file logging driver as the default option. This option stores logs directly on the Docker host’s local filesystem. Nevertheless, many organizations prefer centralized logging solutions, which consolidate logs from numerous containers and hosts. This approach streamlines the analysis and administration of log data, enhancing operational efficiency.
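The default driver and its options can also be set for the whole host in the Docker daemon configuration file, usually /etc/docker/daemon.json on Linux. As a sketch, the Python helper below (its name is our own) renders the logging portion of that file; the rotation values are illustrative, not recommendations.

```python
import json


def daemon_logging_config(driver: str = "json-file", **log_opts: str) -> str:
    """Render the logging part of a Docker daemon.json file."""
    config = {"log-driver": driver}
    if log_opts:
        # Map Python-friendly names such as max_size to max-size
        config["log-opts"] = {k.replace("_", "-"): v for k, v in log_opts.items()}
    return json.dumps(config, indent=2)


if __name__ == "__main__":
    # Keep at most three 10 MB files per container (illustrative values)
    print(daemon_logging_config(max_size="10m", max_file="3"))
```

Setting `max-size` and `max-file` matters with json-file because the driver does not rotate logs unless told to. Changes to daemon.json take effect after the Docker daemon restarts and apply to newly created containers.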

Why It’s Important to Perform Docker Logging When Building Containers

In this section, we will discuss why it’s important to perform Docker logging when building containers. The following are some of the benefits of Docker logging:

Monitoring Application Health

Docker logging allows developers and administrators to monitor the health and performance of applications while building and testing containers. From the logs, developers can confirm that the application initializes correctly, that dependencies are installed successfully, and that configuration settings are applied as intended.

Monitoring application health during the build process helps identify any potential issues early on. This allows for timely troubleshooting and resolution.

In this example, we’ll use Python’s built-in logging module to log important events in a Flask web application. We’ll implement logging directly into the application code to monitor HTTP requests and application errors.

The first step is creating the Flask application and configuring the logging logic:

```python
# app.py
from flask import Flask
import logging

app = Flask(__name__)

# Configure logging
logging.basicConfig(level=logging.INFO)


@app.route('/')
def index():
    app.logger.info('Handling index request')
    return 'Hello, World!'


@app.route('/error')
def error():
    app.logger.error('An error occurred')
    return 'Internal Server Error', 500


if __name__ == '__main__':
    # Bind to all interfaces so the port published by Docker is reachable
    app.run(host='0.0.0.0', port=5000, debug=True)
```

Next, let’s create a Docker container for the Flask web application:

```dockerfile
# Dockerfile
FROM python:3.9

# Copy application code
COPY app.py /app/

# Set working directory
WORKDIR /app

# Install Flask
RUN pip install Flask

# Expose port
EXPOSE 5000

# Command to run the application
CMD ["python", "app.py"]
```

Build and run the Docker container using the following commands:

|

1 |

docker build -t <image-name/tag> . |

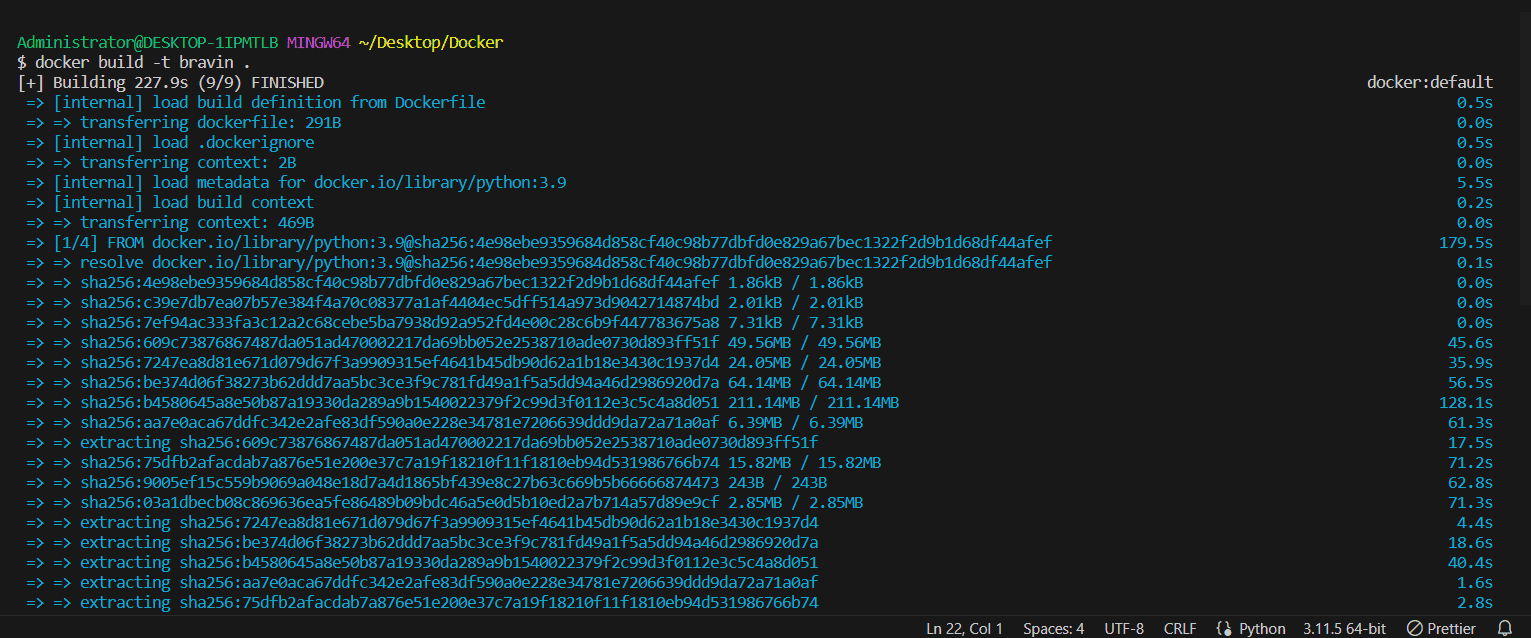

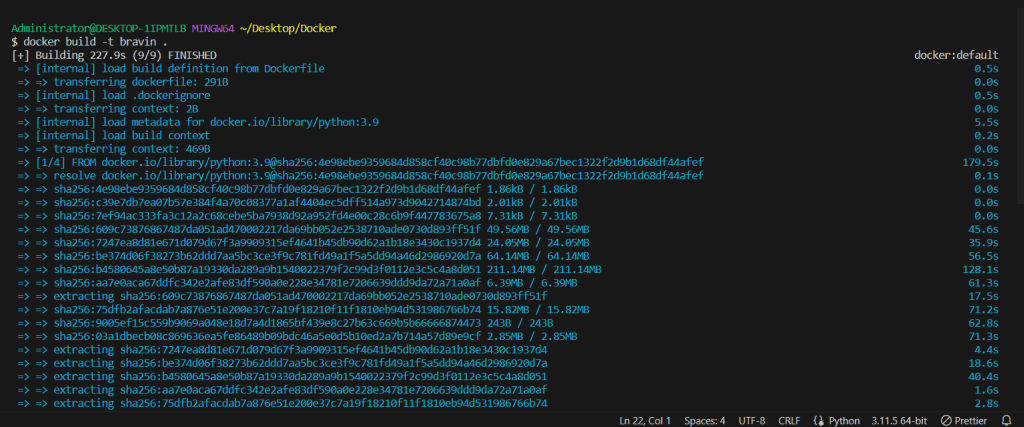

Output:

|

1 |

docker run -p 5000:5000 <image-name/tag> |

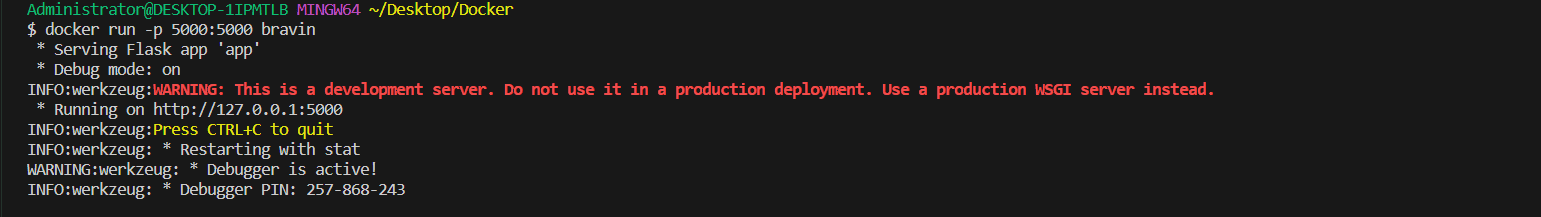

Output:

To view logs from a running Docker container for the Flask web application use the following command:

```bash
docker logs [OPTIONS] CONTAINER
```

Output:

```
$ docker logs mystifying_germain
/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration
/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/
/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh
10-listen-on-ipv6-by-default.sh: info: Getting the checksum of /etc/nginx/conf.d/default.conf
10-listen-on-ipv6-by-default.sh: info: Enabled listen on IPv6 in /etc/nginx/conf.d/default.conf
/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh
/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh
/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh
/docker-entrypoint.sh: Configuration complete; ready for start up
2024/04/15 11:44:22 [notice] 1#1: using the "epoll" event method
2024/04/15 11:44:22 [notice] 1#1: nginx/1.25.2
2024/04/15 11:44:22 [notice] 1#1: built by gcc 12.2.1 20220924 (Alpine 12.2.1_git20220924-r10)
2024/04/15 11:44:22 [notice] 1#1: OS: Linux 5.10.16.3-microsoft-standard-WSL2
2024/04/15 11:44:22 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 1048576:1048576
2024/04/15 11:44:22 [notice] 1#1: start worker processes
2024/04/15 11:44:22 [notice] 1#1: start worker process 30
2024/04/15 11:44:22 [notice] 1#1: start worker process 31
2024/04/15 11:44:22 [notice] 1#1: start worker process 32
2024/04/15 11:44:22 [notice] 1#1: start worker process 33
2024/04/15 11:44:22 [notice] 1#1: start worker process 34
2024/04/15 11:44:22 [notice] 1#1: start worker process 35
2024/04/15 11:44:22 [notice] 1#1: start worker process 36
2024/04/15 11:44:22 [notice] 1#1: start worker process 37
```

Note that this sample output comes from a container running nginx rather than the Flask application: it shows the nginx entrypoint scripts and worker processes starting. It is still a useful illustration. By default, nginx writes its logs to files such as access.log and error.log in the /var/log/nginx directory, where Docker does not collect them. The official nginx Docker image works around this by creating symbolic links from /var/log/nginx/access.log to /dev/stdout and from /var/log/nginx/error.log to /dev/stderr, so that Docker can collect and handle the logs.

The Flask application needs no such change. Python’s logging.basicConfig() writes to the standard error stream by default, so the messages logged in app.py are captured by Docker and are readily accessible through the Docker CLI. Formatting the logs as JSON can further simplify their use with various log management tools, which provides flexibility in managing and analyzing log data.
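As a sketch of sending application logs to stdout and stderr in JSON, the snippet below configures Python’s standard logging module with two stream handlers. The `JsonFormatter` class, the `configure_logging` function, and the chosen field names are our own assumptions, not part of Flask or Docker.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Format each record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        })


def configure_logging(level: int = logging.INFO) -> logging.Logger:
    """Send records below ERROR to stdout and ERROR and above to stderr."""
    out = logging.StreamHandler(sys.stdout)
    out.addFilter(lambda record: record.levelno < logging.ERROR)
    err = logging.StreamHandler(sys.stderr)
    err.setLevel(logging.ERROR)

    root = logging.getLogger()
    root.handlers.clear()  # avoid duplicate handlers if called twice
    root.setLevel(level)
    for handler in (out, err):
        handler.setFormatter(JsonFormatter())
        root.addHandler(handler)
    return root


if __name__ == "__main__":
    log = configure_logging()
    log.info("Handling index request")
    log.error("An error occurred")
```

Calling `configure_logging()` in place of `logging.basicConfig(...)` in an application like app.py would keep informational and error messages on separate streams, which Docker records along with each log entry.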

Diagnosing Issues

Logging can offer valuable insights into encountered errors or warnings while installing dependencies, compiling applications, or performing other tasks. This streamlines the debugging process. It also contributes to ensuring the accurate and dependable construction of containers.

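To illustrate diagnosing issues, let’s log startup events and errors in a Node.js application. Output from the npm install step in the Dockerfile appears in the docker build output, while the application’s own messages are available through docker logs.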

```javascript
// index.js
const express = require('express');
const app = express();

// Logging
console.log('Starting application...');

app.get('/', (req, res) => {
  res.send('Hello, World!');
});

app.get('/error', (req, res) => {
  console.error('An error occurred');
  res.status(500).send('Internal Server Error');
});

const PORT = process.env.PORT || 3000;
app.listen(PORT, () => {
  console.log(`Server running on port ${PORT}`);
});
```

Next, let’s create a Docker container for the Node.js application:

```dockerfile
# Dockerfile
FROM node:14

# Copy application code
COPY index.js /app/

# Set working directory
WORKDIR /app

# Install Express
RUN npm install express

# Command to start the application
CMD ["node", "index.js"]
```

Build and run the Docker container using the following commands:

|

1 |

docker build -t <image-name/tag> . |

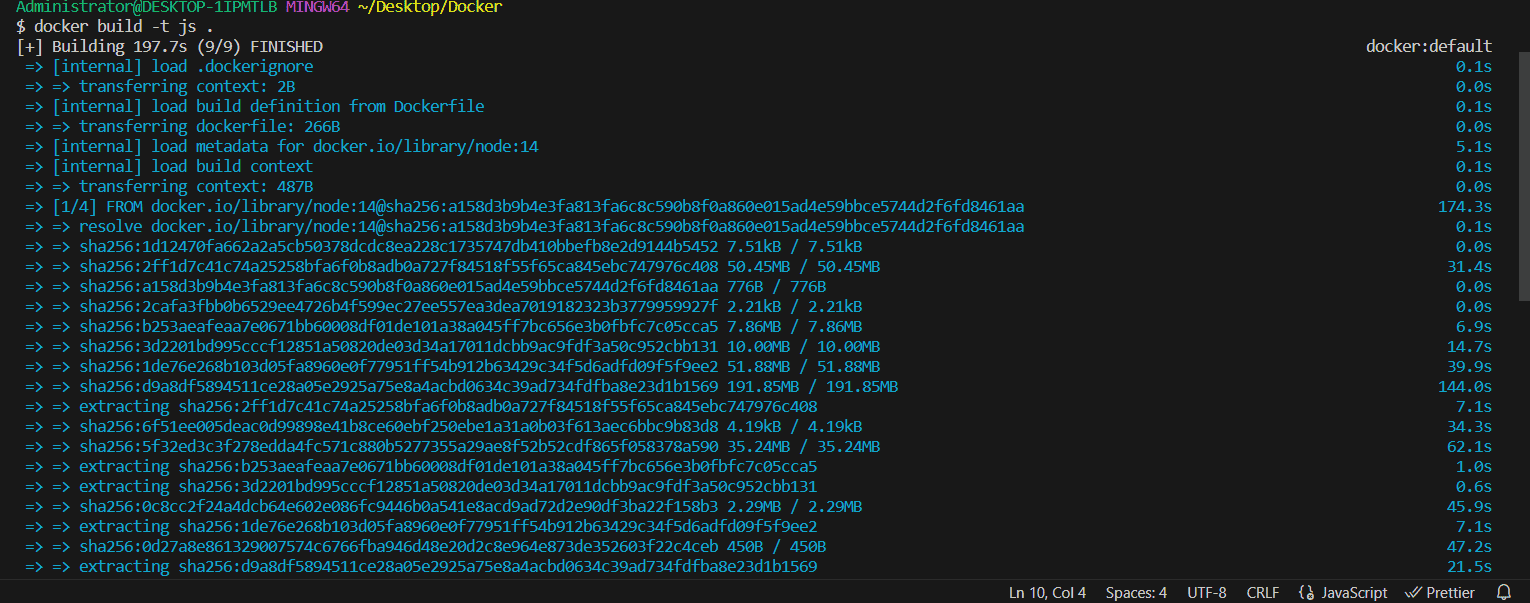

Output:

Then excute:

|

1 |

docker run -p 3000:3000 <image-name/tag> |

And this will output:

|

1 2 3 |

$ docker run -p 3000:3000 js Starting application... Server running on port 3000 |

To view logs from a running Docker container for the Node.js application use the following command:

```bash
docker logs [OPTIONS] CONTAINER
```

Output:

```
$ docker logs romantic_bhaskara
Starting application...
Server running on port 3000
```
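When a container produces a lot of output, a quick summary by severity can help you decide where to look first. The following Python sketch counts log lines by the severity word they contain. The pattern and the `count_levels` name are assumptions of ours; real applications may format their levels differently, so the pattern would need adjusting.

```python
import re
from collections import Counter

# Matches common severity words; real applications may use other formats.
LEVEL_PATTERN = re.compile(r"\b(error|warn(?:ing)?|notice|info)\b", re.IGNORECASE)


def count_levels(log_text: str) -> Counter:
    """Count log lines per severity keyword; unmatched lines count as 'other'."""
    counts = Counter()
    for line in log_text.splitlines():
        match = LEVEL_PATTERN.search(line)
        level = match.group(1).lower() if match else "other"
        if level == "warn":
            level = "warning"  # treat "warn" and "warning" as the same level
        counts[level] += 1
    return counts


if __name__ == "__main__":
    sample = "Starting application...\nAn error occurred\nServer running on port 3000\n"
    print(count_levels(sample))
```

The text passed in could be the saved output of the docker logs command for a container.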

Ensuring Security Compliance

Docker logging during the container build process can help ensure security compliance. When you log the output of security scans, you can easily detect potential security vulnerabilities or misconfigurations and rectify them before deploying containers in production environments.

To illustrate, we’ll use a minimal Java application that logs to standard output. The output of a security scan could be captured and reviewed in the same way.

```java
// Main.java
public class Main {
    public static void main(String[] args) {
        // Logging
        System.out.println("Starting application...");
        // Main application logic
        // ...
    }
}
```

Next, let’s create a Docker container for the Java application:

```dockerfile
# Dockerfile
FROM openjdk:11-jdk
# Copy application code
COPY Main.java /app/
# Set working directory
WORKDIR /app
# Compile Java code
RUN javac Main.java
# Command to start the application
CMD ["java", "Main"]
```

Build and run the Docker container using the following commands:

```bash
docker build -t <image-name/tag> .
docker run -p <port>:<port> <image-name/tag>
```

Output:

```
Starting application...
```

To view logs from a running Docker container for the Java application:

```bash
docker logs [OPTIONS] CONTAINER
```

Output:

```
$ docker logs CONTAINER
Starting application...
```

In each example, logging is incorporated directly into the application code to monitor important events, diagnose issues, and ensure security compliance. We viewed these logs using the docker logs command, which provides valuable insight into application behavior and facilitates debugging and monitoring throughout the container lifecycle.
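The OPTIONS part of docker logs accepts flags such as `--tail`, `--since`, `--timestamps`, and `--follow`. As a sketch, the Python helpers below assemble and run such a command. The function names and the container name `web` are hypothetical, and `fetch_logs` assumes the Docker CLI is installed and the container exists.

```python
import subprocess


def docker_logs_command(container, tail=None, since=None, timestamps=False, follow=False):
    """Build a `docker logs` argument list from a few common options."""
    cmd = ["docker", "logs"]
    if tail is not None:
        cmd += ["--tail", str(tail)]  # number of lines from the end
    if since:
        cmd += ["--since", since]  # e.g. "10m" or a timestamp
    if timestamps:
        cmd.append("--timestamps")
    if follow:
        cmd.append("--follow")  # streams until interrupted
    cmd.append(container)
    return cmd


def fetch_logs(container, **options):
    """Run `docker logs` and return (stdout, stderr) as text.

    Do not pass follow=True here: the command would not return.
    """
    result = subprocess.run(
        docker_logs_command(container, **options),
        capture_output=True, text=True, check=True,
    )
    return result.stdout, result.stderr


if __name__ == "__main__":
    # "web" is a placeholder container name; this only prints the command
    print(" ".join(docker_logs_command("web", tail=100, since="10m", timestamps=True)))
```

`fetch_logs` returns the two streams separately because docker logs replays the container’s stdout and stderr on the matching streams of the CLI.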

Conclusion

In conclusion, Docker logging is a critical aspect of managing containerized applications efficiently. In this article, we discussed the basic concepts of Docker logging: what Docker logging is, the Docker logging drivers, and why it is important to perform Docker logging when building containers.

That’s all for this article. In part 2, we will cover more advanced Docker logging concepts and best practices. See you in the next article.
