I recently had an issue where an overnight job that had a history of working successfully on a reliable basis suddenly started failing more often than being successful. We had recently imported a lot of data into the database (about a 10% increase across most tables) and had taken the opportunity to review and effect changes in the indexing on one key table. The system was working as expected, users were happy and all performance stats were meeting or exceeding baseline metrics. With this positive feedback, I could not believe that the indexing was an issue although I did run some analysis on contention that came up blank, just to make sure!

The error in the SQL Agent job was:

Msg -2, Level 11, State 0, Line 0 Timeout expired. The timeout period elapsed prior to completion of the operation or the server is not responding.

This put the pressure back on the index changes as being the cause, somehow the stats were so bad that queries were timing out? I scheduled the same index analysis to run concurrently with the over-night job to see if that was an issue and it came back clean, no contention at all but again the job timed out. As part of the job, there are points in the code that write out to a logfile as it reaches various stages. Using this output we easily identified the particular stage that was failing and investigated the T-SQL involved.

I do not have the exact table structure available here so from this point on we will be referencing a mock-up of the circumstances that I created on my laptop. There are two instances involved, one for OLTP and one for OLAP, and two databases, one that is effectively a data warehouse and the other supports the main CRM system. For our purposes, the OLTP instance on my laptop is named CRAGGYISLAND\PAPER holding the SIMPLETALK12 database and the OLAP instance is called CRAGGYISLAND\ROCK_2008 holding the SIMPLETALK database.

The problem TSQL was being executed on ROCK_2008 and was referencing a single table on PAPER as well as one local table. The code had two phases;

1 – to select row IDs from the SIMPLETALK12 database using a join between the two tables and then to use those rows in

2 – an update to alter one column in the table in SIMPLETALK12. The idea being that having the row IDs would speed up the update. Indeed, executing the SELECT collected the correct 40 rows consistently in less than 1 second.

In order to follow along you will need two code windows open in SSMS, each one connected to a different instance and have the instances configured as linked servers. You only actually need one linked server as we will be observing the OLAP instance connecting to the OLTP instance to collect and update the data.

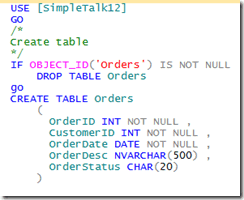

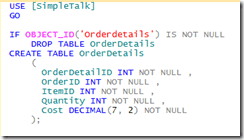

Create the OLAP table:

Create the OLTP table:

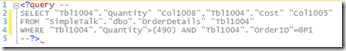

I used SQL Data Generator to insert 10,000 records in the SIMPLETALK12.dbo.Orders table on PAPER and 1,000,000 records in the SIMPLETALK.dbo.OrderDetails table on ROCK_2008. This gives me enough data that this TSQL

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

SELECT [o].[OrderID] , [o].[CustomerID] , [o].[OrderDate] , [o].[OrderDesc] , [od].[Quantity] , [od].[Cost] FROM [CRAGGYISLAND\PAPER].simpletalk12.[dbo].[Orders] AS o INNER JOIN [CRAGGYISLAND\ROCK_2008].[SimpleTalk].[dbo].[OrderDetails] AS od ON [o].[OrderID] = [od].[OrderID] WHERE [o].[OrderDate] > '20111031' AND [o].[OrderStatus] = 'Pending' AND od.[Quantity] > 490 |

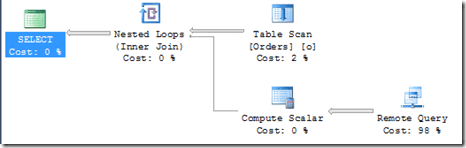

takes about 40s to complete and brings back one row and the query plan is:

apparently a lot of work happening within the remote query. From the OLAP instance there is no way to see how the OLTP instance is actually executing the code so we need to move to the other code window and, using the ever-useful sp_whoisactive, capture the running code. Luckily it takes a few seconds to complete so there is time to move to the other editor and execute this-

|

1 |

EXEC master.[dbo].[sp_WhoIsActive] @get_plans = 1; |

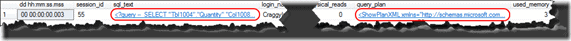

The results of this let us examine the executing TSQL and the query plan being used by simply clicking on the highlighted areas

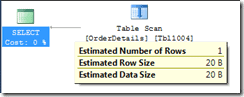

This shows the TSQL being executed on the OLTP instance is

with a query plan of

It’s a table scan but with an estimated row count of 1 so this seems reasonable.

Back to our investigation, it was clear that the SELECT wasn’t the cause of our problem so we ran the update on our test server and again captured the details of how the query was being executed on the remote(OLTP) instance.

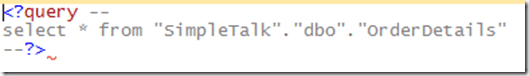

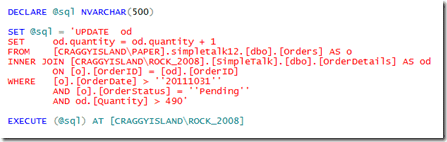

The code from the OLAP instance was

|

1 2 3 4 5 6 7 8 9 |

UPDATE od SET od.quantity = od.quantity + 1 FROM [CRAGGYISLAND\PAPER].simpletalk12.[dbo].[Orders] AS o INNER JOIN [CRAGGYISLAND\ROCK_2008].[SimpleTalk].[dbo].[OrderDetails] AS od ON [o].[OrderID] = [od].[OrderID] WHERE [o].[OrderDate] > '20111031' AND [o].[OrderStatus] = 'Pending' AND od.[Quantity] > 490 |

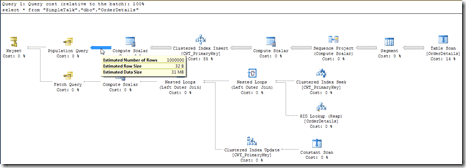

exactly the same predicate filter, simply updating one column to a new value but when we captured the TSQL on the OLTP instance it was a whole world of difference

No predicate to filter the rows and no column list to reduce the data collected and, according to the query plan all of this is coming off the OLTP disks and straight into a temporary table on the OLAP instance disks.

The left hand operator here seems to indicate a reduction in the rows being processed but looking at the actual plan on from the OLAP instance we see the 1M rows are present from the remote Query operator

Now, keep in mind that our live situation was on a table with many more columns and 10M rows and you can see why our query was timing out.

Well, that’s all very interesting but what is the solution?

In this instance we have employed a dynamic SQL solution and used a feature of the EXECUTE statement that I haven’t used before. You can direct the TSQL to execute at a remote location.

This change resulted in the query completing in under 10s rather than timing out on us – a substantial saving. Another option would be to try using OPENQUERY and then use the method that best suits your circumstances and makes life easier for your SQL Servers.

Load comments