How to build multiple database versions from the same source: object filters

How to use filters in SQL Compare to avoid deploying objects that exist only in the source to databases where they may not be required or appropriate.

I’ve worked with several companies who maintain databases in multiple data centers, each one holding the data for a particular region, and each database serving multiple applications.

Over time, for various business or legal reasons, it often becomes necessary to apply updates to certain individual data centers. The right, but hard, way to do this would be to make the required changes to a ‘master’ database, in version control, and then deploy them out to all data centers.

However, sometimes the engineers are just too busy keeping up with demand to find the time to do this, especially when the changes aren’t necessarily required in each data center. As a consequence, I often see that updates and fixes have been applied to some data centers but not to others. Over time, these data centers become out of sync with each other, with the ‘canonical source’ of the database in source control (if such a source exists).

Inevitably, there comes a time when these decisions, made in order to support a company’s pace of growth, come back to bite. Objects will exist in some databases, but not others. Objects will exist in multiple databases, but be different in each. As a result, managing change becomes difficult. Automated database deployments become too risky and complicated, so it will all be done manually, environment by environment, but that’s slow. Manual deployment processes don’t scale.

Last month, one such organization asked me for help. This article explains how we managed to re-establish a single source of truth, in source control, despite the fact the company had multiple different versions of the databases running in production. This was the company’s first step towards a credible Database Lifecycle Management (DLM) process to manage changes more reliably and efficiently.

It shows how to use filters to avoid deploying objects that exist only in the source to environments where they may not be required or appropriate. It assumes, for the time being, that all objects that exist in source and target are identical. My next article shows how to handle cases where they are not, and the third in the series shows how to build multiple database versions from the same source using pre-deploy migration scripts.

The solution I’ll demonstrate, while to some extent ‘hiding’ the problem, provides a path back towards automated, reliable database deployments, until it is possible to schedule in time to reconcile all environments fully. It also provides visibility of the state of the inconsistencies, which will help developers/DBAs to avoid making the problem worse. It should also help when refactoring the production databases back to a standard version.

Step 1 – Work out what sort of differences exist between databases in different environments

To help demonstrate the concepts, I’ll use my little example database, ponies. This database is called Twilight Sparkle in one environment, and Given in another. In each environment, the database has its own unique customizations. In a subsequent article, I’ll discuss how to scale this solution to more than two databases.

We start with Twilight Sparkle and Fluttershy already created with some differences. We do not yet have a ‘master’ development database (see step 3). To create Twilight Sparkle and Fluttershy for yourself, execute this script against a development SQL Server instance.

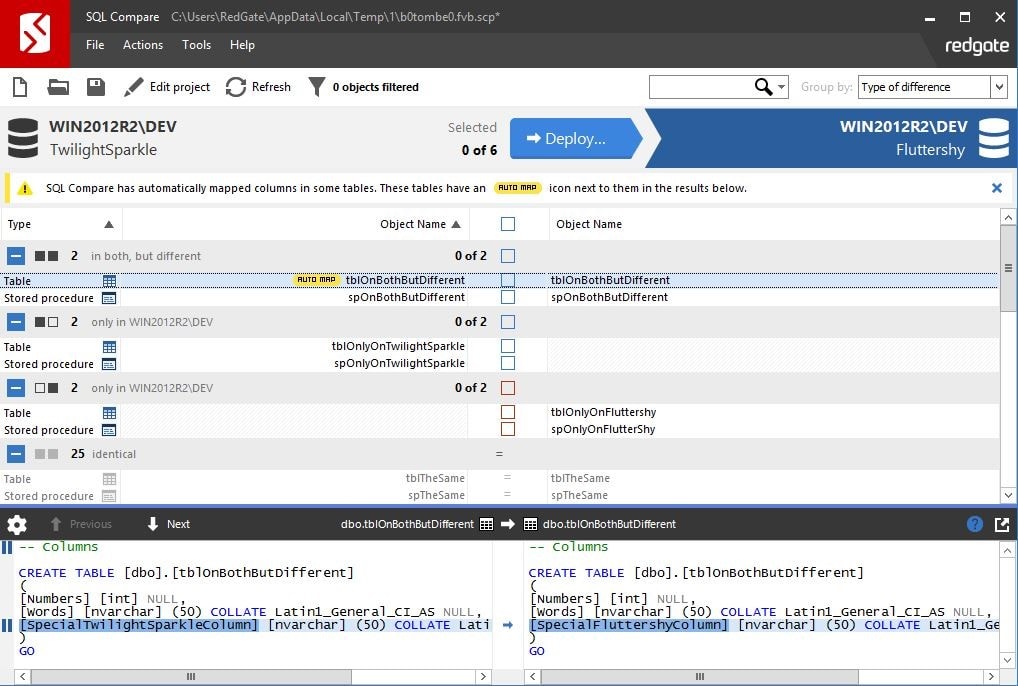

After that, the first simple step is to run SQL Compare between the two databases and look at the results. Figure 1 shows the results for a comparison between Twilight Sparkle and Fluttershy.

Figure 1

SQL Compare tells us that:

- Some objects exist in both

FluttershyandTwilight Sparkle, but are different - Some objects exist only in

Twilight Sparkle - Some objects exist only in

Fluttershy - Some objects are identical

We can ignore the stuff that is identical. This leaves object differences that we can split into two classes:

- Exist only in one environment

- Exist in both environments, but are different

Step 2 – Sync up what you can

Often you will find that many of the ‘exists in both but different’ class of differences will be easy to fix. For example, you may find users and roles that should be in sync aren’t. Are some stored procedures different only due to comments? Before trying to solve hard problems, spend some time looking for easy fixes with SQL Compare and sync up what you can. The fewer differences between the databases the easier this is going to be.

Make a special effort to get your tables in sync, wherever possible, because table differences make deployments much more complicated to manage reliably, as we’ll discuss in a subsequent article.

For example, perhaps tables are showing as different simply because the table column orders don’t match; if so fix it. Perhaps a column exists in a table in one environment but not in the same table in another. Where possible it’s generally best to deploy an empty column to a table to bring the schemas in sync, even if the column won’t be used.

Step 3 – Create a master dev environment

When you have objects that exist in a database in one environment, but not in the same database in a second environment, then you probably want all the objects in source control, but you want to filter them out at deployment time.

First, pick a master database, typically the most important one, the one which you most often treat as the source of truth, or perhaps the one that changes most frequently. For example, if you were to create a new environment you might start by copying an existing environment. The database in whichever environment you would copy should probably be your master database.

In your dev environment, create an empty copy of your master database. I’ll use Twilight Sparkle for my master database (she is the Ruler of the Castle of Friendship after all), and I’ll call my dev database Ponies_dev.

Using SQL Compare, run a comparison between Twilight Sparkle (the source database) and Ponies_dev (the target database), and deploy all the objects to Ponies_dev.

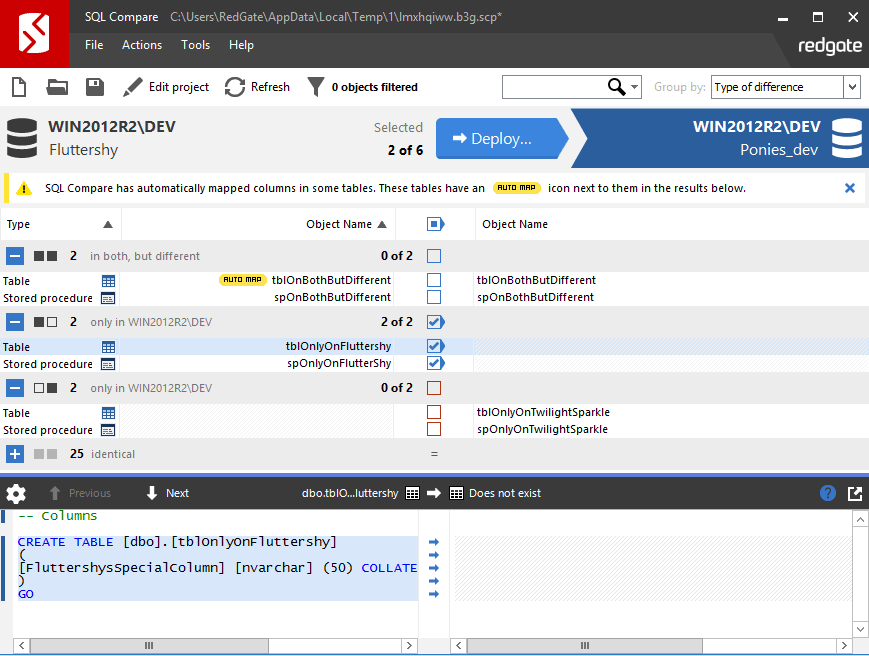

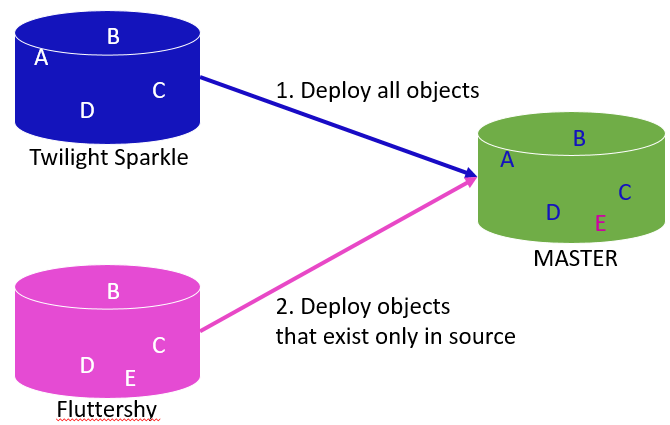

Next, run a comparison between Fluttershy and Ponies_dev, and deploy to Ponies_dev the database objects that exist only in Fluttershy, as shown in Figure 2.

Figure 2

The result should be a Ponies_dev database that includes all the objects that appear in either database. Where there are still objects that exist in both environments, but are different, then Ponies_dev will have the master version of each of those objects (as they exist in Twilight Sparkle).

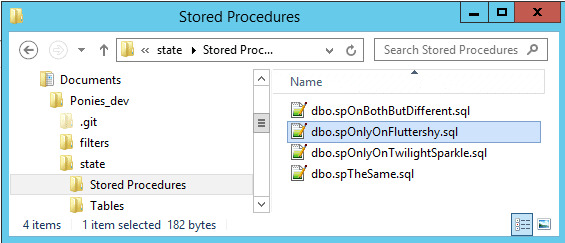

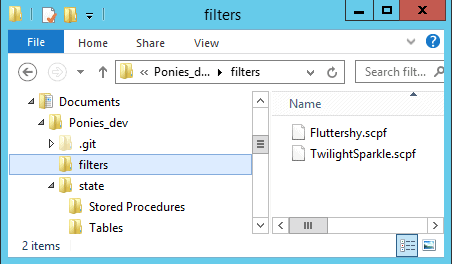

We can add the Ponies_dev database to version control using Redgate SQL Source Control, but ensure that you do not save your database scripts in the source control root because we’ll be adding more scripts to source control later and we want to keep them separate from the scripts maintained by SQL Source Control. I’ve created a local Git repository called Ponies_dev in My Documents. My database scripts are saved in a sub-directory: Documents/Ponies_dev/state.

Figure 3

Step 4 – Use SQL Compare filters to control which objects to deploy where

Figure 4 shows a simplified depiction of what we’ve achieved so far.

Figure 4

For this article, we’re going to assume that in Step 2 we managed to reconcile any differences between objects that exist in multiple environments but are different in each. In Figure 4, this means that objects B, C and D are now identical in all environments. Therefore, if we subsequently modify object B in the ‘master’ development database, we know exactly what changes SQL Compare should make to each target database to which we wish to deploy that change.

Of course, if object B is different in different environments, then when you use ponies_dev or source control as the source for your deployments, you will always deploy the master (Twilight Sparkle) version, which is almost certainly not what you want. We’ll deal with this sort of problem in the next article.

Given that we’re assuming all objects that exist in each environment are the same, the only issue to consider is how to avoid deploying objects that were not intended for the target environment. There are a couple of possible reasons you’d want to avoid this:

- Dependencies on ‘exists in both but different’ objects mean some objects can’t be deployed in some environments

- It may not be possible to deploy certain functionality to certain environments, for geographic, legal or business/feature reasons

- Certain functionality may be part of a ‘pro’ version of the software, meaning it shouldn’t be deployed to clients who own the standard version

- Some custom objects might refer to private information regarding a particular client that is not appropriate or relevant to other clients

In Figure 4, let’s say we never want to deploy object A to Fluttershy, nor object E to Twilight Sparkle. We can achieve this simply using SQL Compare filters. We’ll save the filters in source control.

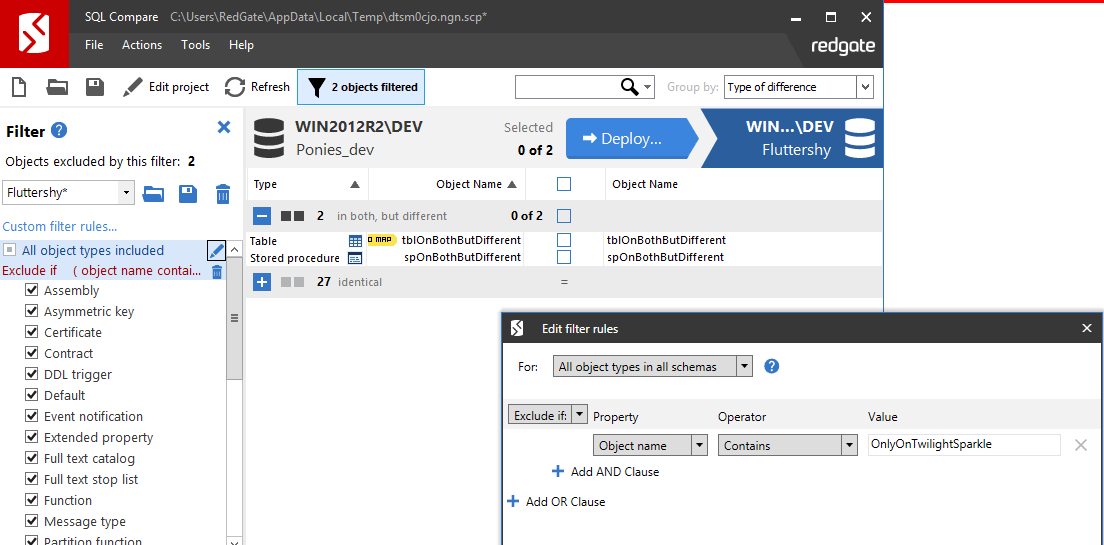

Fire up SQL Compare again and compare ponies_dev to Fluttershy. Create a filter to exclude all the objects that exist only in ponies_dev. Establishing a sensible naming convention may simplify your filters and help you to keep track of where objects are intended to be deployed.

Figure 5

Save a copy of this filter file as Fluttershy.scpf, since it’s a filter for deployments only to Fluttershy, and add it to source control in the directory Documents/Ponies_dev/filters. Next, compare ponies_dev to Twilight Sparkle, filter out objects that only exist in ponies_dev, call it TwilightSparkle.scpf and save it to the same directory.

Figure 6

Step 5 – Deploy the database changes

Now you can build or deploy this database from source control using the SQL Compare GUI, the SQL Compare command line or DLM Automation. If you reference the appropriate filter file, you will end up with a database that has only the objects appropriate for that environment.

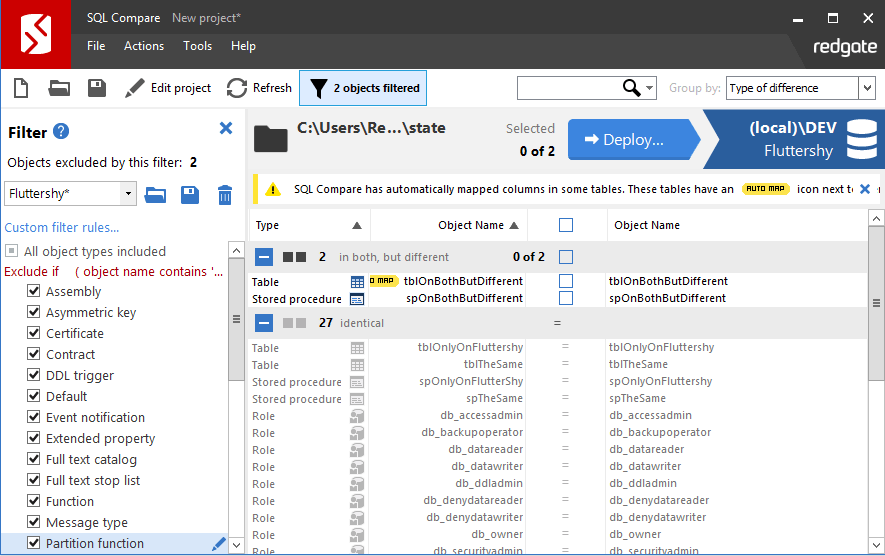

Using SQL compare GUI

You can simply deploy the changes to each environment directly from the SQL Compare GUI (full docs here) by referencing your filter file. Figure 7 shows deploying from master to Fluttershy, referencing the appropriate filter file. Remember that if some objects exist in both target and master but are different, these will be overwritten. Solving this problem will be explained in my next article.

Figure 7

Using the SQL Compare command line

A much better plan is to automate the deployments using the command line version of SQL Compare (full docs here). After all, our ultimate goal here is to beat a path back to automated, reliable deployments to all environments. We can use the SQL Compare command line tool in a batch script, or call it from PowerShell. Feodor Georgiev’s article explains how to do that. For our example, the command (free from the niceties of error handling, logging and so on), would look like something this:

|

1 2 |

sqlcompare /scr1=Documents/Ponies_dev/state /db2=TwilightSparkle /ftr=Documents\Ponies_dev\filters\TwilightSparkle.scpf /sync |

Using DLM Automation PowerShell cmdlets

Probably the ideal way to automate the deployment, however, is using the PowerShell cmdlets that come with DLM Automation, which comes with the SQL Toolbelt (full docs here). For example, the DLM Automation cmdlets can be used in combination with NuGet packages and release management tools to facilitate full blown release management with fancy change reports, approval gates and production drift alerts. For now, however, the following script will carry out a straightforward sync using auto-generated code:

Conclusions and next steps

This article started to tackle the problem of deploying different versions of the same database from a single source. We created a ‘master’ development copy of the database in source control, which contained all the objects. We set up SQL Compare filters to ensure that only the intended objects were deployed to each target environment.

The solution, so far, assumes all objects that exist in both the source and the target environment are identical. In my next article, I show how to use post-deployment scripts to deal with deployments where code objects are different in source and target, to avoid causing unintentional changes to a target object, which could result in failed deployments or even worse, data loss. The third in the series shows how to build multiple database versions from the same source using pre-deploy migration scripts.