Creating large complex objects exacts a toll on computing resources. When these objects can be shared, skipping their recreation becomes an enviable performance goal. Over the years, many solutions have come to the fore for caching objects. When all the consumers reside on the same physical machine, a not so well-known option, .NET’s MemoryMappedFile, may deliver a performance boon.

This article discusses a few MemoryMappedFile concepts as well as implements a simple caching application using it.

Cache Concerns

Caching objects for multiple concerns is not a new idea. The goals are simple: avoid recreating an object and ensure it can be shared. Several well-known caching solutions exist, such as memcached and redis, that accomplish these objectives. They also suffer from similar performance challenges – serialization and network throughput.

Serializing and deserializing objects into a generic format amenable to most caching technologies, such as BSON, JSON or XML, consumes considerable time and computing resources. Passing the properly formatted objects between nodes requires bandwidth and time.

What if your caching needs are local to one node? For example, imagine a server hosting several web applications and Windows Services using the same catalog object. Why not just create the catalog once and share it via files or Interprocess Communications (IPC)? You then minimize the impact of the more common caching performance culprits.

It turns out that .NET provides the required magic for constructing a local, high-performance cache which this article will now explore.

Memory-Mapped Files

Memory-mapped files are not new. For over 20 years, the Windows operating system allowed applications to map virtual addresses directly to a file on disk thereby allowing multiple processes to share it. File-based data looked and, more importantly, performed like system virtual memory. There was another benefit. Memory-mapped files allowed applications to work with objects potentially exceeding their working memory limits.

For much of their history, memory-mapped files suffered from a problem: they required unmanaged code. .NET Framework 4 changed that; the new System.IO.MemoryMappedFiles namespace simplified mapping of files to an application’s logical address space. Maybe even more astonishingly, it did so with only a few significant classes.

- MemoryMappedFile – representation of a memory-mapped file

- MemoryMappedFileSecurity – permissions for a memory-mapped file

- MemoryMappedViewAccessor – randomly accessed view of a memory-mapped file

- MemoryMappedViewStream – sequentially accessed view of a memory-mapped file (the stream-based alternative to MemoryMappedViewAccessor)
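
To make the roles of the classes listed above concrete, here is a minimal sketch that writes bytes through a MemoryMappedViewStream and reads them back through a MemoryMappedViewAccessor. The class name MappedFileSketch and the RoundTrip method are hypothetical and are not part of the article's solution; the map is created without a name, and the text is assumed to be non-empty because a new map needs a capacity greater than zero.

```csharp
using System.IO;
using System.IO.MemoryMappedFiles;
using System.Text;

// Hypothetical helper, for illustration only.
public static class MappedFileSketch
{
    // Writes text into a file-backed map, then reads it back through a second view.
    // Assumes text is non-empty: a new map requires a capacity greater than zero.
    public static string RoundTrip(string filePath, string text)
    {
        byte[] payload = Encoding.UTF8.GetBytes(text);

        // mapName is null, so the map itself is unnamed; the file on disk is the backing store.
        using (var mappedFile = MemoryMappedFile.CreateFromFile(
            filePath, FileMode.Create, null, payload.Length))
        {
            // Sequential access: write the bytes through a stream view.
            using (var stream = mappedFile.CreateViewStream())
            {
                stream.Write(payload, 0, payload.Length);
            }

            // Random access: read the same bytes back by position through an accessor view.
            using (var accessor = mappedFile.CreateViewAccessor())
            {
                var buffer = new byte[payload.Length];
                accessor.ReadArray(0, buffer, 0, buffer.Length);
                return Encoding.UTF8.GetString(buffer);
            }
        }
    }
}
```

Calling MappedFileSketch.RoundTrip with a path under Path.GetTempPath() is an easy way to try it without touching the demo's files.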

Combining the performance needs of a local caching mechanism with the capabilities of memory-mapped files promises an auspicious marriage. The next discussion explores one such solution.

Basic Implementation

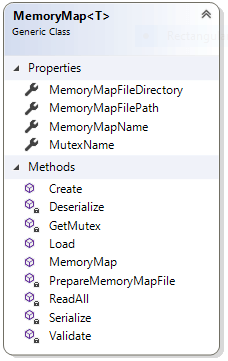

This demonstration revolves around one generic class, MemoryMap. It supports a cache that allows for creating and loading a serializable object. Its public face contains a few read-only properties and three public members to achieve this end:

- MemoryMap – constructor with a string parameter that serves as instance identifier

- Create – creates the memory-mapped file with the provided object data

- Load – returns the object stored in the underlying memory-mapped file

Solution Overview

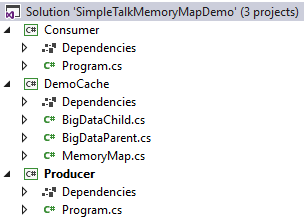

The Visual Studio 2017 solution, SimpleTalkMemoryMapDemo.sln, contains the three .NET Core 2.0 projects listed below. The source code is available on GitHub.

- DemoCache – class library containing the earlier noted MemoryMap class along with two plain old CLR object (POCO) classes, BigDataChild and BigDataParent, for sharing.

- Producer – console project referencing DemoCache which creates, loads and reads a BigDataParent.

- Consumer – console project referencing DemoCache which loads an instance of BigDataParent.

NOTE: To improve code readability, using statements were omitted and full class names skipped. The source code includes the required using statements.

MemoryMap

Using the memory-mapped file cache begins with creating an instance of MemoryMap. The required memoryMapName parameter serves several functions: it names the MemoryMappedFile instance, the supporting Mutex, and the backing file.

```csharp
public string MemoryMapName { get; }
public string MemoryMapFileDirectory { get; }
public string MemoryMapFilePath { get; }
public string MutexName { get; }

public MemoryMap(string memoryMapName)
{
    Validate(memoryMapName);
    MemoryMapName = memoryMapName;
    MutexName = $"{MemoryMapName}-Mutex";
    MemoryMapFileDirectory = "c:\\temp";
    MemoryMapFilePath = Path.Combine(
        MemoryMapFileDirectory, memoryMapName);
}
```

Once instantiated, working with a MemoryMappedFile begins with Create.

```csharp
public void Create(T data)
{
    if (!data.GetType().IsSerializable)
        throw new ArgumentException(
            "Type is not serializable.", nameof(data));
```

It’s important to remember that the data parameter must be serializable. Otherwise, Create will very quickly inform you via an exception. The next line calls upon the insightfully named private method Serialize to convert the data into bytes. This and the other helper methods will be explored later on in the discussion.

```csharp
    var objectBytes = Serialize(data);
```

Outputting the number of bytes the data consumes was not required, but aren’t you curious?

```csharp
    Console.WriteLine(
        $"Save data's byte count: {objectBytes.Length.ToString("N0")}");
```

Since the underlying MemoryMappedFile object could be accessed by different threads and processes, the best way to minimize pitfalls is by restricting access via an interprocess synchronization primitive. GetMutex, another helper, does the work.

```csharp
    var mutex = GetMutex(MutexName);
```

Some prophylaxis is required before enlisting the MemoryMappedFile’s underlying physical file.

```csharp
    PrepareMemoryMapFile(MemoryMapFileDirectory, MemoryMapFilePath);
```

Finally, the moment has arrived – the actual creation of the MemoryMappedFile. It is worth noting that the implementation below is only one of many possible implementations. Whatever the implementation, though, a view of the file, here a MemoryMappedViewStream, is required to persist bytes.

```csharp
    using (var memoryMappedFile = MemoryMappedFile.CreateFromFile(
        MemoryMapFilePath, FileMode.CreateNew, MemoryMapName, objectBytes.Length))
    {
        using (var memoryMappedViewStream = memoryMappedFile.CreateViewStream())
        {
            memoryMappedViewStream.Write(objectBytes, 0, objectBytes.Length);
        }
    }
    mutex.ReleaseMutex();
}
```

Accessing a MemoryMappedFile instance’s data is the job of Load. Unsurprisingly, it parallels Create, except that it now reads bytes and focuses on deserializing them.

```csharp
public T Load()
{
    using (var memoryMappedFile = MemoryMappedFile.CreateFromFile(
        MemoryMapFilePath, FileMode.Open, MemoryMapName))
    {
        T obj;
        var mutex = GetMutex(MutexName);
        using (var memoryMappedViewStream =
            memoryMappedFile.CreateViewStream())
        {
            var binaryReader = new BinaryReader(memoryMappedViewStream);
```

Two helpers, ReadAll and Deserialize, finish the job: ReadAll pulls the bytes from the memoryMappedViewStream object through a .NET BinaryReader, and Deserialize converts the resulting byte array into an object.

```csharp
            obj = Deserialize(ReadAll(binaryReader));
        }
        mutex.ReleaseMutex();
        return obj;
    }
}
```

Before leaving the discussion of the core MemoryMap methods, it’s nice to know that a file-based store is not the only option for a cache. System.IO.MemoryMappedFiles also supports a memory-based store instead of physical files. This alternative, created through MemoryMappedFile.CreateNew or CreateOrOpen rather than CreateFromFile, is not explored in this article and offers its own pluses and minuses. For example, while potentially faster, it is comparatively limited in size and requires an active process to keep it alive.
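
For readers curious about the memory-based store, the following is a minimal sketch that uses MemoryMappedFile.CreateNew. The NonPersistedMapSketch class is hypothetical and not part of the demo solution. Passing a non-null map name would let another process attach with MemoryMappedFile.OpenExisting, but named maps are a Windows feature, so the sketch also accepts null for an unnamed map. The text is assumed to be non-empty.

```csharp
using System.IO.MemoryMappedFiles;
using System.Text;

// Hypothetical helper, for illustration only.
public static class NonPersistedMapSketch
{
    // Creates a map backed by system memory rather than a file on disk,
    // writes text into it, and reads the text back while the map is alive.
    // mapName may be null for an unnamed map; text must be non-empty.
    public static string RoundTrip(string mapName, string text)
    {
        byte[] payload = Encoding.UTF8.GetBytes(text);

        using (var mappedFile = MemoryMappedFile.CreateNew(mapName, payload.Length))
        using (var accessor = mappedFile.CreateViewAccessor())
        {
            accessor.WriteArray(0, payload, 0, payload.Length);

            var buffer = new byte[payload.Length];
            accessor.ReadArray(0, buffer, 0, buffer.Length);
            return Encoding.UTF8.GetString(buffer);
        }
        // Once the last handle is disposed, the contents are gone,
        // which is why some process has to keep the map open.
    }
}
```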

Time to consider the lowly helpers facilitating Create and Load.

Validate

MemoryMap leans heavily on the memoryMapName parameter with which it is instantiated. For example, MemoryMappedFile objects created from a file demand a physical file, and memoryMapName becomes part of its path. Therefore, the Validate method tries to safeguard that memoryMapName will satisfy Windows’ file naming expectations.

```csharp
private static void Validate(string memoryMapName)
{
    if (string.IsNullOrWhiteSpace(memoryMapName))
        throw new ArgumentNullException(nameof(memoryMapName));
    // IndexOfAny returns -1 when nothing matches, so compare with >= 0;
    // a > 0 test would miss an invalid character in the first position.
    if (memoryMapName.IndexOfAny(Path.GetInvalidPathChars()) >= 0)
        throw new ArgumentException(
            $"{memoryMapName} contains invalid characters.");
}
```

The importance of memoryMapName goes beyond file naming. As you will soon see, MemoryMap uses it for managing locks and accessing MemoryMappedFile instances.
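
Because memoryMapName ends up as a file name rather than a full path, a stricter check could be built on Path.GetInvalidFileNameChars, which also rejects directory separators. The sketch below is an assumption about how such a check might look; NameRules and IsValidName are hypothetical names, and the set of invalid characters depends on the operating system.

```csharp
using System.IO;

// Hypothetical helper, for illustration only.
public static class NameRules
{
    // Returns true when the name is non-blank and contains no character
    // that the current platform forbids in a file name.
    public static bool IsValidName(string memoryMapName)
    {
        if (string.IsNullOrWhiteSpace(memoryMapName))
            return false;

        // IndexOfAny returns -1 when no character matches, and 0 when the
        // very first character matches, so "no match" means a negative result.
        return memoryMapName.IndexOfAny(Path.GetInvalidFileNameChars()) < 0;
    }
}
```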

File Hygiene

Since MemoryMap saves its data to a physical file, it is important to ensure it can do so without issue. That translates into checking that a directory exists, and the file does not.

```csharp
private static void PrepareMemoryMapFile(
    string fileDirectory, string filePath)
{
    if (!Directory.Exists(fileDirectory))
        Directory.CreateDirectory(fileDirectory);
    if (File.Exists(filePath))
        File.Delete(filePath);
}
```

The first check ensures that the target directory, c:\temp in this demonstration, exists. The second deletes any preexisting version of the physical file with the same name.

Managing Contention

Avoiding conflict and corruption with multiple data readers and writers demands attention. This application leverages Mutexes in a fashion some readers might find worthwhile, even in a production implementation.

Two points merit mention. The use of the perennial C# favorite lock keyword is not adequate. It only handles multiple threads within the same process. MemoryMap must manage sharing conflicts between multiple processes on the server. Naming the mutex instance is the other key idea. Doing so exposes it to all processes on the operating system.

Warning: Mutex naming demands thoughtful consideration. For example, if names aren’t unique, one mutex could unintentionally lock unrelated resources.

The GetMutex helper handles the creation of a mutex. For our purposes, that only occurs when creating and loading data. If other avenues to the data existed, such as update and delete methods, the same basic logic should suffice.

```csharp
private Mutex GetMutex(string mutexName)
{
```

The first branch handles the case where the named mutex already exists; the else branch creates it when it does NOT. Either way, the calling thread ends up owning the mutex: immediately when it creates the mutex, or once WaitOne returns.

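```csharp
    // Use the mutexName parameter rather than the MutexName property
    // so the helper honors the name it is given.
    if (Mutex.TryOpenExisting(mutexName, out Mutex mutex))
    {
        mutex.WaitOne();
    }
    else
    {
        // Request initial ownership; if another process created the mutex
        // first, mutexCreated is false and we must wait for it.
        mutex = new Mutex(true, mutexName, out bool mutexCreated);
        if (!mutexCreated)
            mutex.WaitOne();
    }
    return mutex;
}
```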

Waiting for a lock to clear is not without drawbacks. I doubt a production ready caching solution will find that very satisfying, a subject discussed later in the article.
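
One way to soften that drawback is to wait with a timeout and to release the mutex in a finally block so an exception cannot leave it locked. The sketch below is an assumption about how that could look and is not the demo's GetMutex; MutexSketch and TryRunLocked are hypothetical names.

```csharp
using System;
using System.Threading;

// Hypothetical helper, for illustration only.
public static class MutexSketch
{
    // Runs action while holding the named mutex.
    // Returns false if the mutex could not be acquired within timeout.
    public static bool TryRunLocked(string mutexName, TimeSpan timeout, Action action)
    {
        using (var mutex = new Mutex(false, mutexName))
        {
            bool acquired;
            try
            {
                acquired = mutex.WaitOne(timeout);
            }
            catch (AbandonedMutexException)
            {
                // A previous owner exited without releasing the mutex;
                // the wait still succeeded and this thread now owns it.
                acquired = true;
            }

            if (!acquired)
                return false;

            try
            {
                action();
                return true;
            }
            finally
            {
                // Always release, even if action throws.
                mutex.ReleaseMutex();
            }
        }
    }
}
```

A caller can then decide what a failed acquisition means, for example rebuilding the object locally instead of blocking indefinitely.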

Reading & Writing Data

When working through MemoryMappedFile mechanics, it is easy to forget the importance of reading and writing the data. Any solution’s success hinges on its implementation. This one, once again, takes a simple, albeit understandable tack.

First, MemoryMap converts a serializable object of type T to an array of bytes for writing to a file. Serialize relies upon .NET’s BinaryFormatter as shown below. Such ease of use comes at a price, though. For example, it’s limited to about 6 MB of data, which is acceptable for demonstration purposes but not for production. BinaryFormatter is also unsafe with untrusted input and has been marked obsolete in later .NET releases.

```csharp
private static byte[] Serialize(T obj)
{
    using (var memoryStream = new MemoryStream())
    {
        var binaryFormatter = new BinaryFormatter();
        binaryFormatter.Serialize(memoryStream, obj);
        return memoryStream.ToArray();
    }
}
```

Reading the data from MemoryMappedFile requires two helpers. ReadAll obtains the object’s raw bytes in chunks from the .NET BinaryReader.

```csharp
private static byte[] ReadAll(BinaryReader binaryReader)
{
    const int bufferSize = 4096;
    using (var memoryStream = new MemoryStream())
    {
        byte[] buffer = new byte[bufferSize];
        int count;
        while ((count = binaryReader.Read(buffer, 0, buffer.Length)) != 0)
            memoryStream.Write(buffer, 0, count);
        return memoryStream.ToArray();
    }
}
```

Deserialize mirrors Serialize, except this time it converts a byte array to an object of type T.

```csharp
private static T Deserialize(byte[] data)
{
    using (var memoryStream = new MemoryStream(data))
    {
        var binaryFormatter = new BinaryFormatter();
        return binaryFormatter.Deserialize(memoryStream) as T;
    }
}
```

Trying It Out

You can experiment with the demonstration caching solution via two console applications. The first, Producer, runs a complete use case of creating test data, BigDataParent, loading it into cache, and then reading it from cache. The second, Consumer, assumes the data already exists and only loads it.

The Producer project contains the code creating the test data via the CreateBigDataParent helper as shown below.

```csharp
private static BigDataParent CreateBigDataParent(int count)
{
    var random = new Random();
```

Admittedly, what adding randomized BigDataChild objects lacks in realism, it hopefully makes up in demonstration value. Readers may find experimenting with BigDataChild and BigDataParent an easy way to test out their changes to MemoryMap.

```csharp
    var bigDataChildList = new List<BigDataChild>();
    for (var i = 0; i < count; i++)
        bigDataChildList.Add(
            new BigDataChild
            {
                Id = random.Next(0, 100),
                SomeDouble = random.NextDouble(),
                SomeString = string.Empty.PadLeft(random.Next(1, 1000), 'x')
            });
    return new BigDataParent
    {
        Description = $"BigDataParent with {count} BigDataChild",
        BigDataChildren = bigDataChildList
    };
}
```

One Process

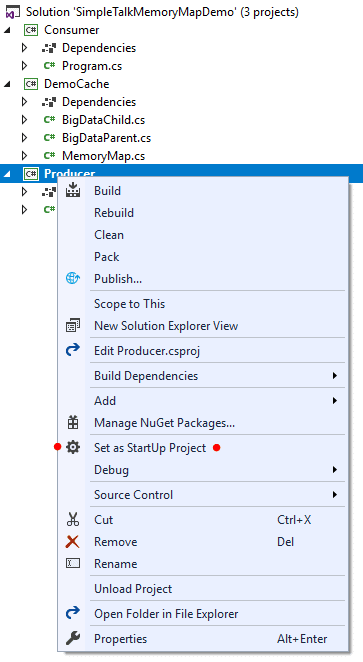

The solution allows for testing MemoryMap within a single process by setting the Producer project to the solution’s startup as shown below.

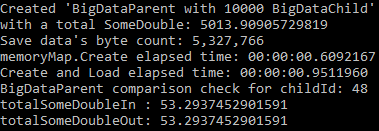

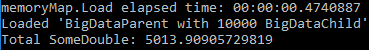

Clicking the debug button or pressing F5 should produce output similar to that shown below. Exiting debug requires pressing any key in the console.

Inspecting the output suggests that the handiwork was not in vain. Not only do the ‘in’ and ‘out’ objects match based on the total of SomeDouble values (53.2937452901591) for children with the same id, but caching required less than a second to handle 5 megabytes of data.

The code creating the above resides in the Program.cs Main method.

```csharp
static void Main()
{
```

The demonstration begins with creating a test object for loading into MemoryMap.

```csharp
    var sampleBigDataIn = CreateBigDataParent(10000);
    Console.WriteLine(
        $"Created '{sampleBigDataIn.Description}'");
    Console.WriteLine($"with a total SomeDouble: {sampleBigDataIn.BigDataChildren.Sum(x => x.SomeDouble)}");
```

Since performance drives this effort, it seems like a good idea to check timing to see how long it takes to manage sampleBigDataIn.

```csharp
    var stopWatch = new Stopwatch();
    stopWatch.Start();
```

As most readers probably guessed from the earlier discussion, the "SomeKey" argument passed to the MemoryMap constructor uniquely identifies it on this server. It literally serves as the key to this specific instance of a BigDataParent object.

```csharp
    var memoryMap = new MemoryMap<BigDataParent>("SomeKey");
    memoryMap.Create(sampleBigDataIn);
    Console.WriteLine(
        $"memoryMap.Save elapsed time: {stopWatch.Elapsed}");
```

In a real-world application, code located elsewhere requiring the test object would be executing at this point. For the purposes of this demonstration, though, simply retrieving sampleBigDataOut right away works best.

```csharp
    var sampleBigDataOut = memoryMap.Load();
    Console.WriteLine($"memoryMap.Load elapsed time: {stopWatch.Elapsed}");
    stopWatch.Stop();
```

The final several lines serve to help prove that what went into MemoryMap<BigDataParent>("SomeKey") came out.

```csharp
    var childId = sampleBigDataIn.BigDataChildren[0].Id;
    Console.WriteLine($"BigDataParent comparison check for childId: {childId}");
    var totalSomeDoubleIn = sampleBigDataIn.BigDataChildren
        .Where(x => x.Id.Equals(childId)).Sum(x => x.SomeDouble);
    Console.WriteLine($"totalSomeDoubleIn : {totalSomeDoubleIn}");
    var totalSomeDoubleOut = sampleBigDataOut.BigDataChildren
        .Where(x => x.Id.Equals(childId)).Sum(x => x.SomeDouble);
    Console.WriteLine($"totalSomeDoubleOut: {totalSomeDoubleOut}");
```

Finally, exit Main from the console window.

```csharp
    Console.ReadKey();
}
```

The next scenario simulates how another process might access the instance of BigDataParent just created.

Two Processes

After running Producer, switching the startup project to the Consumer project and executing it simulates accessing the cached BigDataParent object from a second process. The output of the Consumer project’s Main method allows for a simple check that you have in fact loaded the correct data.

Comparing the total SomeDouble values in the Producer and Consumer output, 5013.90905729819 in both, suggests the projects are sharing the same instance of BigDataParent.

```csharp
static void Main()
{
    var stopWatch = new Stopwatch();
    stopWatch.Start();
```

Again, how you instantiate MemoryMap matters. The text SomeKey uniquely defines the instance.

```csharp
    var memoryMap = new MemoryMap<BigDataParent>("SomeKey");
    var sampleBigData = memoryMap.Load();
    Console.WriteLine(
        $"memoryMap.Load elapsed time: {stopWatch.Elapsed}");
    Console.WriteLine(
        $"Loaded '{sampleBigData.Description}'");
    Console.WriteLine(
        $"Total SomeDouble: {sampleBigData.BigDataChildren.Sum(x => x.SomeDouble)}");
```

As before, exit Main from the console window.

```csharp
    Console.ReadKey();
}
```

Before declaring this two-process demonstration complete, discerning readers may rightfully point out that the two processes never ran concurrently. To them, I’d recommend experimenting with running multiple instances of the demonstration solution simultaneously.

Going Forward

Crafting a local, custom caching solution based on memory-mapped files demands vigilance. With each new feature, it is worth asking whether an existing, full-featured solution would be a better investment of developer time. With that warning in mind, several MemoryMap enhancements seem likely for different production use cases.

Updates

As currently coded, MemoryMap equates to a read-only cache. That shortfall could be addressed by adding an update method. The method signatures below suggest a few possible implementations.

```csharp
public void Update(T data)
public void Update(T data, bool overwrite)
public bool TryUpdate(out T data)
```

The big challenge facing a developer is whether to simply overwrite the entire object or just the differences. While working with ‘changes only’ may prove faster, it also demands careful byte accounting.
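
As a small taste of that byte accounting, the sketch below stores a four-byte length header ahead of the payload so a reader knows exactly how many bytes are meaningful; a view opened over a whole map may be larger than the data written, since views are handed out in page-sized units. LengthPrefixedMap and its methods are hypothetical and not part of the demo, and the header layout is an assumption made for illustration.

```csharp
using System.IO;
using System.IO.MemoryMappedFiles;

// Hypothetical helper, for illustration only.
public static class LengthPrefixedMap
{
    // Assumed layout: [int payloadLength][payload bytes].
    private const int HeaderSize = sizeof(int);

    public static void Write(string filePath, byte[] payload)
    {
        using (var mappedFile = MemoryMappedFile.CreateFromFile(
            filePath, FileMode.Create, null, HeaderSize + payload.Length))
        using (var accessor = mappedFile.CreateViewAccessor())
        {
            accessor.Write(0, payload.Length);                         // header
            accessor.WriteArray(HeaderSize, payload, 0, payload.Length); // body
        }
    }

    public static byte[] Read(string filePath)
    {
        using (var mappedFile = MemoryMappedFile.CreateFromFile(filePath, FileMode.Open))
        using (var accessor = mappedFile.CreateViewAccessor())
        {
            // Read only as many bytes as the header says were written.
            int length = accessor.ReadInt32(0);
            var payload = new byte[length];
            accessor.ReadArray(HeaderSize, payload, 0, length);
            return payload;
        }
    }
}
```

A partial update would extend the same idea: the header would need to record offsets and lengths for each region that can change independently.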

Key Management

MemoryMap manages instances with a user-provided string in the constructor. While intuitive for demonstration purposes, it lends itself to errors. For example, allowing any value for what purports to be the same instance almost guarantees different instances between users.

Requirements will likely drive the design of some form of key management. For simple use cases, names based on shared constants as shown might work well enough.

```csharp
public class KeyNames
{
    public const string Users = "Users";
    public const string CurrentCatalog = "CurrentCatalog";
    public const string ContactEmails = "ContactEmails";
}
```

One intriguing possibility might be caching keys within its own MemoryMap instance. (OK, I digress. Didn’t I say it’s all about requirements?)

Locking

The locking scheme employed to share resources is blunt. Opportunities exist for enhancing it, such as locking exclusively only for writes while letting readers proceed together. Developers experienced with multithreaded applications, though, might rightfully get nervous with gratuitous cleverness.

File Management

Let’s be honest, saving data to a temp directory on the root drive is not too clever. Expect any production version of MemoryMap to explore other, more robust, secure, enterprise-specific options. For example, deleting orphaned data files on a long-running server instance strikes one as an inevitable feature.
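
A first step in that direction might look like the sketch below, which derives a directory from Path.GetTempPath and removes files that have not been written recently. CacheFileJanitor, the "MemoryMapCache" folder name, and the age-based rule are all assumptions made for illustration, not features of the demo.

```csharp
using System;
using System.IO;

// Hypothetical helper, for illustration only.
public static class CacheFileJanitor
{
    // Assumed location: a subfolder of the current user's temp directory.
    public static string DefaultDirectory =>
        Path.Combine(Path.GetTempPath(), "MemoryMapCache");

    // Deletes files in directory whose last write is older than maxAge.
    // Returns the number of files removed.
    public static int DeleteStaleFiles(string directory, TimeSpan maxAge, DateTime utcNow)
    {
        if (!Directory.Exists(directory))
            return 0;

        var removed = 0;
        foreach (var filePath in Directory.GetFiles(directory))
        {
            if (utcNow - File.GetLastWriteTimeUtc(filePath) <= maxAge)
                continue; // still fresh

            try
            {
                File.Delete(filePath);
                removed++;
            }
            catch (IOException)
            {
                // Possibly still open or mapped by another process; retry on a later pass.
            }
            catch (UnauthorizedAccessException)
            {
                // Not permitted to delete this file; skip it.
            }
        }
        return removed;
    }
}
```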

Serialization

How MemoryMap manages serialization constitutes the biggest implementation challenge for any practical caching solution. The sample in this article relied on the somewhat limited, albeit easy to use, .NET BinaryFormatter for writing and reading data to a file. It is not inconceivable, though challenging, to read and write individual bytes to overcome such limitations. Likewise, maybe the performance benefits of working with binary data are not as important as easily serializing large objects with JSON or some other string-based serialization technology.
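
To illustrate the JSON option mentioned above, here is a minimal sketch built on System.Text.Json. That library is an assumption on my part: it is not used by the demo and requires a newer runtime or package than the .NET Core 2.0 projects target. JsonCacheSerializer and SampleItem are hypothetical names.

```csharp
using System.Text.Json;

// Hypothetical type standing in for a cached object.
public class SampleItem
{
    public int Id { get; set; }
    public string Name { get; set; }
}

// Hypothetical helper, for illustration only.
public static class JsonCacheSerializer
{
    // Converts an object to UTF-8 encoded JSON bytes.
    public static byte[] Serialize<T>(T obj) =>
        JsonSerializer.SerializeToUtf8Bytes(obj);

    // Converts UTF-8 encoded JSON bytes back to an object.
    // data must hold exactly the serialized bytes; any trailing padding
    // read from a mapped view would need to be trimmed first.
    public static T Deserialize<T>(byte[] data) =>
        JsonSerializer.Deserialize<T>(data);
}
```

Unlike BinaryFormatter, this approach does not require the [Serializable] attribute, but it only round-trips public properties by default.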

Somewhat related to enhancing serialization is object version management. While not generally a concern for most caching solutions, the customized nature of MemoryMap lends itself to version checking if the need exists.

Time to Live (TTL)

Most caching solutions include some way of expiring cached contents. Implementing such a feature raises no significant design questions. Just add a date and time check, and voilà. The challenge becomes cleaning up the supporting file-based resources, as noted above when file management was discussed.
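
The date and time check itself can be as small as the sketch below. CacheExpiry is a hypothetical helper; where the written timestamp comes from is left open, and the backing file's last write time is one assumed source.

```csharp
using System;

// Hypothetical helper, for illustration only.
public static class CacheExpiry
{
    // True when more than timeToLive has elapsed since the entry was written.
    // All times are expected in UTC to avoid daylight-saving surprises.
    public static bool IsExpired(DateTime writtenUtc, TimeSpan timeToLive, DateTime utcNow) =>
        utcNow - writtenUtc > timeToLive;
}
```

A Load method could, for example, call CacheExpiry.IsExpired(File.GetLastWriteTimeUtc(MemoryMapFilePath), TimeSpan.FromMinutes(30), DateTime.UtcNow) and treat an expired entry as a cache miss.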

Conclusion

The sample caching application discussed in this article demonstrated a .NET MemoryMappedFile-based solution for efficiently sharing objects on the same node. It highlighted the basic mechanics and concerns for building a real-world version. Possibly the biggest challenge facing a developer is deciding which features to add, and recognizing when the totality of such additions suggests setting performance concerns aside and employing an existing, well-known caching solution.
